Roy K.K. Potential theory in applied geophysics


17.15 Global Optimization 609

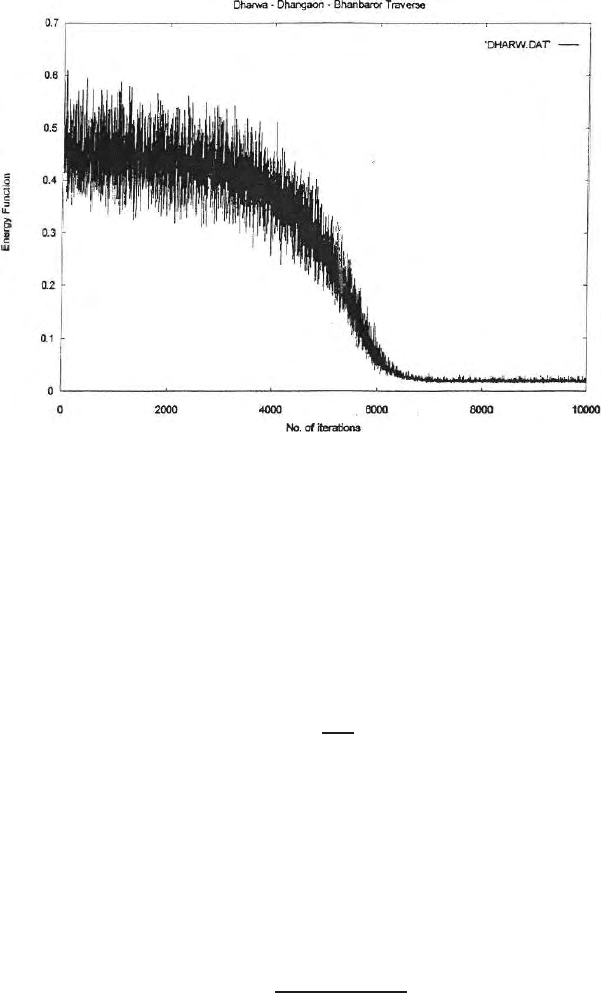

Fig. 17.13. Nature of convergence in VFSA inversion

10,000 iterations are needed for convergence. Because of the random jumps, the
probability of a solution getting trapped in a local minimum pocket is zero.
Once a forward problem is solved, the inverse problem is solved automatically. In
global search, there is no mathematics in an inverse problem, so stability is
guaranteed. However, the computation time required to handle 10,000 iterations
remains a problem. Geman and Geman (1984) showed that a necessary and
sufficient condition for convergence to the global minimum for Simulated
Annealing is given by the following cooling schedule

$$T(K) = \frac{T_0}{\ln K} \tag{17.198}$$

where $T(K)$ is the temperature at iteration $K$.

Fast Simulated Annealing (FSA)

Szu and Hartley (1987) proposed a new algorithm known as Fast Simulated

Annealing. This algorithm uses a Cauchy-like distribution, which shows a very
sharp peak at lower temperatures (Sen and Stoffa 1995). This Cauchy-like
distribution is a function of temperature and is given by

$$f(\Delta m_i) \propto \frac{T}{\left(\Delta m_i^2 + T^2\right)^{1/2}} \tag{17.199}$$

where $T$ is the temperature or the controlling parameter to be lowered as per
any specific cooling schedule. $\Delta m_i$ is the perturbation in the $i$th parameter

610 17 Inversion of Potential Field Data

model with respect to the present model. Szu and Hartley showed that, for
this choice of the perturbed-model generation scheme, the cooling schedule for
convergence is chosen as

$$T(K) = \frac{T_0}{K} \tag{17.200}$$

where $K$ is the iteration number. The Cauchy-type distribution is very
different in nature from the Gaussian-type distribution. At lower temperatures,
Cauchy-type peaks can be used to find the highest probability values
with greater sensitivity.
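The two cooling schedules above can be compared numerically. The following is a minimal sketch, assuming a starting temperature `t0` chosen purely for illustration; the function names are hypothetical and not taken from the text.

```python
import math


def sa_temperature(t0, k):
    """Logarithmic schedule of Eq. (17.198): T(K) = T0 / ln K (needs K >= 2)."""
    return t0 / math.log(k)


def fsa_temperature(t0, k):
    """Fast schedule of Eq. (17.200): T(K) = T0 / K (needs K >= 1)."""
    return t0 / k


# Illustrative comparison: the 1/K schedule cools far faster than 1/ln K.
t0 = 1.0  # assumed starting temperature
for k in (2, 10, 100, 1000, 10000):
    print(k, sa_temperature(t0, k), fsa_temperature(t0, k))
```

For any $K \ge 2$ the logarithmic schedule stays hotter than the $1/K$ schedule, which is why the Cauchy-like model generation of FSA is said to permit faster cooling.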

Very Fast Simulated Annealing (VFSA)

Ingber (1989, 1993) made several modifications to Simulated Annealing and
proposed a new algorithm known as Very Fast Simulated Annealing (VFSA).
Ingber proposed a new probability distribution for model generation such
that a slow cooling schedule is no longer required. The special features of this
algorithm are (i) each parameter can have a different type of discretization
and a different degree of perturbation, (ii) the temperature or the controlling
parameter may be different for different parameters, and (iii) the new probability
distribution replaces the Cauchy-type distribution, avoids slow cooling and
gives quick convergence of an inverse problem. Thus it became a very effective tool to
handle geophysical inverse problems and is used by many geophysicists to
solve 2D/3D inverse problems. A brief sketch of Ingber’s (1993) VFSA
model is

$$m_i^{k+1} = m_i^{k} + y_i \left(m_i^{\max} - m_i^{\min}\right) \tag{17.201}$$

where $m_i^{k}$ is the $i$th model parameter after iteration $k$, and this value is
within the upper and lower bounds, i.e., $m_i^{\min} \le m_i^{k} \le m_i^{\max}$.
$m_i^{k+1}$ is the model parameter after iteration $(k+1)$ and it is also within the
upper and lower bounds, i.e., $m_i^{\min} \le m_i^{k+1} \le m_i^{\max}$. The special parameter
$y_i$ is drawn from the distribution defined by Ingber (1993) as

$$g_T(y) = \prod_{i=1}^{NM} \frac{1}{2\left(|y_i| + T_i\right)\ln\left(1 + \frac{1}{T_i}\right)} = \prod_{i=1}^{NM} g_{T_i}(y_i). \tag{17.202}$$

Equation (17.202) has the following cumulative probability:

$$G_{T_i}(y_i) = \frac{1}{2} + \frac{\operatorname{sgn}(y_i)}{2}\,\frac{\ln\left(1 + \frac{|y_i|}{T_i}\right)}{\ln\left(1 + \frac{1}{T_i}\right)}. \tag{17.203}$$

Thus a random number $u_i$ drawn from a uniform distribution $U(0, 1)$ can
be mapped into the above distribution with the following formula


$$y_i = \operatorname{sgn}\left(u_i - \frac{1}{2}\right) T_i \left[\left(1 + \frac{1}{T_i}\right)^{|2u_i - 1|} - 1\right]. \tag{17.204}$$

The distribution of the global minimum can be statistically obtained using the cooling
schedule

$$T_i(k) = T_{0i} \exp\left(-C_i k^{1/NM}\right). \tag{17.205}$$

$T_i$ is the controlling parameter for the $i$th model parameter and $T_{0i}$ the initial
controlling parameter in the model. The value of $u_i$ varies randomly within
0 to 1, so that $y_i$ varies within $-1$ to $+1$. Sgn denotes the sign of the expression.
So model perturbation has
some relation with the probability distribution of the respective parameters.
Generally the Metropolis criterion is used here also for selection of the models
automatically. In global search, there is no mathematics in an inverse problem,
so stability is guaranteed. However, the computation time required to handle
10,000 iterations may be large, as mentioned. Geman and Geman (1984) have
shown the conditions for convergence of the model. The temperature is used
to decide on the acceptance criterion. VFSA also has the flexibility of changing
the cooling schedule. Figure 17.13 shows the nature of convergence in
VFSA applied to a two-dimensional DC resistivity problem. Convergence in an
inverse problem starts after several thousand iterations are over.
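The VFSA update can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the implementation used for Fig. 17.13: a single temperature is shared by all parameters and by the Metropolis test, out-of-bound trial values are simply redrawn, and the names `vfsa_draw`, `vfsa_temperature`, `vfsa_minimize` and the floor `T_FLOOR` are hypothetical.

```python
import math
import random

T_FLOOR = 1e-12  # assumed floor that keeps 1/T finite once exp() underflows


def vfsa_draw(u, t):
    """Map u in [0, 1] to y in [-1, 1] following Eq. (17.204)."""
    sign = 1.0 if u >= 0.5 else -1.0
    return sign * t * ((1.0 + 1.0 / t) ** abs(2.0 * u - 1.0) - 1.0)


def vfsa_temperature(t0, c, k, nm):
    """Cooling schedule of Eq. (17.205): T(k) = T0 exp(-C k^(1/NM))."""
    return t0 * math.exp(-c * k ** (1.0 / nm))


def vfsa_minimize(cost, lower, upper, n_iter=2000, t0=1.0, c=1.0, seed=0):
    """Minimise cost(model) inside the box [lower, upper]."""
    rng = random.Random(seed)
    nm = len(lower)
    model = [rng.uniform(lo, hi) for lo, hi in zip(lower, upper)]
    energy = cost(model)
    best, best_energy = list(model), energy
    for k in range(1, n_iter + 1):
        t = max(vfsa_temperature(t0, c, k, nm), T_FLOOR)
        trial = []
        for m, lo, hi in zip(model, lower, upper):
            # Eq. (17.201); redraw until the new value respects the bounds.
            while True:
                new = m + vfsa_draw(rng.random(), t) * (hi - lo)
                if lo <= new <= hi:
                    break
            trial.append(new)
        trial_energy = cost(trial)
        delta = trial_energy - energy
        # Metropolis criterion: always accept improvements, sometimes accept
        # a worse model with probability exp(-delta / T).
        if delta <= 0 or rng.random() < math.exp(-delta / t):
            model, energy = trial, trial_energy
            if energy < best_energy:
                best, best_energy = list(model), energy
    return best, best_energy
```

A call such as `vfsa_minimize(lambda m: sum((x - 1.0) ** 2 for x in m), [-5, -5], [5, 5])` runs the search on a simple quadratic misfit; in a geophysical problem `cost` would wrap the forward solver and the data misfit.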

17.15.4 Genetic Algorithm

Introduction

Genetic Algorithm (GA) is one of the powerful tools for global optimization. It is
also based on the principle of random walk in the parameter space. GA has
artificial intelligence like SA and can handle strongly nonlinear problems. GA
was discovered by Holland (1977) and was brought to the present stage of
development by Goldberg (1989) and Davis (1991). The procedure for the genetic
algorithm has some similarity with biological evolution and survival of
the fittest. Like Monte Carlo inversion and Simulated Annealing, GA does
not need any derivatives or curvature information. Therefore, once the forward
problem is solved, the inverse problem can be solved automatically because the
entire exercise is to choose some models, compute synthetic data, compare $d_{\mathrm{Pre}}$
with $d_{\mathrm{Obs}}$, compute the cost function or error function and go on choosing
better and better models through certain guidelines in deciding on the
acceptance criterion.

Unlike other approaches, GA works with a population of models. The larger
the number of models to start with, the more efficient the global search in
the parameter space will be. The basic guidelines for genetic algorithms are divided
into the following nine steps, viz.,

(i) Selection of an even number (N) of models from a large set of models.

(ii) Random generation of N/2 pairs.


(iii) Discretize the model parameters in a one, two or three dimensional
domain. In that process a continuous domain gets discretized.

(iv) Discretize each model parameter, fixing the upper and lower bounds. Here $m_i$
is the $i$th model parameter and $\rho_i$ is the number of discretized values
between the upper and lower bounds. The larger the value of $\rho_i$, the finer the
model resolution. $\rho_i$ may be the same or different for different parameters
of the model.

(v) Binary coding is done for each model parameter between the upper and lower
bounds in 0’s and 1’s, and bit strings, or chromosomes in GA language,
are generated.

(vi) Generation of concatenated strings.

(vii) Generation of randomly chosen pairs and crossovers with reasonably
high crossover probability are the next steps.

(viii) Mutation with reasonably low mutation probability is done next to
retain certain diversity in the models or to remove any bias.

(ix) Model updating, or the survival of the fittest test, is done next. This is
the last step, where the best models are chosen from the population.
The entire genetic operation is completed at this stage, and only the first
iteration of the genetic algorithm is over. With the updated
models the second iteration starts. The entire operation continues till
convergence is obtained up to a specified limit.

Selection

Since GA starts with a population of models instead of a single model, an arbitrary
set of 200 or 300 models is randomly generated using the random number
generator and the energy function or cost function or error function

$$E(m) = \frac{1}{NS}\left(d_{\mathrm{Obs}} - d_{\mathrm{Pre}}\right)^{t}\left(d_{\mathrm{Obs}} - d_{\mathrm{Pre}}\right) \tag{17.206}$$

is computed, where $d_{\mathrm{Obs}}$ and $d_{\mathrm{Pre}}$, as defined earlier, are the observed and predicted
field data, $t$ denotes the transpose and $NS$ is the number of data points. The Gibbs
probability distribution

$$P(m_{ij}) = \frac{\exp\left(-E(m_{ij})/T\right)}{\sum_{j=1}^{m} \exp\left(-E(m_{ij})/T\right)} \tag{17.207}$$

is computed. $T$, the temperature or the controlling parameter, is also used
these days for model search the way it is used in simulated annealing. In case
we decide to work with 50 initial models, we select the 50 models with relatively
higher probabilities from the arbitrary population of 300 models. For
one-dimensional problems, 10 to 20 models are good enough to start
the genetic process. For two-dimensional problems, an initial population of 50 to 100


should be appropriate. For three-dimensional problems, 100 to 1000 models
should be chosen to start with. One should remember that the larger the number
of initial models, the higher the computation time and the more efficient
the genetic algorithm process will be.
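The selection step can be illustrated with a short sketch, assuming that each model's misfit from Eq. (17.206) has already been computed by a forward solver. The function names are hypothetical, and subtracting the smallest misfit before exponentiation is an added numerical safeguard that leaves the probabilities of Eq. (17.207) unchanged.

```python
import math


def misfit(d_obs, d_pre):
    """Eq. (17.206): squared residual averaged over the NS data points."""
    return sum((o - p) ** 2 for o, p in zip(d_obs, d_pre)) / len(d_obs)


def gibbs_probabilities(energies, temperature):
    """Eq. (17.207): Gibbs probability of each model in the population."""
    e_min = min(energies)  # shift avoids underflow; the ratios are unchanged
    weights = [math.exp(-(e - e_min) / temperature) for e in energies]
    total = sum(weights)
    return [w / total for w in weights]


def select_fittest(models, energies, temperature, n_keep):
    """Keep the n_keep models with the highest Gibbs probability."""
    probs = gibbs_probabilities(energies, temperature)
    order = sorted(range(len(models)), key=lambda i: probs[i], reverse=True)
    return [models[i] for i in order[:n_keep]]
```

With 300 random models and `n_keep=50`, `select_fittest` mirrors the reduction from the arbitrary population to the working population described above.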

Discretization

Double discretization of the model space is done in two stages. In the first
stage, a discrete set of model parameters is assumed for an earth model
where the physical property might be varying continuously. The second stage
of discretization comes from fixing the upper and lower bounds for the model
parameters, i.e.

$$m_i = m_i^{\min} + J\,\Delta m_i \tag{17.208}$$

where $J$ varies from 0 to $N_i$ and

$$\Delta m_i = \frac{m_i^{\max} - m_i^{\min}}{N_i}. \tag{17.209}$$

So, for models having $M$ parameters, the finite number of possible models $N$
will be

$$N = \prod_{i=1}^{M} N_i. \tag{17.210}$$

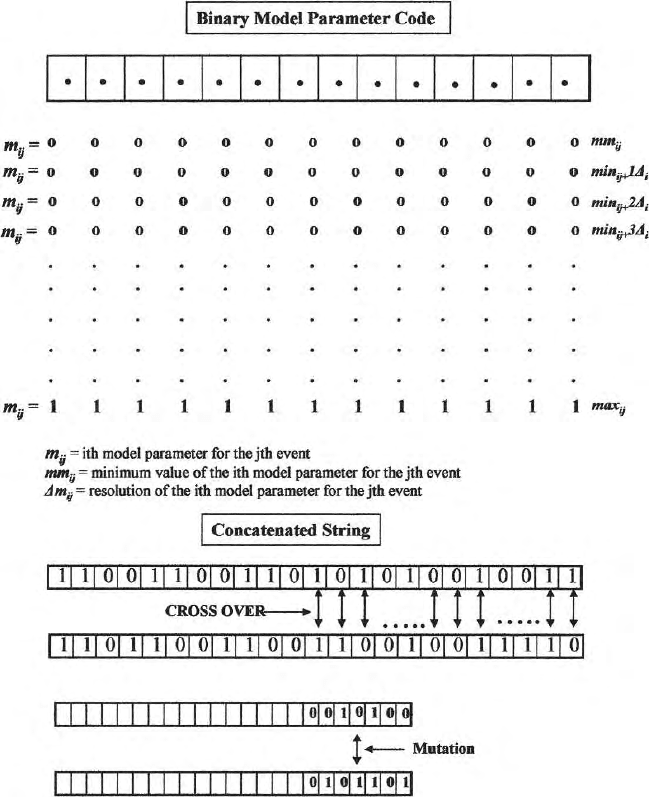

Parameter Coding

Q models are binary coded with 0’s and 1’s. Binary coding is
done to represent a model parameter such that all the bits become zero
at the minimum value of the parameter and all the bits become one at the
maximum value, as shown in Fig. 17.14. Depending upon the level of
resolution needed and the discretization done, a parameter can be a 3, 4,
5, ..., 9 bit string for a one-dimensional problem. For two- or three-dimensional
problems, the parameter can be a 15 to 20 bit string or even longer. These ‘bits’
are called ‘genes’, which can take a value of ‘0’ or ‘1’, called alleles. For a 7 bit
string, $2^7 - 1$ discrete values of the model parameter will be obtained. For
two-dimensional problems, 15 bit strings have been used with success. These 7 bit,
9 bit or 15 bit strings are called chromosomes. One chromosome is generated
for one parameter. They are attached one after the other to obtain binary
coded concatenated strings. The larger the number of bits in a chromosome, the longer
the concatenated string and the higher the computation time. For
two- or three-dimensional problems with a finite difference or finite element forward
problem, the concatenated strings will be very long because each element or
a group of elements will form one parameter.
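The coding of one parameter into a chromosome, and back, can be sketched as follows. This is an illustration only: it assumes $N_i = 2^{n} - 1$ steps for an $n$-bit chromosome, so that the all-zero string maps to the lower bound and the all-one string to the upper bound, and the function names are hypothetical.

```python
def encode(value, m_min, m_max, n_bits):
    """Return the n_bits chromosome of the grid value nearest to `value`."""
    levels = 2 ** n_bits - 1            # assumed number of steps N_i
    step = (m_max - m_min) / levels     # Eq. (17.209)
    j = round((value - m_min) / step)   # nearest grid index J
    j = max(0, min(levels, j))          # clamp to the bounds
    return format(j, "0{}b".format(n_bits))


def decode(bits, m_min, m_max):
    """Recover the parameter value from its chromosome via Eq. (17.208)."""
    levels = 2 ** len(bits) - 1
    step = (m_max - m_min) / levels
    return m_min + int(bits, 2) * step


def concatenate(chromosomes):
    """Join the chromosomes of all parameters into one model string."""
    return "".join(chromosomes)
```

For a parameter bounded between 10 and 100, `encode(10.0, 10.0, 100.0, 7)` gives the all-zero chromosome and `encode(100.0, 10.0, 100.0, 7)` the all-one chromosome.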


Fig. 17.14. Genetic Algorithm structure: (a) parameter coding and preparation of
concatenated string, (b) selection of pairs, (c) crossover, (d) mutation

Crossover

These binary coded strings (Fig. 17.14) are randomly paired to generate Q/2
pairs of models. At this stage the crossover starts at a randomly chosen crossover
point with a high crossover probability. Crossover can be a single-point or a
multipoint crossover. The exchange of bits or ‘genes’ takes place for all the bits
to the right of the crossover point (Fig. 17.14). The bits to the left of the crossover
point remain unaltered. For multipoint crossover also, the crossover is done


with randomly selected crossover points for each chromosome and for each
parameter. But the crossover or floor crossing of the bits takes place only for
the bits to the right of the crossover points. For two and three dimensional problems
multipoint crossover is advisable. For one dimensional problems single point
crossover works well. Genetic Algorithm is a very active area of research.
Therefore these procedures are also changing.
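Single-point crossover on two concatenated strings can be sketched as below. The default crossover probability of 0.9 is an assumed illustrative value, and the function name is hypothetical.

```python
import random


def single_point_crossover(parent_a, parent_b, p_cross=0.9, rng=random):
    """Swap all bits to the right of one random point with probability p_cross."""
    if len(parent_a) != len(parent_b):
        raise ValueError("parents must have the same length")
    if len(parent_a) < 2 or rng.random() >= p_cross:
        return parent_a, parent_b  # no crossover: offspring copy the parents
    point = rng.randint(1, len(parent_a) - 1)
    # Bits left of the point stay in place; bits right of it are exchanged.
    child_a = parent_a[:point] + parent_b[point:]
    child_b = parent_b[:point] + parent_a[point:]
    return child_a, child_b
```

A multipoint variant would apply the same exchange once per chromosome inside the concatenated string rather than once per string.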

Mutation

After crossover is over, mutation is done with a low mutation probability, just
to give a little diversity and to remove any kind of bias. Here floor crossing
(flipping) of one or two bits is done and the system is allowed to converge
(Fig. 17.14). A high mutation probability will delay the convergence and may lead to
Monte Carlo type inversion. The mutation probability is therefore always kept low.
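Mutation amounts to flipping each bit independently with a small probability. In this sketch the default value of 0.01 is an assumption chosen for illustration, and the function name is hypothetical.

```python
import random


def mutate(bits, p_mut=0.01, rng=random):
    """Flip each bit of the string independently with probability p_mut."""
    flipped = []
    for b in bits:
        if rng.random() < p_mut:
            flipped.append("1" if b == "0" else "0")
        else:
            flipped.append(b)
    return "".join(flipped)
```

Raising `p_mut` towards 0.5 makes each offspring nearly independent of its parents, which is the Monte Carlo limit mentioned above.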

Model update

This is the last genetic operation. This operation is also termed the survival
of the fittest test. Three or four different approaches are available in the
literature regarding the model update. In this chapter, Stochastic Remainder
Selection Without Replacement (SRSWR), as proposed by Goldberg (1989)
and as used by the author for inversion of one- or two-dimensional problems,
is discussed.

$Q/2$ pairs of offspring models are generated from $Q/2$ pairs of parents.
Each of these models has to pass through the survival of the fittest test. The
stronger models will survive and strengthen their position. The weaker models
are eliminated from the contest.

Each model has its own objective function or error function. The probabilities
of the models in this new generation are multiplied by the number of
models present. Some of these multiplied probabilities have integer parts,
which can be 1 or 2 or 3. Some of the members do not have an integer part. In
the selection for the next generation of new models, all the models having an integer
part will go first. If model no. 1 has the multiplied probability 2.5 (say),
then model no. 1 will go twice. If it is 3.4, then it will go thrice. Once all
the models having multiplied probability greater than 1 are taken care of,
the fractional parts of the multiplied probabilities are taken. Higher fractional
parts are given preference to get a berth in the next generation of models with
which the next genetic process will start. If a particular model
had multiplied probability 2.8 (say), then it gets two berths for the integer
part and one berth for its high fractional part. So this model gets three
berths to start the next genetic process. These are offspring models. Parent
models are destroyed after the crossover is completed.

If we start with 50 initial models, there will be 50 berths in the model
update. Who will occupy these berths, and how? The berths are given to the
models with higher multiplied probabilities. The stronger models may occupy


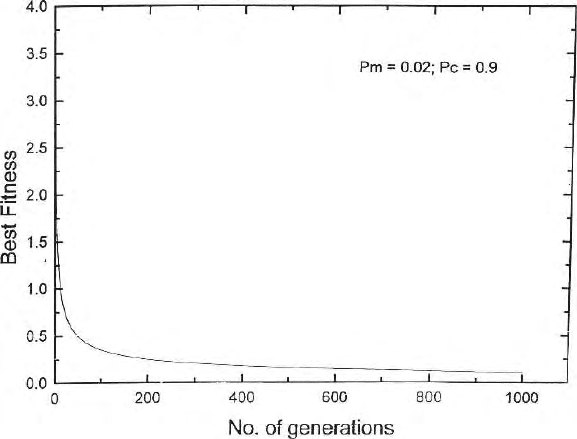

Fig. 17.15. Nature of convergence in two dimensional Genetic algorithm optimization

3 or 4 berths. Weaker models have to vacate their berths. Weaker models are
the models with lower probability, i.e., a higher error function or higher discrepancy
between the observed and predicted data.

Model updating continues this way in successive iterations till one gets
convergence (Fig. 17.15). Ultimately only the stronger models will occupy all
the berths. Since the parameter values change within a model in successive
crossovers, near convergence the parameter values in different models come quite
close to each other near the global minimum point. The number
of distinct models also reduces in successive iterations. The self-learning process, or the
artificial intelligence, takes the model search in the direction of high probability
density models and makes it a powerful tool.
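The berth allocation described in this section can be sketched as follows. The sketch follows the deterministic reading given in the text (integer parts first, then the largest fractional parts); it assumes the probabilities sum to one, and the function name is hypothetical.

```python
import math


def update_population(models, probabilities):
    """Allocate len(models) berths from the multiplied probabilities."""
    n = len(models)
    expected = [p * n for p in probabilities]         # multiplied probabilities
    copies = [int(math.floor(e)) for e in expected]   # berths from integer parts
    remaining = n - sum(copies)
    # Remaining berths go to the models with the largest fractional parts.
    by_fraction = sorted(range(n), key=lambda i: expected[i] - copies[i],
                         reverse=True)
    for i in by_fraction[:remaining]:
        copies[i] += 1
    next_generation = []
    for model, c in zip(models, copies):
        next_generation.extend([model] * c)
    return next_generation
```

With five models whose multiplied probabilities are 2.8, 1.2, 0.5, 0.3 and 0.2, the first model receives three berths (two for the integer part and one for the fraction 0.8), the second and third receive one berth each, and the last two are eliminated.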

17.16 Neural Network

17.16.1 Introduction

Neural Network, as the name suggests, mimics the functioning of the brain.
Artificial Neural Network (ANN) originated to simulate the complex behaviour of
the brain. Later it proved to be a versatile tool to be used in many
branches of science and engineering, viz., pattern recognition, global optimization,
noise reduction and classification, data compression, etc.


These are some of the areas where artificial neural networks can be and are
being used. This subject as such is vast and it is outside the scope of this
book. Here only a few basic points regarding the use of neural networks for
global optimization of geophysical data will be highlighted very briefly.

There are millions of structural elements within the brain called neurons.
Millions of synapses are formed to connect these neurons so that the brain
becomes a parallel computing system. Synapses are also structural
units, which are responsible for the interaction between the neurons. These
neurons with variable synaptic connections function as information processing
units in the human brain. In a neural network, the structure is made of
artificial neurons interconnected with each other. These artificial neural networks
are designed to function the way the brain does a particular job. The human brain
is an immensely superior system. An ANN is trained for a particular
job and it tries to do that job only. Hopfield’s (1975) procedure is discussed
briefly.

A neural network can be defined as follows: a neural network is a system
composed of many simple processing elements operating in parallel, whose
function is determined by the network structure and the strengths of the connecting links.
The processing is performed at the nodes or neurons. In a global optimization
problem, the structure of an ANN consists of an input layer, one or more hidden
layers and an output layer. The number of hidden layers can vary from 1 to
n, where the value of n will be problem dependent. For geophysical inverse
problems the input layer will contain the data and the output layer contains
all the model parameters obtained. Each of the nodes of the input layer is
connected with each of the nodes of the first hidden layer. Nodes of the input
layer are not connected to each other.

Each of the nodes of the output layer is connected to each of the nodes of
the nth hidden layer. That is how a neural net is constructed. The number of
data points of an inverse problem will dictate the number of nodes in the input
layer, and the number of parameters to be retrieved from a set of data will be
equal to the number of nodes in the output layer. How many hidden layers
and how many nodes are required in each hidden layer are decided through
repeated experiments while trying to solve a particular problem in a particular
field. The number of nodes in a hidden layer and the number of hidden layers in a
problem are highly variable parameters. Each of these connections between
the nodes is assigned a certain weight. These weights are initially selected
at random. Then the learning process or the training process starts. It starts
with known synthetic models for which both inputs and outputs are known.
The message about the discrepancy between the actual outputs and the outputs
obtained with randomly chosen synaptic weights is back propagated through
the perceptrons, and the weights are changed in successive iterations till the actual
output and computed output become more or less the same, within an already
prescribed minimum error level. This learning process is done several times
with noise free synthetic data and then with data mixed with Gaussian noise.


Say for one-dimensional direct current resistivity or magnetotelluric problems,
the neural net is trained for 2 to n hidden layers. The same program
trained for different models can be kept separately and used accordingly. The
artificial neural network acquires knowledge through this learning process, which
involves modification of the connecting weights. The acquired knowledge is
stored in the form of modified weights. Information processing occurs at the
nodes simultaneously and the weights are gradually modified till the error
function or cost function is minimized. Once a neural net is trained for a known
system, it is ready to take the field data or experimental data to find
the model. A neural net can function only for the purpose for which it is trained. A net trained
for some other models or for some other purpose will generate wrong results.
Therefore the interpreter has to choose the properly trained package. The choice
of the forward model (1D or 2D or 3D) will dictate the proper choice of the
package. Except for the random choice of the synaptic weights at the beginning of
the training or learning process, there is nothing random in the subsequent stages.
The functioning of the neural network is similar to gradient minimization in
an inverse problem.
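The idea of starting from random weights and reducing the output discrepancy by gradient steps can be shown with a deliberately simplified sketch. It uses a single layer of sigmoid nodes trained by the delta rule, which stands in for full back propagation through hidden layers; the learning rate, the data values and all function names are assumptions made for illustration.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def forward(weights, inputs):
    """Weighted sum at each output node followed by a sigmoid activation."""
    return [sigmoid(sum(w * d for w, d in zip(row, inputs))) for row in weights]


def squared_error(target, output):
    """Sum of squared differences between desired and computed outputs."""
    return sum((t - o) ** 2 for t, o in zip(target, output))


def train_step(weights, inputs, target, rate=0.5):
    """One gradient-descent update; returns the error before the update."""
    output = forward(weights, inputs)
    for j, row in enumerate(weights):
        # Negative gradient of (t - o)^2 with respect to the weighted sum.
        delta = 2.0 * (target[j] - output[j]) * output[j] * (1.0 - output[j])
        for i in range(len(row)):
            row[i] += rate * delta * inputs[i]
    return squared_error(target, output)


rng = random.Random(0)
inputs = [0.2, 0.7, 1.0]   # assumed synthetic "data" at three input nodes
target = [0.9, 0.1]        # assumed known "model parameters" at two output nodes
weights = [[rng.uniform(-0.5, 0.5) for _ in inputs] for _ in target]
errors = [train_step(weights, inputs, target) for _ in range(200)]
print(errors[0], errors[-1])  # the error shrinks as the weights are adjusted
```

Apart from the random initial weights, every step here is deterministic, which matches the remark that nothing is random after the start of training.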

17.16.2 Optimization Problem

The procedure is as follows:

Let the desired output values at different nodes be $m_1^{\mathrm{true}}, \ldots, m_M^{\mathrm{true}}$
for a known system, whereas the actual computed model parameters
for the known synthetic data, which passed through the random weights, are
$m_1^{\mathrm{Pre}}, \ldots, m_M^{\mathrm{Pre}}$. The discrepancy between the desired and computed
values of the model parameters generates the error function

$$\varepsilon_M = \sum_{i=1}^{M} \left(m_i^{\mathrm{true}} - m_i^{\mathrm{Pre}}\right)^2. \tag{17.211}$$

One obtains this $m_1^{\mathrm{Pre}}$ from all the connecting paths from the hidden layer(s).
Multichannel input comes from the hidden layers to the output layer
to generate a single output at a particular node. Since a neural network is a
parallel processing system, the same process continues at all the nodes with
different interactions with the nodes or neurons of the hidden layer, because
the interacting links and the synaptic weights are different. The processing
occurs at, say, the $j$th node, where the signals $d_i^{h}$ are coming from the different
input nodes. Each of these input signals is multiplied by its weight to
generate the output function

$$m_j = \sum_{i=1}^{n} W_{ji}\, d_i^{h}. \tag{17.212}$$

These output values $m_j$ pass through a nonlinear activation function, generally
a sigmoidal function, to produce the output $M_j$. Here