Kortum P. (ed.) HCI Beyond the GUI. Design for Haptic, Speech, Olfactory, and Other Nontraditional Interfaces


and when her feet are touching the ground. These cues also communicate

the relative motion of the person’s body (i.e., how the limbs move relative to

the torso).

Vestibular:

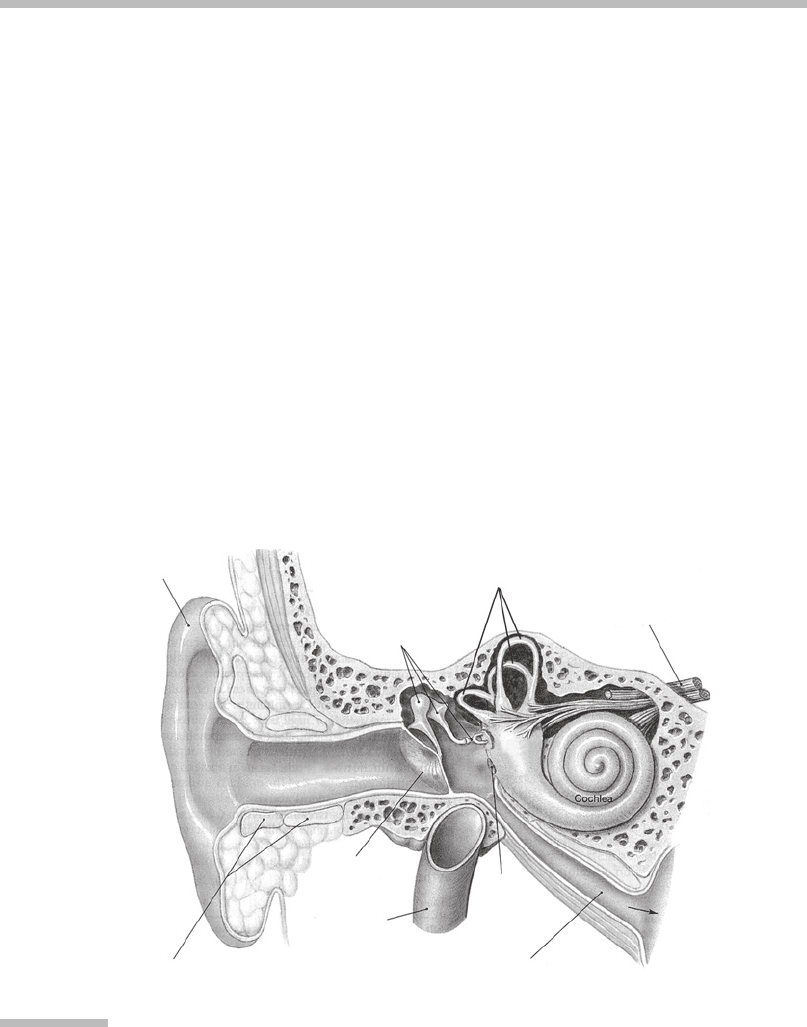

The vestibular system is able to sense motion of the head with respect to

the world. Physically, the system consists of labyrinths in the temporal bones of

the skull, just behind and between the ears. The vestibular organs are divided

into the semicircular canals (SCCs) and the saccule and utricle (Figure 4.4).

As a first-order approximation, the vestibular system senses motion by acting

as a three-axis rate gyroscope (measuring angular velocity) and a three-axis

linear accelerometer (Howard, 1986). The SCCs sense rotation of the head and

are more sensitive to high-frequency (quickly changing) components of motion

(above roughly 0.1 Hz) than to low-frequency (slowly changing) components.

Because of these response characteristics, it is often not possible to determine

absolute orientation from vestibular cues alone. Humans use visual information

to complement and disambiguate vestibular cues.

Visual:

Visual cues alone can induce a sense of self-motion, which is known as vection. The kinds of visual processing can be separated into landmark recognition (or piloting), where the person cognitively identifies objects (e.g., chairs, windows) in her visual field and so determines her location, and optical flow.

FIGURE 4.4 Vestibular system. A cut-away illustration of the outer, middle, and inner ear. Labeled structures: pinna, cartilage, external auditory canal, tympanic membrane, auditory ossicles, semicircular canals, vestibular complex, nerve, round window, auditory tube (to pharynx), and internal jugular vein. Source: Adapted from Martini (1999). Copyright © 1998 by Frederic H. Martini, Inc. Reprinted by permission of Pearson Education, Inc.

4 Locomotion Interfaces


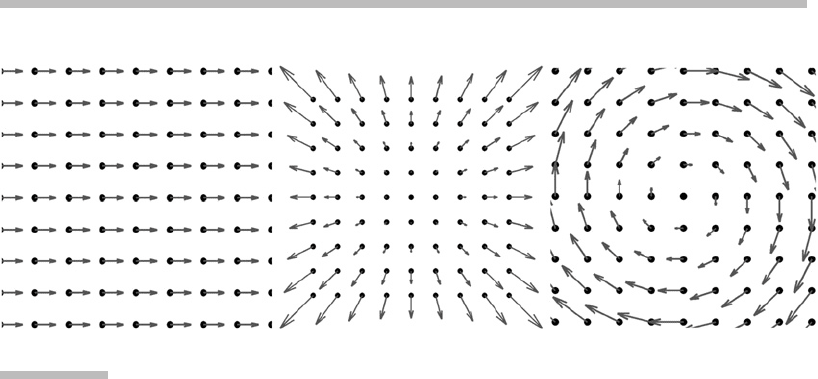

Optical flow is a low-level perceptual phenomenon wherein the movement of

light patterns across the retina is sensed. In most situations, the optical-flow

field corresponds to the motion field. For example, if the eye is rotating in

place, to the left, the optical-flow pattern is a laminar translation to the right.

When a person is moving forward, the optical-flow pattern radiates from a

center of expansion (Figure 4.5). Both optical flow and landmark recognition

contribute to a person’s sense of self-motion (Riecke, van Veen, & Bulthoff,

2002; Warren Jr. et al., 2001).
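The two flow patterns described in this paragraph can be reproduced with a simple pinhole-camera model. The following is a minimal sketch and is not taken from the chapter: the function names, the unit focal length, and the axis conventions (image x to the right, image y downward, positive yaw rate meaning a turn to the left) are assumptions made for illustration.

```python
def forward_flow(x, y, depth, speed):
    """Image-plane flow at (x, y) when the eye moves straight ahead.

    Pinhole model with focal length 1. A stationary point at distance
    `depth` appears to move directly away from the image center, which
    is the center of expansion.
    """
    return (x * speed / depth, y * speed / depth)


def yaw_flow(x, y, yaw_rate):
    """Image-plane flow at (x, y) when the eye rotates in place about
    the vertical axis.

    Assumed conventions: x grows to the right, y grows downward, and a
    positive yaw_rate (rad/s) means turning to the left. The flow does
    not depend on scene depth.
    """
    return (yaw_rate * (1.0 + x * x), yaw_rate * x * y)


# Sample the two fields on a small grid of image points.
grid = [(-0.2, -0.2), (0.0, 0.1), (0.3, 0.0), (0.2, -0.1)]
for x, y in grid:
    print((x, y), forward_flow(x, y, depth=2.0, speed=1.0), yaw_flow(x, y, 0.1))
```

Under these assumptions, forward motion gives vectors that point away from the image center and grow with distance from it, while a left turn gives vectors whose horizontal component is positive everywhere, that is, a nearly uniform drift to the right.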

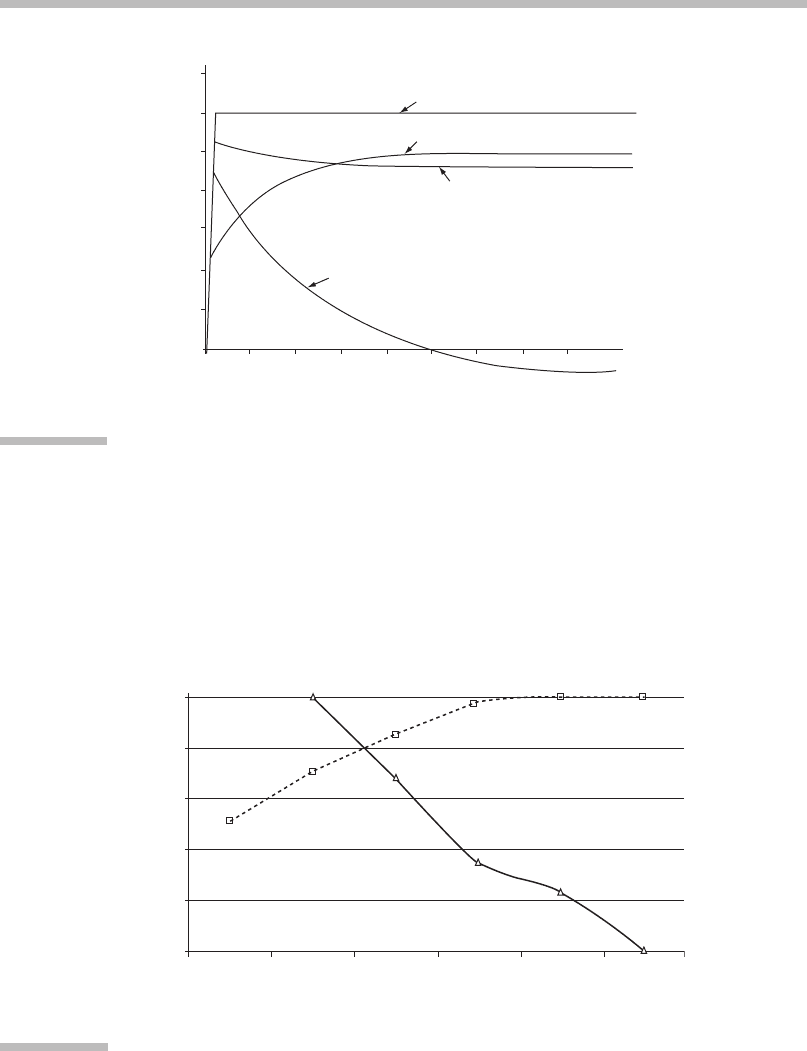

Visual and Vestibular Senses Are Complementary

As mentioned before, the vestibular system is most sensitive to high-frequency components of motion. On the other hand, the visual system is most sensitive to low-frequency components of motion. The vestibular and visual systems are complementary (Figure 4.6; see footnote 2). The crossover frequency of the two senses (Figure 4.7) has been reported to be about 0.07 Hz (Duh et al., 2004).
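One way to picture this complementarity is to treat the visual system as a low-pass filter and the vestibular system as a high-pass filter that share one corner frequency. The sketch below is only an illustration under that assumption: the chapter reports the crossover frequency but does not specify filter shapes, so the first-order responses and the function names here are hypothetical.

```python
import math

CROSSOVER_HZ = 0.07  # crossover frequency reported by Duh et al. (2004)


def visual_gain(freq_hz, fc=CROSSOVER_HZ):
    """Magnitude of a first-order low-pass response (assumed visual model)."""
    return 1.0 / math.sqrt(1.0 + (freq_hz / fc) ** 2)


def vestibular_gain(freq_hz, fc=CROSSOVER_HZ):
    """Magnitude of a first-order high-pass response (assumed vestibular model)."""
    ratio = freq_hz / fc
    return ratio / math.sqrt(1.0 + ratio ** 2)


for f in (0.01, 0.07, 0.8):
    print(f"{f:5.2f} Hz  visual={visual_gain(f):.2f}  vestibular={vestibular_gain(f):.2f}")
```

In this toy model the two gains are equal at the crossover frequency, the visual response dominates below it, and the vestibular response dominates above it.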

FIGURE 4.5 Three optical flow patterns. Left: Laminar translation resulting from turning the head left. Center: Radial expansion resulting from moving forward. Right: Circular flow resulting from rolling about the forward axis.

FIGURE 4.6 Contribution of the visual and vestibular (or inertial) systems to perceiving a step function in angular velocity. The vestibular system detects the initial, high-frequency step, whereas the visual system perceives the sustained, low-frequency rotation. Plot: angular velocity (degrees) versus time (s), from 0 to 40 s; curves: stimulus, perceived with visual and inertial cues, perceived with visual cues only, perceived with inertial cues only. Source: Adapted from Rolfe and Staples (1988). Reprinted with the permission of Cambridge University Press.

FIGURE 4.7 Crossover frequency of visual and vestibular senses. Visual (solid line) and vestibular (dashed line) responses as a function of motion frequency. Plot: normalized relative response (0.00 to 1.00) versus frequency (Hz), from 0.01 to 0.80. Source: Adapted from Duh et al. (2004); © Dr. Donald Parker and Dr. Henry Been-Lirn Duh.

Footnote 2: This has a striking similarity to the hybrid motion trackers mentioned in Section 4.2.1, which use accelerometers and gyros to sense high-frequency components of motion while correcting for low-frequency drift by using low-frequency optical or acoustic sensors.

4.1 Nature of the Interface

Combining Information from Different Senses into a Coherent Self-Motion Model

Each sensory modality provides information about a particular quality of a person’s motion. These pieces of information are fused to create an overall sense of

self-motion. There are two challenges in this process. First, the information must

be fused quickly so that it is up to date and relevant (e.g., the person must know

that and how she has tripped in time to regain balance and footing before hitting

the ground). Second, the total information across all the sensory channels is often

incomplete. One theory states that, at any given time, a person has a model or hypothesis of how she and surrounding objects are moving through the world. This model is based on assumptions (some of which are conscious and cognitive, while others are innate or hardwired) and previous sensory information. New incoming sensory cues are evaluated in terms of this model, rather than new models being continuously constructed from scratch. The model is updated when new information arrives.

Consider the familiar example of a person on a stopped train that is beside another stopped train. When the train on the adjacent track starts to move, the person might have a short sensation that her train has started moving instead. This brief, visually induced illusion of self-motion (vection) is consistent with all of her sensory information thus far. When she looks out the other side of her train and notices the trees are stationary (relative to her train), she has a moment of disorientation or confusion and then, in light of this new information, she revises her motion model such that her train is now considered stationary. In short, one tends to perceive what one is expecting to perceive. This is an explanation for why so many visual illusions work (Gregory, 1970). An illusion is simply the brain’s way of making sense of the sensory information; the brain builds a model of the world based on assumptions and sensory information that happen to be wrong (Berthoz, 2000).

For a visual locomotion interface to convincingly give users the illusion that they are moving, it should minimize cues that are inconsistent with the (false) belief that they are moving. For example, a small display screen gives a narrow field

of view of the virtual scene. The users see the edge of the screen and much of the

real world; the real world is stationary and thus inconsistent with the belief that

they are moving. A large screen, on the other hand, blocks out more of the real

world, and makes the illusion of self-motion more convincing.

Perception is an active process, inseparably linked with action (Berthoz,

2000). Because sensory information is incomplete, one’s motion model is constantly tested and revised via interaction with and feedback from the world. The

interplay among cues provides additional self-motion information. For example,

if a person sees the scenery (e.g., she is standing on a dock, seeing the side of a

large ship only a few feet away) shift to the left, it could be because she herself

turned to her right or because the ship actually started moving to her left.

If she has concurrent proprioceptive cues that her neck and eyes are turning to

the right, she is more likely to conclude that the ship is still and that the motion

in her visual field is due to her actions. The active process of self-motion perception relies on prediction (of how the incoming sensory information will change

because of the person’s actions) and feedback. Locomotion interfaces must

maintain such a feedback loop with the user.


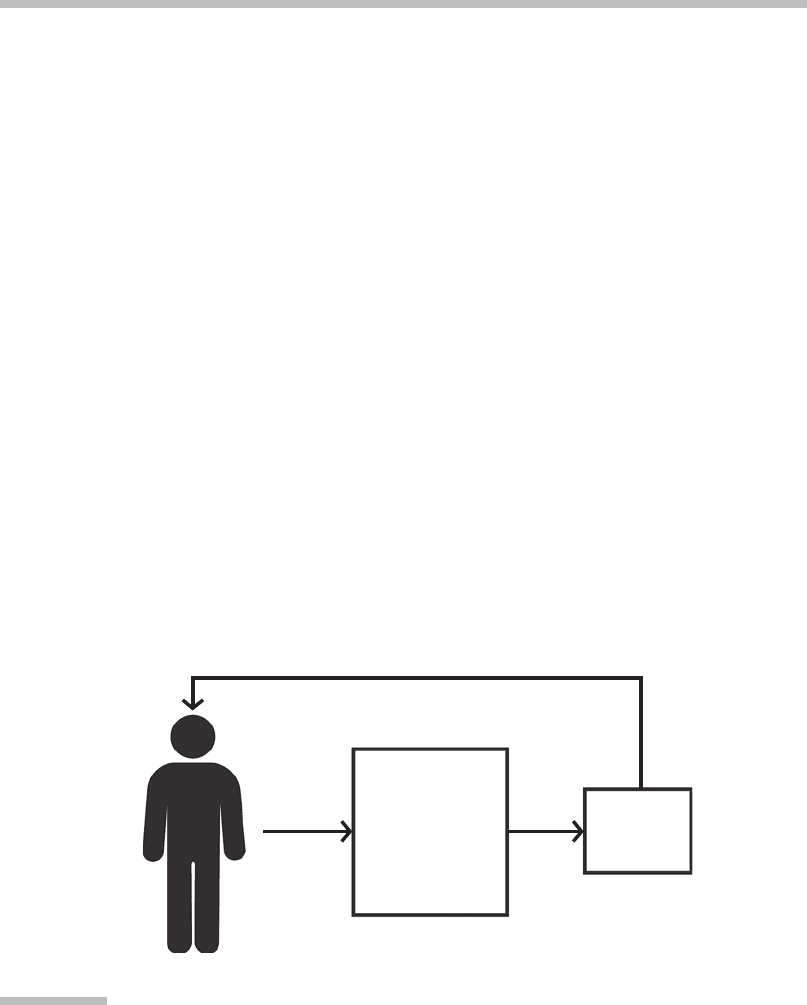

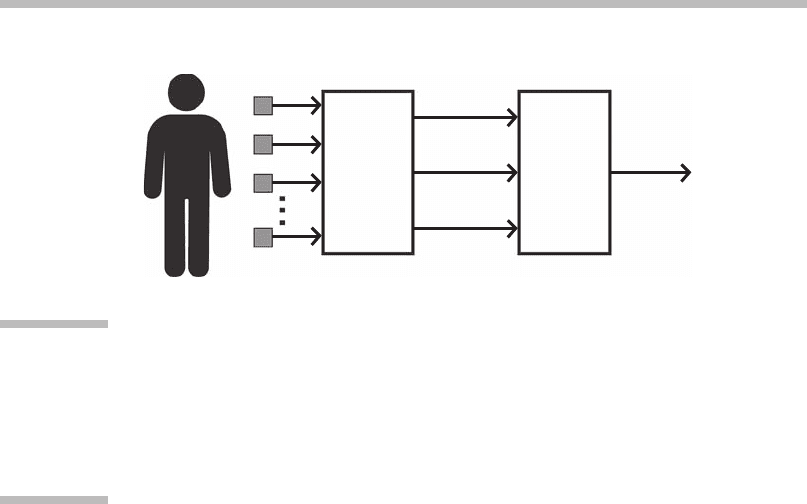

4.1.2 Locomotion Interfaces as Interaction Loops

In the case of a whole-body virtual locomotion interface, the inputs to the interface are the pose of one or more parts of the user’s body, and the output of the interface is the point of view (POV) in the virtual scene. The change in eye position between samples is a vector quantity that includes both direction and magnitude. The primary mode of feedback in most virtual locomotion systems is visual: What you see changes when you move. In the locomotion system, the change in POV causes the scene to be (re)drawn as it is seen from the new eye position, and then the user sees the scene from the new eye point and senses that she has moved. Figure 4.8 shows the interaction loop that is repeated each time the pose is sampled and the scene redrawn.
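The loop described in this paragraph can be sketched in a few lines. This is a minimal illustration rather than the authors’ implementation: `PointOfView`, `run_loop`, and the callback parameters are hypothetical names, and the toy usage assumes that each pose sample is already a forward step length in meters.

```python
from dataclasses import dataclass


@dataclass
class PointOfView:
    """Eye position in the virtual scene (orientation omitted for brevity)."""
    x: float = 0.0
    y: float = 0.0
    z: float = 0.0

    def moved_by(self, delta):
        """Return a new POV displaced by the vector delta = (dx, dy, dz)."""
        dx, dy, dz = delta
        return PointOfView(self.x + dx, self.y + dy, self.z + dz)


def run_loop(pose_samples, pose_to_delta, render):
    """Repeat the sample -> change POV -> redraw cycle once per pose sample."""
    pov = PointOfView()
    for pose in pose_samples:
        delta = pose_to_delta(pose)  # locomotion interface: pose -> change in POV
        pov = pov.moved_by(delta)    # move the eye point
        render(pov)                  # display system: redraw, giving visual feedback
    return pov


# Toy usage: three pose samples, each interpreted as a forward step (assumption).
frames = []
final = run_loop([0.0, 0.5, 0.5], lambda step: (0.0, 0.0, step), frames.append)
print(final, len(frames))
```

Passing the pose-to-displacement mapping and the renderer in as functions keeps the loop itself independent of any particular sensor or display technology.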

Delving a level deeper, a locomotion interface must detect whether it is the user’s intent to be moving or not, and, if moving, determine the direction and speed of the intended motion and initiate a change in POV. Figure 4.9 shows this as a two-step process. First, sensors of various types (described in more detail in the next section) are used to capture data such as the position and orientation of parts of the user’s body, or to capture information about the speed and/or acceleration of the motion of parts of the user’s body. These data are processed to generate signals that specify whether the user is moving, the direction of the motion, and the speed of the movement. Those signals are interpreted and changed into a form that specifies how the POV is moved before the scene is next rendered. We show examples of this in the case study (available at www.beyondthegui.com).
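As a rough illustration of the two-step process, the sketch below first reduces raw sensor readings to the three signals (is moving, direction, speed) and then converts those signals into a POV displacement for one frame. Everything specific here is an assumption for the sake of the example: the choice of heel speed and torso yaw as inputs, the threshold and gain values, and the function names are hypothetical and are not taken from the case study.

```python
import math


def process_sensors(heel_speed, torso_yaw_rad, speed_threshold=0.1, gain=1.0):
    """Step 1: turn raw sensor data into (is_moving, direction, speed).

    heel_speed      -- hypothetical heel speed reading in m/s
    torso_yaw_rad   -- hypothetical torso heading in radians (0 = straight ahead)
    speed_threshold -- assumed cutoff below which the user is treated as still
    """
    is_moving = abs(heel_speed) > speed_threshold
    direction = (math.sin(torso_yaw_rad), math.cos(torso_yaw_rad))  # unit vector (x, z)
    speed = gain * abs(heel_speed) if is_moving else 0.0
    return is_moving, direction, speed


def to_delta_pov(is_moving, direction, speed, dt):
    """Step 2: interpret the signals as the POV displacement for one frame."""
    if not is_moving:
        return (0.0, 0.0)
    return (direction[0] * speed * dt, direction[1] * speed * dt)


# One frame of 0.1 s while the user steps briskly and faces straight ahead.
delta = to_delta_pov(*process_sensors(heel_speed=1.0, torso_yaw_rad=0.0), dt=0.1)
print(delta)
```

Keeping the two steps separate means the signal-processing stage can be swapped for a different sensor arrangement without touching the code that moves the POV.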

FIGURE 4.8 Data about the user’s body pose are inputs to the interface. The interface computes the direction and distance of the virtual motion from the inputs. The direction and distance, in turn, specify how the POV (from which the virtual scene is drawn) moves. By displaying the scene from a new POV, the interface conveys, to the user, that she has moved. Based on this feedback, she, in turn, updates her model of space and plans her next move. Diagram: sensed pose information enters the locomotion interface (Is moving? Which direction? How far/fast?), which passes a change in POV to the display system; the display provides feedback to the user.


4.2 TECHNOLOGY OF THE INTERFACE

Whole-body locomotion interfaces require technology to sense user body position and movement, and a display to feed back the results of the locomotion to the user.

4.2.1 Pose and Motion Sensors

Sensors that measure and report body motions are often called trackers. Trackers can measure the position and orientation of parts of the body, or can measure body movement (e.g., displacement, rotational velocity, or acceleration). Trackers come in a myriad of form factors and technologies. Most have some pieces that are attached to parts of the user’s body (e.g., the head, hands, feet, or elbows) and pieces that are fixed in the room or laboratory. A detailed overview of tracking technology can be found in Foxlin (2002). Common categories of tracking systems are trackers with sensors and beacons (including full-body trackers) and beaconless trackers.

Trackers with Sensors and Beacons

One class of tracker, commonly used in virtual-reality systems, has one or more sensors worn on the user’s body, and beacons fixed in the room. Beacons can be active, such as blinking LEDs, or passive, such as reflective markers. Trackers with sensors on the user are called inside-looking-out because the sensors look out to the beacons. Examples are the InterSense IS-900, the 3rdTech HiBall-3100, and the Polhemus LIBERTY. Until recently, body-mounted sensors were often connected to the controller and room-mounted beacons with wires carrying power and data; new models are battery powered and wireless.

In inside-looking-out systems, each sensor reports its pose relative to the room. Many virtual-environment (VE) systems use a sensor on the user’s head to establish which way the head is pointing (which is approximately the way the user is looking) and a sensor on a handheld device that has buttons or switches that allow the user to interact with the environment. In Figure 4.3, the sensor on the top of the head is visible; the sensor for the hand controller is inside the case. A sensor is required on each body part for which pose data are needed. For example, if a locomotion interface needs to know where the feet are, then users must wear sensors or beacons on their feet. Figure 4.10 shows a walking-in-place user with sensors on her head, torso, and below the knee of each leg. The tracker on the head determines the user’s view direction; the torso tracker determines which way she moves when she pushes the joystick away from her; the trackers at the knees estimate the position and velocity of the heels, and those data are used to establish how fast the user moves.

FIGURE 4.9 Details of virtual-locomotion interface processing. The sensors measure position, orientation, velocity, and/or acceleration of body parts. Signal processing of the sensor data determines whether the user intends to move and determines the direction and speed of her intended movement. Speed and direction are interpreted and converted into changes in the POV. Diagram: sensors on or around the user feed sensor signal processing, which outputs three signals (Is moving? Move direction, Move speed); these signals are interpreted and converted to Δ POV.

FIGURE 4.10 Sensors on a user of a walking-in-place locomotion interface. This system has a head position and orientation sensor on the back of the headset, a torso orientation sensor hanging from the neck, knee position sensors, and pressure sensors under each shoe. (Photo courtesy of the Department of Computer Science, UNC at Chapel Hill.)

Sensor-beacon trackers can be built using any one of several technologies. In magnetic trackers, the beacons create magnetic fields and the sensors measure the direction and strength of the magnetic fields to determine location and orientation. In optical trackers, the beacons are lights and the sensors act as cameras. Optical trackers work a bit like celestial navigation: the sensors can measure the angles to three or more stars (beacons) of known position, and then the system triangulates to find the position of the sensor. Acoustic trackers measure position with ultrasonic pulses and microphones.
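The triangulation idea can be sketched in two dimensions. The example below is a simplified illustration, not how any particular commercial tracker works: it assumes the sensor’s orientation is already known, so each measured angle is a world-frame bearing toward a beacon, and it solves for position by least squares. The function name and the sample beacon layout are hypothetical.

```python
import math


def locate_sensor(beacons, bearings):
    """Least-squares 2D position from bearings to beacons of known position.

    beacons  -- list of (x, y) beacon positions
    bearings -- world-frame angle (radians) from the sensor toward each beacon
    Assumes the sensor's orientation is known and that at least two of the
    lines of sight are not parallel.
    """
    a11 = a12 = a22 = b1 = b2 = 0.0
    for (bx, by), theta in zip(beacons, bearings):
        nx, ny = -math.sin(theta), math.cos(theta)  # normal to the line of sight
        c = nx * bx + ny * by                       # sensor lies on the line n . p = c
        a11 += nx * nx
        a12 += nx * ny
        a22 += ny * ny
        b1 += nx * c
        b2 += ny * c
    det = a11 * a22 - a12 * a12
    # Solve the 2x2 normal equations for the sensor position.
    return ((a22 * b1 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det)


# Simulate a sensor at a known spot, then recover that spot from the bearings.
true_position = (1.0, 2.0)
beacons = [(0.0, 5.0), (4.0, 6.0), (3.0, 0.0)]
bearings = [math.atan2(by - true_position[1], bx - true_position[0])
            for bx, by in beacons]
estimate = locate_sensor(beacons, bearings)
print(estimate)
```

With noise-free bearings the estimate matches the true position; with noisy bearings, using more than two beacons lets the least-squares solution average out some of the error.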

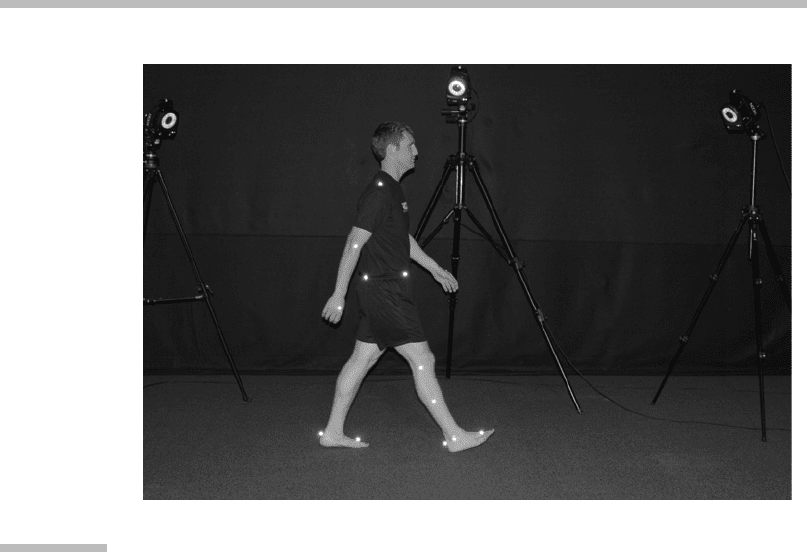

Another class of trackers is called

outside-looking-in

because the beacons are

on the user and the sensors are fixed in the room. Camera-based tracker systems

such as the WorldViz Precision Position Tracker, the Vicon MX, and the Phase-

Space IMPULSE system are outside-looking-in systems and use computer vision

techniques to track beacons (sometimes called markers in this context) worn by

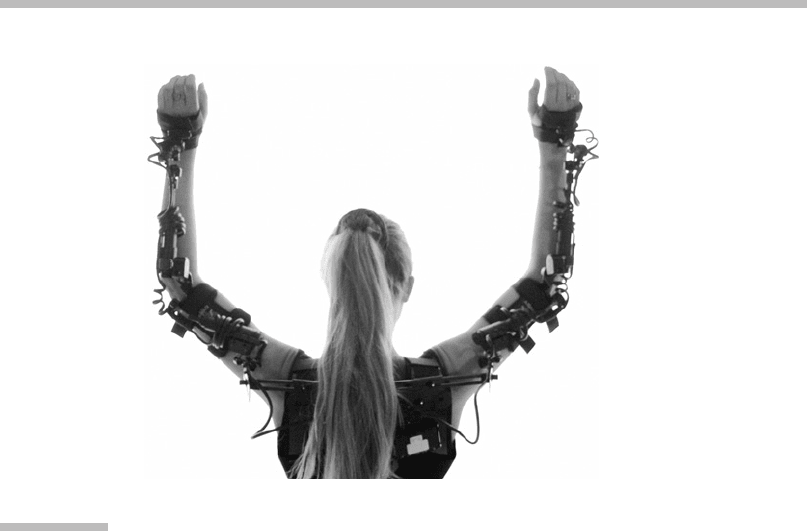

the user. This technology is common in whole-body trackers (also called

motion

capture systems

) where the goal is to measure, over time, the path of tens of mar-

kers. Figure 4.11 shows a user wearing highly reflective foam balls and three cam-

eras on tripods. Motion capture systems can also be built using magnetic,

mechanical (Figure 4.12 on page 121), and other nonoptical technologies. Motion

capture systems often require significant infrastructure and individual calibration.

Beaconless Trackers

Some tracking technologies do not rely on beacons. Some, for example, determine the orientation of the body part from the Earth’s magnetic field (like a compass), or the Earth’s gravitational field (a level-like tilt sensor). Others are inertial sensors: they measure linear acceleration with accelerometers and/or rotational velocity with gyroscopes. Some inertial tracking systems integrate the measured acceleration twice to compute position or displacement, and integrate the rotational velocity to compute orientation. However, this computation causes any errors in the acceleration measurement to accumulate into rapidly increasing errors in the position data (called drift). Some tracker systems, such as the InterSense IS-900, correct for accelerometer drift by augmenting the inertial measurements with measurements from optical or acoustic trackers. Such systems with two (or more) tracking technologies are called hybrid trackers.
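A small simulation shows why double integration drifts and how a second tracking technology can rein it in. For a constant accelerometer bias b, integrating twice gives a position error of roughly b·t²/2, so the error grows quadratically with time. The sketch below is an illustration under stated assumptions, not the algorithm of any real tracker: the bias value, the `blend` weight, and the availability of a drift-free reference position at every sample are all hypothetical.

```python
def integrate_position(accels, dt):
    """Integrate acceleration twice (simple Euler steps) to get position."""
    velocity = position = 0.0
    track = []
    for a in accels:
        velocity += a * dt
        position += velocity * dt
        track.append(position)
    return track


def hybrid_position(accels, reference, dt, blend=0.05):
    """Same integration, but nudge each estimate toward a drift-free
    reference position (for example, an optical or acoustic fix).

    blend -- assumed weight given to the reference at each sample
    """
    velocity = position = 0.0
    track = []
    for a, ref in zip(accels, reference):
        velocity += a * dt
        position += velocity * dt
        position += blend * (ref - position)  # low-frequency correction
        track.append(position)
    return track


# Ten seconds of data from a sensor that is actually standing still:
# the true acceleration is zero, so only the bias is measured.
dt, steps, bias = 0.01, 1000, 0.01
measured = [bias] * steps
inertial_only = integrate_position(measured, dt)
hybrid = hybrid_position(measured, [0.0] * steps, dt)
print(f"inertial-only error after 10 s: {inertial_only[-1]:.3f} m")
print(f"hybrid error after 10 s:        {hybrid[-1]:.3f} m")
```

This correction is deliberately simplistic: it adjusts only the position estimate and leaves the velocity error in place, so a practical hybrid tracker would need a more complete filter. It is still enough to show the reference measurement keeping the error far smaller than inertial integration alone.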

Trade-offs in Tracking Technologies

Each type of tracker has advantages and disadvantages. Wires and cables are a usability issue. Many magnetic trackers are susceptible to distortions in magnetic fields caused by metal objects in the room (e.g., beams, metal plates in the floor, other electronic equipment). Some systems require recalibration whenever the metal objects in the room are moved. Optical systems, on the other hand, require that the sensors have a direct line of sight to the beacons. If a user has an optical sensor on her head (pointing at a beacon in the ceiling) and bends over to pick something off the floor, the head-mounted sensor might not be able to “see” the ceiling beacon anymore and the tracker will no longer report the pose of her head.

Other Motion Sensors

In addition to position-tracking motion sensors, there are sensors that measure other, simpler characteristics of the user’s body motion. Examples include pressure sensors that report if, where, or how hard a user is stepping on the floor. These sensors can be mounted in the shoes (Yan, Allison, & Rushton, 2004) or in the floor itself. The floor switches can be far apart, as in the mats used in Konami Corporation’s Dance Dance Revolution music video game (Figure 4.13), or closer together to provide higher positional resolution (Bouguila et al., 2004).
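To make the idea of a pressure sensor reporting “if” a user is stepping more concrete, the following sketch detects foot strikes in a stream of pressure readings. It is a hypothetical example: the normalized reading scale, the two hysteresis thresholds, and the function name are assumptions, and real shoe or floor sensors would need calibration.

```python
def detect_steps(pressure, press_on=0.6, press_off=0.4):
    """Return the sample indices at which a foot strike begins.

    pressure  -- sequence of normalized readings (0 = no load, 1 = full load)
    press_on  -- assumed level at which the foot counts as down
    press_off -- assumed level at which the foot counts as lifted; using a
                 lower value than press_on (hysteresis) keeps noise near a
                 single threshold from registering as several steps
    """
    steps = []
    foot_down = False
    for i, p in enumerate(pressure):
        if not foot_down and p >= press_on:
            foot_down = True
            steps.append(i)
        elif foot_down and p <= press_off:
            foot_down = False
    return steps


samples = [0.0, 0.2, 0.7, 0.9, 0.5, 0.65, 0.3, 0.1, 0.8, 0.2]
print(detect_steps(samples))
```

In the sample data, the dip to 0.5 and the rise back to 0.65 while the foot is still loaded do not count as a new step, because the reading never fell to the release threshold.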

FIGURE 4.11 Optical motion capture system. In this system, the user wears foam balls that reflect infrared light to the cameras mounted on the tripods. The bright rings are infrared light sources. Cameras are embedded in the center of the rings. (Courtesy of Vicon.)


4.2.2 Feedback Displays

As users specify how they want to move, the system must provide feedback via a display to indicate how and where they are moving. Feedback closes the locomotion interface loop (Figure 4.8). Display is a general term, and can refer to visual displays as well as to means of presenting other stimuli to other senses.

Many locomotion interfaces make use of head-worn visual displays, as in Figure 4.3, to provide visual feedback. These are often called head-mounted displays (HMDs). With such a display, no matter how the user turns her body or head, the display is always directly in front of her eyes. On the negative side, many HMDs are heavy to wear and the cables may interfere with the user’s head motion.

Another type of visual display has a large flat projection screen or LCD

or plasma panel in front of the user. These displays are usually of higher image

quality (e.g., greater resolution, higher contrast ratio, and so on) than HMDs.

However, if the user physically turns away from the screen (perhaps to look

around in the virtual scene or to change her walking direction), she no longer

has visual imagery in front of her.

To address this problem, several systems surround the user with projection displays. One example of this is a CAVE, which is essentially a small room where one or more walls, the floor, and sometimes the ceiling, are display surfaces (Figure 4.14). Most surround-screen displays do not completely enclose the user, but some do.

FIGURE 4.12 Mechanical motion capture system. This mechanical full-body system is produced by SONALOG. (Courtesy of SONALOG.)
