He M., Petoukhov S. Mathematics of Bioinformatics: Theory, Methods and Applications

Подождите немного. Документ загружается.

SEQUENCE ALIGNMENT 73

and

Lij Lij sabLijsa

Lij

ij i

, max , , , , , , ,

,

()

=−−

()

+

()

−

()

+−

()

{

−

()

011 1

1

++−

()

≤≤ ≤≤

}

sb im jn

j

,,11

where s ( x , y ) ≥ 0 if x and y match; s ( x , y ) ≤ 0 if x and y do not match or one

of them is a blank.

Then

Ljl Sa a b b i j m k l n

ijkl

, max , , ,

()

=

()

≤≤≤ ≤ ≤≤

{}

011

Each maximal entry L ( j * , l * ) of the array L corresponds to an optimal local

alignment of the sequences a and b .

Example 3.2 Consider the sequences GGTATGG and CCCTTTTCCC and

score the matches with the value of 5, mismatches with the value of − 4, and

indels with the values of − 7. The matrix L is then given as follows:

The optimal local alignment score for these two sequences is L (5, 6) = 6. This

score leads to the optimal local alignment

TAT

TTT

Global – Local Alignment

Global – local alignment ( hybrid alignment ) compares a sequence with the

subsequences of another sequence. This can be especially useful when the

downstream part of one sequence overlaps with the upstream part of the other

sequence. In this case, neither global nor local alignment is entirely appropri-

ate: A global alignment would attempt to force the alignment to extend beyond

L

i

j

0

—

1

C

2

C

3

C

4

T

5

T

6

T

7

C

8

C

9

C

0 — 0 0 0 0 0 0 0 0 0 0

1 G 0 0 0 0 0 0 0 0 0 0

2 G 0 0 0 0 0 0 0 0 0 0

3 T 0 0 0 0 5 5 5 0 0 0

4 A 0 0 0 0 0 1 1 1 0 0

5 T 0 0 0 0 5 5 6 0 0 0

6 G 0 0 0 0 0 2 1 2 0 0

7 G 0 0 0 0 0 0 0 0 0 0

74 BIOLOGICAL SEQUENCES, SEQUENCE ALIGNMENT, AND STATISTICS

the region of overlap, while a local alignment might not fully cover the region

of overlap (Lipman et al., 1984 ). Here we present an optimal global – local

alignment.

Let a = a

1

a

2

…

a

m

and b = b

1

b

2

…

b

n

be two sequences of different length

over the alphabet Σ . Here we let m ≤ n . The problem is to fi nd the maximum

matching of the shorter sequence with the longer one. That is, fi nd

HSbbklm

kl

ab a, max ,

()

=

()

≤≤≤

{}

1

Theorem 3.4 (Optimal Global – Local Alignment) Let a = a

1

a

2

…

a

m

and

b = b

1

b

2

…

b

n

be two sequences over the alphabet Σ . Defi ne

Hj jm00 0,,

()

=≤≤

Hi sa i n

k

k

i

,,,00

1

()

=−

()

≤≤

=

∑

and

Hij Hij sabHijsa

Hij s

ij i

,max , ,, , ,,

,

()

=−−

()

+

()

−

()

+−

()

{

−

()

+

11 1

1 −−

()

≤≤ ≤≤

}

,,bimjn

j

11

where s ( x , y ) ≥ 0 if x and y match; s ( x , y ) ≤ 0 if x and y do not match or one

of them is a blank.

Then

Hij Sa a b b i m k j n

iik j

,max , ,

()

=

()

≤≤ ≤ ≤≤

{}

11

In particular,

HHmjjnab, max ,

()

=

()

≤≤

{}

1

Example 3.3 Consider the sequences AUUA and UAAUAAU and score the

matches with a value of 5 and mismatches and indels with a value of − 4. The

matrix H is given as follows:

H

i

j

0

—

1

U

2

A

3

A

4

U

5

A

6

A

7

U

0 — 0 0 0 0 0 0 0 0

1 A

− 4 − 4

5 5 1 5 5 1

2 U

− 8

1 1 1 10 6 2 10

3 U

− 12 − 3 − 3 − 3

6 6 2 7

4 A

− 16 − 7

2 2 2 11 11 7

SEQUENCE ALIGNMENT 75

The optimal alignment score for these two sequences is given by the maximum

entries H (4, 5) = H (4, 6) = 11. This alignment leads to the respective optimal

global – local alignments:

AUUA

AUAA

and

AUUA

AAUA

Multiple Sequence Alignment

Multiple sequence alignment is an extension of pairwise alignment used to

incorporate more than two sequences at a time. Multiple sequence alignment

invalues aligning a number of sequences simultaneously to determine common

features among a collection of sequences. Multiple alignments are often used

to identify conserved sequence regions across a group of sequences hypo-

thesized to be related evolutionarily. Such conserved sequence motifs can be

used in conjunction with structural and mechanistic information to locate the

catalytic active sites of enzymes. To identify the common features, one needs

to determine an optimal alignment for the entire collection of sequences.

Multiple sequence alignments are computationally diffi cult to produce, and

most formulations of the problem lead to NP - complete combinatorial optimi-

zation problems (Deken, 1983 ). Nevertheless, the utility of these alignments

in bioinformatics has led to the development of a variety of methods suitable

for aligning three or more sequences.

Let Ω = ( a

1

a

2

…

a

k

) be a family of sequences over the alphabet Σ ,

aa a

n111 1

1

=

aa a

kk kn

k

=

1

and

Σ*(

** *

)= aa a

12

k

be a corresponding family of sequences of equal length

l over the extended alphabet Σ * = Σ ∪ { · },

aa a

l111 1

** *

=

aa a

kk kl

** *

=

1

by inserting blanks, where max{ n

1

, n

2

, … , n

k

} ≤ l ≤ n

1

+ n

2

+

…

+ n

k

.

For example, a multiple alignment of the three sequences AAGAA,

ATAATG, and CTGGG is

76 BIOLOGICAL SEQUENCES, SEQUENCE ALIGNMENT, AND STATISTICS

AA GAAA

AT AATG

CTGG G

−

−

−−

The optimal global alignment is to fi nd the maximum similarity between these

sequences Ω in terms of a scoring function s ( Ω * ), that is,

S is a multiple alignment ofΩΩΩ Ω

()

=

()

{}

max * *s

where

ssaa

iki

i

l

Ω*(

*

,,

*

)

()

=

=

∑

1

1

…

is the sum of scores of the columns. Here it is assumed that the columns of the

alignment are statistically independent. We are now in a position to state the

optimal multiple sequence alignment result.

Theorem 3.5 (Optimal Global Multiple Sequence Alignment) Let Ω =

( a

1

a

2

…

a

k

) be a family of sequences over the alphabet Σ ,

aa a

n111 1

1

=

aa a

kk kn

k

=

1

and B = ( b

1

, … , b

k

) be a binary vector over {0, 1} and defi ne b * x = x if b = 1

and b * x = − x if b = 0. For all index vectors ( i

1

, … , i

k

), defi ne

Si i Si b i b sba ba

kkkikki

k

11111

1

, , max{ , , (

*

,,

*

)}………

()

=−−

()

+

where the maximum is taken over all nonzero binary vectors B . Also, we set

S 000,,…

()

=

Then

Si i Sa a a a

kikki

k

11111

1

,, ,, ,,,…………

()

=

()

In particular,

SSnn

k

Ω

()

=

()

1

,,…

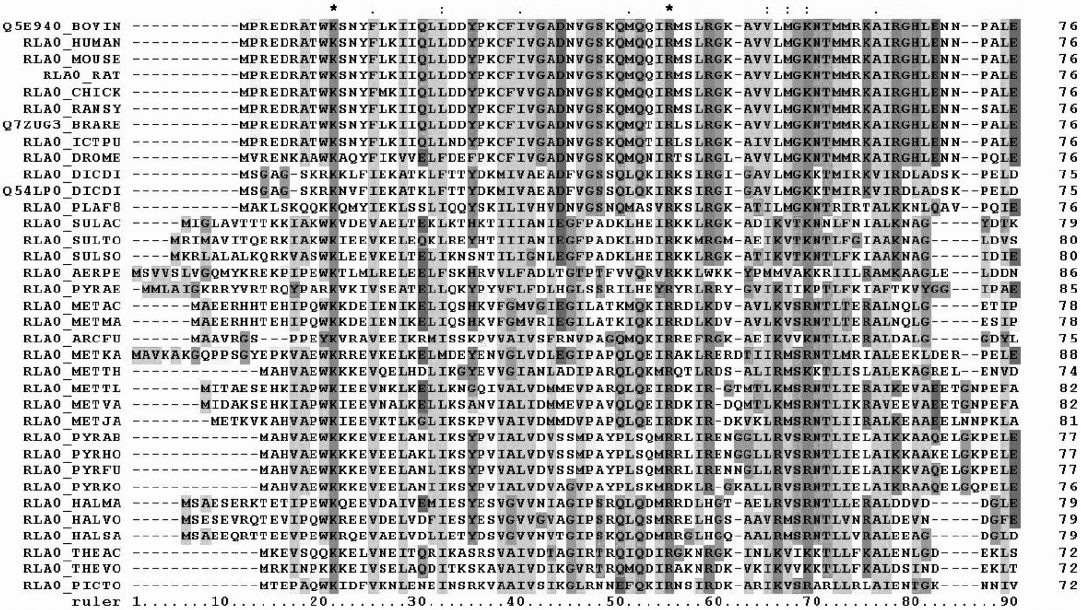

Example 3.4 Figure 3.1 is a representation of a protein multiple sequence

alignment produced using ClustalW (Chenna et al., 2003 ). The sequences are

FIGURE 3.1 First 90 positions of the alignment. The shadings represent the amino acid conservation according to the properties and

distribution of amino acid frequencies in each column. Note the two completely conserved residues arginine (R) and lysine (K), marked

with asterisks at the top of the alignment.

77

78 BIOLOGICAL SEQUENCES, SEQUENCE ALIGNMENT, AND STATISTICS

instances of the acidic ribosomal protein P0 homolog (L10E) encoded by the

Rplp0 gene from multiple organisms. The protein sequences were obtained

through SwissProt searching using the gene name. This was generated by

Miguel Andrade, February 2006 (UTC).

Profi le and Sequence Alignment

Profi le analysis has long been a useful tool in fi nding and aligning distantly

related sequences and in identifying known sequence domains in new

sequences. Basically, a profi le is a description of the consensus of a multiple

sequence alignment. It represents the common characteristics of a family

of similar sequences where any single sequence is just one realization of a

family ’ s characteristics. It uses a position - specifi c scoring system to capture

information about the degree of conservation at various positions in the

multiple alignment. The profi le method has several advantages over most

sequence comparison methods. Since the profi le represents the alignment of

a number of known sequences, it contains information that defi nes where

the family of sequences is conserved and where it is variable. Comparison

of a new sequence to a profi le search can emphasize similarity to conserved

regions while tolerating diversity in variable regions. This makes it a much

more sensitive and specifi c method for database searching than pairwise

methods, such as those used by BLAST or FastA, that use position -

independent scoring.

Example 3.5 Consider an optimal profi le - sequence alignment that aligns a

sequence against a profi le. Let Ω = ( a

1

a

2

…

a

k

) be a family of sequences over

the alphabet Σ and

Ω*(

** *

)= aa a

12

k

be a corresponding family of sequences

of equal length l over the extended alphabet Σ * = Σ ∪ { · }:

aa a

l111 1

** *

=

aa a

kk kl

** *

=

1

The profi le of the alignment Ω * is a sequence of l probability distributions

P

j

= ( p

j

,

x

) on the alphabet Σ * such that p

j

,

x

is the relative frequency of the

character x to occur in the j th column of the alignment. We denote the profi le

of the alignment Ω * by

PPP

L

**(

**

)Ω

()

=

1

. An alignment of the profi le

P ( Ω * ) and the sequence

a*

**

= aa

L1

is a pair of sequences

PP

L1

**

aa

L1

**

SEQUENCE ALIGNMENT 79

A blank in the profi le P * ( Ω * ) is the probability distribution denoted by –

p

:

1

0

0

which assigns probability 1 to the blank and probability 0 to all other charac-

ters. The optimal profi le - sequence alignment is to fi nd the maximum similarity

between the profi le P and the sequence a , that is,

SsP a P* a* P* a* P a, max , , ,

()

=

()() ()

{}

is an alignment of

where s ( P * , a * ) is a score function that may be defi ned as

ssaxp

ix

xi

l

P* a*,(

*

,)

*

()

=

∈=

∑∑

Ω1

with an individual similarity score s ( a , x ) on the alphabet Σ * and a score

between the probability distribution p = ( p

x

) on the alphabet Ω * and the char-

acter x in Σ * .

Theorem 3.6 (Optimal Profi le - Sequence Alignment) Let P = p

1

p

2

…

p

n

be

the profi le of a multiple sequence alignment and a = a

1

a

2

…

a

n

be a sequence

over the alphabet Σ * . Defi ne

Sij S aa a i m j n

ij

,,,,

()

=

()

≤≤ ≤≤pp p

12 12

11

and set

SSispSjsa

k

k

i

k

k

j

00 0 0 0

11

,, , ,, , ,

()

=

()

=−

() ()

=−

()

==

∑∑

p

Then

Sij Sijs Sij saSij s

iij

, max , , , , , , ,

()

=−

()

+−

()

−−

()

+

()

−

()

+−111 1pp

pp

, a

j

()

{}

In particular,

SSmnPa,,

()

=

()

Example 3.6 Consider the sequences AGCA, AGAGA, ACCG, and CGGC

over the DNA alphabet. The multiple alignment

80 BIOLOGICAL SEQUENCES, SEQUENCE ALIGNMENT, AND STATISTICS

AG CA

AGAGA

AC CG

CG GC

−

−

−

has the following profi le P :

0 0 3/4 0 0

3/4 0 1/4 0 1/2

1/4 1/4 0 1/2 1/4

0 3/4 0 1/2 1/4

0 0 0 0 0

where the columns are labeled in turn by – , A, C, G, and T. The profi le P can

be viewed as a column stochastic matrix.

An alignment between this profi le and the sequence AACCT is

ppppp

12345

−

p

AA CC T−

A blank in the profi le must be inserted into all sequences of the corresponding

multiple alignment, so the resulting multiple alignment is

AG CA

AGAGA

AC CG

CG GC

AA CC T

−−

−

−−

−−

−

Next we present an optimal profi le - to - profi le alignment in terms of the

distance scoring function.

Theorem 3.7 (Optimal Profi le - to - Profi le Alignment) Let P = p

1

p

2

…

p

m

be

the profi le of a multiple sequence alignment and Q = q

1

q

2

…

q

n

be the second

profi le of a multiple sequence alignment over the alphabet Σ * . Then defi ne

Dddpq

ii

i

l

PQ PQ PQ,min*,* (

*

,

*

)*,*

()

=

() ()

=

∑

= is an alignment

1

oof PQ,

()

⎧

⎨

⎩

⎫

⎬

⎭

as the minimum distance between the profi les P and Q . Let

SEQUENCE ANALYSIS AND FURTHER DISCUSSION 81

Di j D i m j n

ij

,,,,

()

=

()

≤≤ ≤≤pp p qq q

12 12

11

and set

DDidp Djdq

k

k

i

k

k

i

00 0 0 0

11

,,, ,, , ,

()

=

()

=−

()

()

=−

()

==

∑∑

pp

Then

Dij Dijd Dij

sSij

i

ij

,min , ,, ,

,,,

p

()

=−

()

+−

()

−−

()

+

{

()

−

()

111

1

p

pq ++−

()

}

d

jp

, q

In particular,

DDmnPQ,,

()

=

()

3.4 SEQUENCE ANALYSIS AND FURTHER DISCUSSION

Now we know how to fi nd an optimal alignment. A major concern when inter-

preting alignment results is whether similarity between sequences is biologi-

cally signifi cant. Good alignments can occur by chance alone. Many chance

mechanisms are involved in the creation of these data. How do we know if it

is a biologically meaningful alignment, especially when the similarity is only

marginal? Here we present two approaches. One is the classical approach

based on the traditional statistical approach of calculating the chance of a

match score greater than the value observed. The other is the Bayesian

approach, based on a comparison of models.

Under even the simplest random models and scoring systems, very little is

known about the random distribution of optimal global alignment scores

(Deken, 1983 ). Monte Carlo experiments can provide rough distributional

results for some specifi c scoring systems and sequence compositions (Reich

et al., 1984 ), but these cannot be generalized easily.

Therefore, one of the few methods available for assessing the statistical

signifi cance of a particular global alignment is to generate many random

sequence pairs of the appropriate length and composition, and calculate the

optimal alignment score for each (Altschul and Erickson, 1985 ; Fitch, 1983 ).

Although it is then possible to express the score of interest in terms of standard

deviations from the mean, it is a mistake to assume that the relevant distribu-

tion is normal and convert this Z - value into a P - value; the tail behavior of

global alignment scores is unknown. The most that one can say reliably is that

if 100 random alignments have scores inferior to the alignment of interest, the

P - value in question is probably less than 0.01. One further pitfall to avoid is

exaggerating the signifi cance of a result found among multiple tests. When

82 BIOLOGICAL SEQUENCES, SEQUENCE ALIGNMENT, AND STATISTICS

many alignments have been generated (e.g., in a database search), the signifi -

cance of the best must be discounted accordingly. An alignment with a P - value

of 0.0001 in the context of a single trial may be assigned a P - value of 0.1 only

if it was selected as the best among 1000 independent trials.

However, unlike those of global alignments, statistics for the scores of local

alignments are well understood. This is particularly true for local alignments

lacking gaps, which we consider fi rst. Such alignments were precisely those

sought by the original BLAST database search programs (Altschul et al., 1990 )

from each of the two sequences being compared. A modifi cation of the Smith –

Waterman (1981) or Sellers (1984) algorithms will fi nd all segment pairs whose

scores cannot be improved by extension or trimming. These are called high -

scoring segment pairs (HSPs). To analyze how high a score is likely to rise by

chance, a model of random sequences is needed. For proteins, the simplest

model chooses the amino acid residues in a sequence independently, with

specifi c background probabilities for the various residues. Additionally, the

score expected for aligning a random pair of amino acid is required to be

negative. Were this not the case, long alignments would tend to have high

scores independent of whether the segments aligned were related, and the

statistical theory would break down. Just as the sum of a large number of

independent identically distributed (i.i.d.) random variables tends to a normal

distribution, the maximum of a large number of i.i.d. random variables tends

to an extreme value distribution (Gumbel, 1958 ). (We elide the many technical

points required to make this statement rigorous.) In studying optimal local

sequence alignments, we are essentially dealing with the latter case (Dembo

et al., 1994 ; Karlin and Altschul, 1990 ). In the limit of suffi ciently large sequence

lengths m and n , the statistics of HSP scores are characterized by two para-

meters, K and λ (lambda). Most simply, the expected number of HSPs with a

score of at least S is given by the formula E = K

mn

e

- λ

S

. We call this the E - value

for the score S .

This formula makes eminently intuitive sense. Doubling the length of either

sequence should double the number of HSPs attaining a given score. Also, for

an HSP to attain the score 2 x , it must attain the score x twice in a row, so one

expects E to decrease exponentially with the score. The parameters K and λ

can be thought of simply as natural scales for the search space size and the

scoring system, respectively.

The other approach is based on a comparison of models.

1 . Hidden Markov model (HMM). This is a statistical model in which the

system being modeled is assumed to be a Markov process with unknown

parameters, and the challenge is to determine the hidden parameters from the

observable parameters. The extracted model parameters can then be used to

perform further analysis: for example, for pattern recognition applications. In

a regular Markov model, the state is directly visible to the observer, and there-

fore the state transition probabilities are the only parameters. In a hidden

Markov model, the state is not directly visible, but variables infl uenced by the