Manning Ch. D., Raghavan P., Sch?tze H. Introduction to Information Retrieval - Введение в информационный поиск

Подождите немного. Документ загружается.

Online edition (c)2009 Cambridge UP

286 13 Text classification and Naive Bayes

13.7 Ref erences and further reading

General introductions to statistical classification and machine learning can be

found in (Hastie et al. 2001), (Mitchell 1997), and (Duda et al. 2000), including

many important methods (e.g., decision trees and boosting) that we do not

cover. A comprehensive review of text classification methods and results is

(Sebastiani 2002). Manning and Schütze (1999, Chapter 16) give an accessible

introduction to text classification with coverage of decision trees, perceptrons

and maximum entropy models. More information on the superlinear time

complexity of learning methods that are more accurate than Naive Bayes can

be found in (Perkins et al. 2003) and (Joachims 2006a).

Maron and Kuhns (1960) described one of the first NB text classifiers. Lewis

(1998) focuses on the history of NB classification. Bernoulli and multinomial

models and their accuracy for different collections are discussed by McCal-

lum and Nigam (1998). Eyheramendy et al. (2003) present additional NB

models. Domingos and Pazzani (1997), Friedman (1997), and Hand and Yu

(2001) analyze why NB performs well although its probability estimates are

poor. The first paper also discusses NB’s optimality when the independence

assumptions are true of the data. Pavlov et al. (2004) propose a modified

document representation that partially addresses the inappropriateness of

the independence assumptions. Bennett (2000) attributes the tendency of NB

probability estimates to be close to either 0 or 1 to the effect of document

length. Ng and Jordan (2001) show that NB is sometimes (although rarely)

superior to discriminative methods because it more quickly reaches its opti-

mal error rate. The basic NB model presented in this chapter can be tuned for

better effectiveness (Rennie et al. 2003;Kołcz and Yih 2007). The problem of

concept drift and other reasons why state-of-the-art classifiers do not always

excel in practice are discussed by Forman (2006) and Hand (2006).

Early uses of mutual information and χ

2

for feature selection in text clas-

sification are Lewis and Ringuette (1994) and Schütze et al. (1995), respec-

tively. Yang and Pedersen (1997) review feature selection methods and their

impact on classification effectiveness. They find that pointwise mutual infor-POINTWISE MUTUAL

INFORMATION

mation is not competitive with other methods. Yang and Pedersen refer to

expected mutual information (Equation (

13.16)) as information gain (see Ex-

ercise 13.13, page 285). (Snedecor and Cochran 1989) is a good reference for

the χ

2

test in statistics, including the Yates’ correction for continuity for 2 ×2

tables. Dunning (1993) discusses problems of the χ

2

test when counts are

small. Nongreedy feature selection techniques are described by Hastie et al.

(2001). Cohen (1995) discusses the pitfalls of using multiple significance tests

and methods to avoid them. Forman (2004) evaluates different methods for

feature selection for multiple classifiers.

David D. Lewis defines the ModApte split at www.daviddlewis.com/resources/testcollections/reuters21578/readme

based on Apté et al. (1994). Lewis (1995) describes utility measures for theUTILITY MEASURE

Online edition (c)2009 Cambridge UP

13.7 References and further reading 287

evaluation of text classification systems. Yang and Liu (1999) employ signif-

icance tests in the evaluation of text classification methods.

Lewis et al. (2004) find that SVMs (Chapter

15) perform better on Reuters-

RCV1 than kNN and Rocchio (Chapter

14).

Online edition (c)2009 Cambridge UP

Online edition (c)2009 Cambridge UP

DRAFT! © April 1, 2009 Cambridge University Press. Feedback welcome. 289

14 Vector space classi fication

The document representation in Naive Bayes is a sequence of terms or a bi-

nary vector he

1

, . . . , e

|V|

i ∈ {0, 1}

|

V|. In this chapter we adopt a different

representation for text classification, the vector space model, developed in

Chapter

6. It represents each document as a vector with one real-valued com-

ponent, usually a tf-idf weight, for each term. Thus, the document space X,

the domain of the classification function γ, is R

|V|

. This chapter introduces a

number of classification methods that operate on real-valued vectors.

The basic hypothesis in using the vector space model for classification is

the contiguity hypothesis.CONTIGUITY

HYPOTHESIS

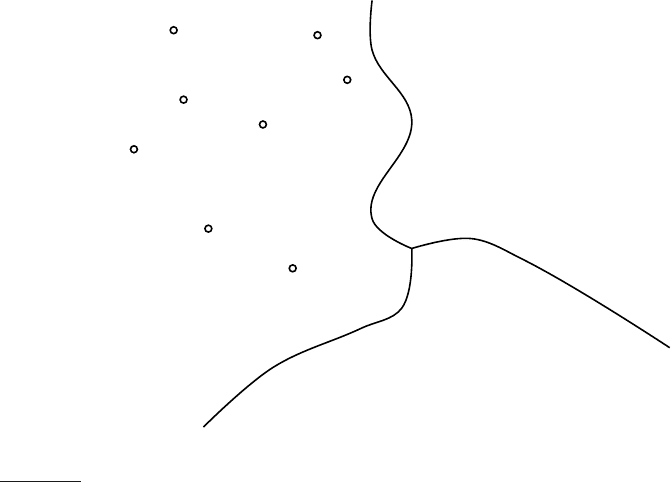

Contiguity hypothesis. Documents in the same class form a contigu-

ous region and regions of different classes do not overlap.

There are many classification tasks, in particular the type of text classification

that we encountered in Chapter

13, where classes can be distinguished by

word patterns. For example, documents in the class China tend to have high

values on dimensions like Chinese, Beijing, and Mao whereas documents in the

class UK tend to have high values for London, British and Queen. Documents

of the two classes therefore form distinct contiguous regions as shown in

Figure

14.1 and we can draw boundaries that separate them and classify new

documents. How exactly this is done is the topic of this chapter.

Whether or not a set of documents is mapped into a contiguous region de-

pends on the particular choices we make for the document representation:

type of weighting, stop list etc. To see that the document representation is

crucial, consider the two classes written by a group vs. written by a single per-

son. Frequent occurrence of the first person pronoun I is evidence for the

single-person class. But that information is likely deleted from the document

representation if we use a stop list. If the document representation chosen

is unfavorable, the contiguity hypothesis will not hold and successful vector

space classification is not possible.

The same considerations that led us to prefer weighted representations, in

particular length-normalized tf-idf representations, in Chapters 6 and 7 also

Online edition (c)2009 Cambridge UP

290 14 Vector space classification

x

x

x

x

⋄

⋄

⋄

⋄

⋄

⋄

China

Kenya

UK

⋆

◮

Figure 14.1 Vector space classification into three classes.

apply here. For example, a term with 5 occurrences in a document should get

a higher weight than a term with one occurrence, but a weight 5 times larger

would give too much emphasis to the term. Unweighted and unnormalized

counts should not be used in vector space classification.

We introduce two vector space classification methods in this chapter, Roc-

chio and kNN. Rocchio classification (Section

14.2) divides the vector space

into regions centered on centroids or prototypes, one for each class, computedPROTOTYPE

as the center of mass of all documents in the class. Rocchio classification is

simple and efficient, but inaccurate if classes are not approximately spheres

with similar radii.

kNN or k nearest neighbor classification (Section

14.3) assigns the majority

class of the k nearest neighbors to a test document. kNN requires no explicit

training and can use the unprocessed training set directly in classification.

It is less efficient than other classification methods in classifying documents.

If the training set is large, then kNN can handle non-spherical and other

complex classes better than Rocchio.

A large number of text classifiers can be viewed as linear classifiers – clas-

sifiers that classify based on a simple linear combination of the features (Sec-

tion

14.4). Such classifiers partition the space of features into regions sepa-

rated by linear decision hyperplanes, in a manner to be detailed below. Because

of the bias-variance tradeoff (Section

14.6) more complex nonlinear models

Online edition (c)2009 Cambridge UP

14.1 Document representations and measures of rel atedness in vector spaces 291

d

true

d

projected

x

1

x

2

x

3

x

4

x

5

x

′

1

x

′

2

x

′

3

x

′

4

x

′

5

x

′

1

x

′

2

x

′

3

x

′

4

x

′

5

◮

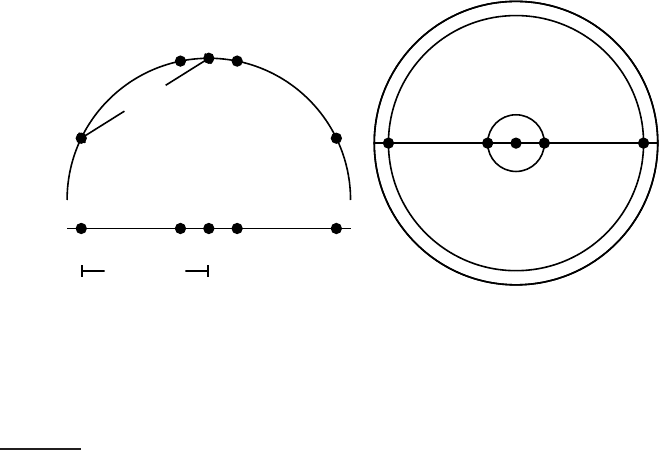

Figure 14.2 Projections of small areas of the unit sphere preserve distances. Left:

A projection of the 2D semicircle to 1D. For the points x

1

, x

2

, x

3

, x

4

, x

5

at x coordinates

−0.9, −0.2, 0, 0.2, 0.9 the distance |x

2

x

3

| ≈ 0.201 only differs by 0.5% from |x

′

2

x

′

3

| =

0.2; but |x

1

x

3

|/|x

′

1

x

′

3

| = d

true

/d

projected

≈ 1.06/0.9 ≈ 1.18 is an example of a large

distortion (18%) when projecting a large area. Right: The corresponding projection of

the 3D hemisphere to 2D.

are not systematically better than linear models. Nonlinear models have

more parameters to fit on a limited amount of training data and are more

likely to make mistakes for small and noisy data sets.

When applying two-class classifiers to problems with more than two classes,

there are one-of tasks – a document must be assigned to exactly one of several

mutually exclusive classes – and any-of tasks – a document can be assigned to

any number of classes as we will explain in Section

14.5. Two-class classifiers

solve any-of problems and can be combined to solve one-of problems.

14.1 Document representations and measures of relatedness in vec-

tor spaces

As in Chapter

6, we represent documents as vectors in R

|V|

in this chapter.

To illustrate properties of document vectors in vector classification, we will

render these vectors as points in a plane as in the example in Figure

14.1.

In reality, document vectors are length-normalized unit vectors that point

to the surface of a hypersphere. We can view the 2D planes in our figures

as projections onto a plane of the surface of a (hyper-)sphere as shown in

Figure

14.2. Distances on the surface of the sphere and on the projection

plane are approximately the same as long as we restrict ourselves to small

areas of the surface and choose an appropriate projection (Exercise

14.1).

Online edition (c)2009 Cambridge UP

292 14 Vector space classification

Decisions of many vector space classifiers are based on a notion of dis-

tance, e.g., when computing the nearest neighbors in kNN classification.

We will use Euclidean distance in this chapter as the underlying distance

measure. We observed earlier (Exercise

6.18, page 131) that there is a direct

correspondence between cosine similarity and Euclidean distance for length-

normalized vectors. In vector space classification, it rarely matters whether

the relatedness of two documents is expressed in terms of similarity or dis-

tance.

However, in addition to documents, centroids or averages of vectors also

play an important role in vector space classification. Centroids are not length-

normalized. For unnormalized vectors, dot product, cosine similarity and

Euclidean distance all have different behavior in general (Exercise

14.6). We

will be mostly concerned with small local regions when computing the sim-

ilarity between a document and a centroid, and the smaller the region the

more similar the behavior of the three measures is.

?

Exercise 14.1

For small areas, distances on the surface of the hypersphere are approximated well

by distances on its projection (Figure

14.2) because α ≈ sin α for small angles. For

what size angle is the distortion α/ sin(α) (i) 1.01, (ii) 1.05 and (iii) 1.1?

14.2 Rocchio classification

Figure 14.1 shows three classes, China, UK and Kenya, in a two-dimensional

(2D) space. Documents are shown as circles, diamonds and X’s. The bound-

aries in the figure, which we call decision boundaries, are chosen to separateDECISION BOUNDARY

the three classes, but are otherwise arbitrary. To classify a new document,

depicted as a star in the figure, we determine the region it occurs in and as-

sign it the class of that region – China in this case. Our task in vector space

classification is to devise algorithms that compute good boundaries where

“good” means high classification accuracy on data unseen during training.

Perhaps the best-known way of computing good class boundaries is Roc-ROCCHIO

CLASSIFICATION

chio classification, which uses centroids to define the boundaries. The centroid

CENTROID

of a class c is computed as the vector average or center of mass of its mem-

bers:

~µ(c) =

1

|D

c

|

∑

d∈D

c

~v(d)

(14.1)

where D

c

is the set of documents in D whose class is c: D

c

= {d : hd, ci ∈ D}.

We denote the normalized vector of d by ~v(d) (Equation (6.11), page 122).

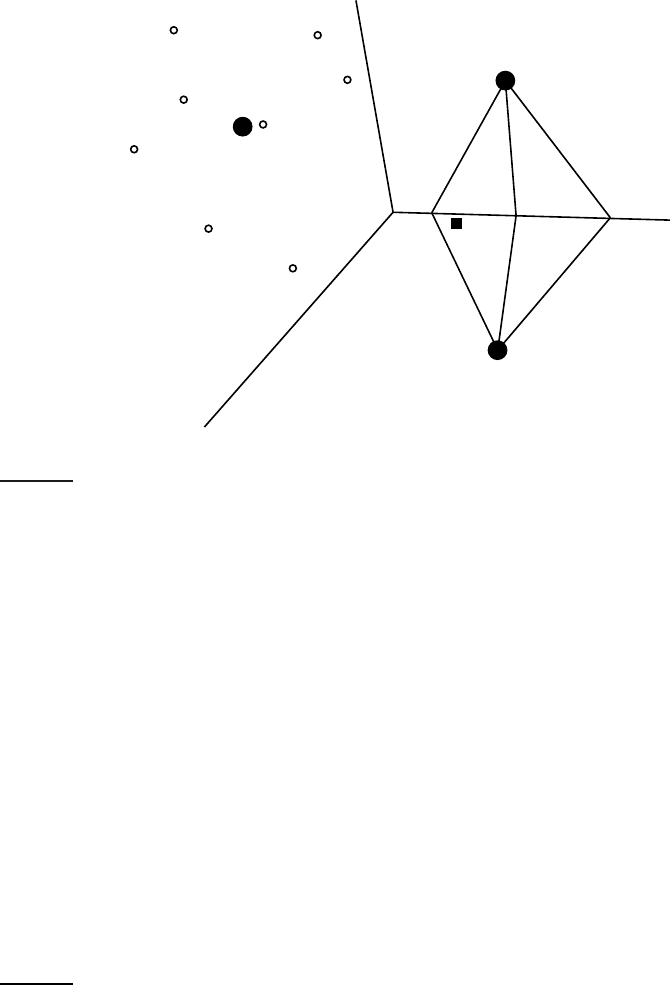

Three example centroids are shown as solid circles in Figure 14.3.

The boundary between two classes in Rocchio classification is the set of

points with equal distance from the two centroids. For example, |a

1

| = |a

2

|,

Online edition (c)2009 Cambridge UP

14.2 Rocchio classification 293

x

x

x

x

⋄

⋄

⋄

⋄

⋄

⋄

China

Kenya

UK

⋆

a

1

a

2

b

1

b

2

c

1

c

2

◮

Figure 14.3 Rocchio classification.

|b

1

| = |b

2

|, and |c

1

| = |c

2

| in the figure. This set of points is always a line.

The generalization of a line in M-dimensional space is a hyperplane, which

we define as the set of points ~x that satisfy:

~w

T

~x = b

(14.2)

where ~w is the M-dimensional normal vector

1

of the hyperplane and b is aNORMAL VECTOR

constant. This definition of hyperplanes includes lines (any line in 2D can

be defined by w

1

x

1

+ w

2

x

2

= b) and 2-dimensional planes (any plane in 3D

can be defined by w

1

x

1

+ w

2

x

2

+ w

3

x

3

= b). A line divides a plane in two,

a plane divides 3-dimensional space in two, and hyperplanes divide higher-

dimensional spaces in two.

Thus, the boundaries of class regions in Rocchio classification are hyper-

planes. The classification rule in Rocchio is to classify a point in accordance

with the region it falls into. Equivalently, we determine the centroid ~µ(c) that

the point is closest to and then assign it to c. As an example, consider the star

in Figure 14.3. It is located in the China region of the space and Rocchio

therefore assigns it to China. We show the Rocchio algorithm in pseudocode

in Figure

14.4.

1. Recall from basic linear algebra that ~v · ~w = ~v

T

~w, i.e., the dot product of ~v and ~w equals the

product by matrix multiplication of the transpose of ~v and ~w.

Online edition (c)2009 Cambridge UP

294 14 Vector space classification

term weights

vector Chinese Japan Tokyo Macao Beijing Shanghai

~

d

1

0 0 0 0 1.0 0

~

d

2

0 0 0 0 0 1.0

~

d

3

0 0 0 1.0 0 0

~

d

4

0 0.71 0.71 0 0 0

~

d

5

0 0.71 0.71 0 0 0

~µ

c

0 0 0 0.33 0.33 0.33

~µ

c

0 0.71 0.71 0 0 0

◮

Table 14.1 Vectors and class centroids for the data in Table 13.1.

✎

Example 14.1: Table

14.1 shows the tf-idf vector representations of the five docu-

ments in Table

13.1 (page 261), using the formula (1 + log

10

tf

t,d

) log

10

(4/df

t

) if tf

t,d

>

0 (Equation (

6.14), page 127). The two class centroids are µ

c

= 1/3 · (

~

d

1

+

~

d

2

+

~

d

3

)

and µ

c

= 1/1 · (

~

d

4

). The distances of the test document from the centroids are

|µ

c

−

~

d

5

| ≈ 1.15 and |µ

c

−

~

d

5

| = 0.0. Thus, Rocchio assigns d

5

to

c.

The separating hyperplane in this case has the following parameters:

~w ≈ (0 − 0.71 − 0.71 1/3 1/3 1/3)

T

b = −1/3

See Exercise

14.15 for how to compute ~w and b. We can easily verify that this hy-

perplane separates the documents as desired: ~w

T

~

d

1

≈ 0 · 0 + −0.71 ·0 + −0.71 · 0 +

1/3 ·0 + 1/3 · 1.0 + 1/3 ·0 = 1/3 > b (and, similarly, ~w

T

~

d

i

> b for i = 2 and i = 3)

and ~w

T

~

d

4

= −1 < b. Thus, documents in c are above the hyperplane (~w

T

~

d > b) and

documents in

c are below the hyperplane (~w

T

~

d < b).

The assignment criterion in Figure

14.4 is Euclidean distance (APPLYROC-

CHIO, line 1). An alternative is cosine similarity:

Assign d to class c = arg max

c

′

cos(~µ(c

′

),~v(d))

As discussed in Section 14.1, the two assignment criteria will sometimes

make different classification decisions. We present the Euclidean distance

variant of Rocchio classification here because it emphasizes Rocchio’s close

correspondence to K-means clustering (Section

16.4, page 360).

Rocchio classification is a form of Rocchio relevance feedback (Section 9.1.1,

page

178). The average of the relevant documents, corresponding to the most

important component of the Rocchio vector in relevance feedback (Equa-

tion (9.3), page 182), is the centroid of the “class” of relevant documents.

We omit the query component of the Rocchio formula in Rocchio classifica-

tion since there is no query in text classification. Rocchio classification can be

Online edition (c)2009 Cambridge UP

14.2 Rocchio classification 295

TRAINROCCHIO(C, D)

1 for each c

j

∈ C

2 do D

j

← {d : hd, c

j

i ∈ D}

3 ~µ

j

←

1

|D

j

|

∑

d∈D

j

~v(d)

4 return {~µ

1

, . . . ,~µ

J

}

APPLYROCCHIO({~µ

1

, . . . ,~µ

J

}, d)

1 return arg min

j

|~µ

j

−~v(d)|

◮

Figure 14.4 Rocchio classification: Training and testing.

a

a

a

a

a

a

a

a

a

a

a

a

a

a

a a

aa

a

a

a

a

a

a

a

a

a

a

a

a

a

a

a

aa

a

a

a

a

a

b

b

b

b

b

b

b

b

b

b

b

b

b

b

b

b

b

b

b

X

X

A

B

o

◮

Figure 14.5 The multimodal class “a” consists of two different clusters (small

upper circles centered on X’s). Rocchio classification will misclassify “o” as “a”

because it is closer to the centroid A of the “a” class than to the centroid B of the “b”

class.

applied to J > 2 classes whereas Rocchio relevance feedback is designed to

distinguish only two classes, relevant and nonrelevant.

In addition to respecting contiguity, the classes in Rocchio classification

must be approximate spheres with similar radii. In Figure

14.3, the solid

square just below the boundary between UK and Kenya is a better fit for the

class UK since UK is more scattered than Kenya. But Rocchio assigns it to

Kenya because it ignores details of the distribution of points in a class and

only uses distance from the centroid for classification.

The assumption of sphericity also does not hold in Figure

14.5. We can-