Cossey L. Introduction to Mathematical Physics


Let X be a random variable. A stochastic process (associated with X) is a function of X and t. $X_t$ is called a stochastic function of X, or a stochastic process. In general, the probability law of $X_t$ depends on the history of the values of $X_t$ before $t_i$. One defines the conditional probability as the probability for $X_t$ to take a value between x and x + dx at time $t_i$, knowing the values of $X_t$ at times prior to $t_i$ (the "history" of $X_t$). A Markov process is a stochastic process with the property that, for any set of successive times $t_1 < t_2 < \dots < t_n$, one has:

$$P_{1|n-1}(x_n,t_n \mid x_1,t_1;\dots;x_{n-1},t_{n-1}) = P_{1|1}(x_n,t_n \mid x_{n-1},t_{n-1})$$

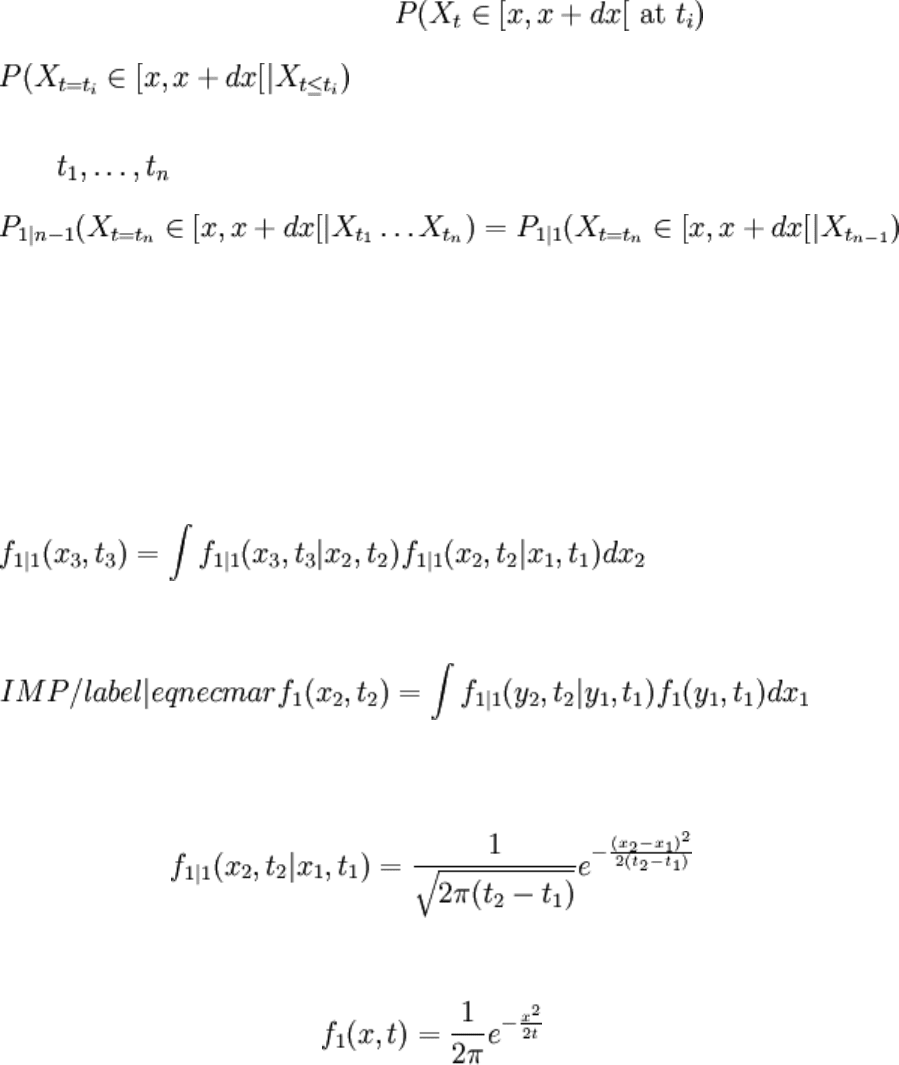

$P_{i|j}$ denotes the probability for i conditions to be satisfied, knowing j prior events. In other words, the probability distribution of $X_t$ at time $t_n$ depends only on the value of $X_t$ at the previous time $t_{n-1}$. A Markov process is thus defined by $P_1$ and the transition matrix $P_{1|1}$ (or, equivalently, by the density $f_1(x,t)$ and the transition density $f_{1|1}(x_2,t_2 \mid x_1,t_1)$). It can be shown that two functions $f_1$ and $f_{1|1}$ define a Markov process if and only if they satisfy:

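• the Chapman-Kolmogorov equation, for $t_1 < t_2 < t_3$:

$$f_{1|1}(x_3,t_3 \mid x_1,t_1) = \int f_{1|1}(x_3,t_3 \mid x_2,t_2)\, f_{1|1}(x_2,t_2 \mid x_1,t_1)\, dx_2$$

• the consistency relation, for $t_1 < t_2$:

$$f_1(x_2,t_2) = \int f_{1|1}(x_2,t_2 \mid x_1,t_1)\, f_1(x_1,t_1)\, dx_1$$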

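A Wiener process (or Brownian motion) is a Markov process for which:

$$f_1(x,0) = \delta(x), \qquad f_{1|1}(x_2,t_2 \mid x_1,t_1) = \frac{1}{\sqrt{2\pi(t_2-t_1)}}\exp\left(-\frac{(x_2-x_1)^2}{2(t_2-t_1)}\right)$$

Using equation eqnecmar, one gets:

$$f_1(x,t) = \frac{1}{\sqrt{2\pi t}}\exp\left(-\frac{x^2}{2t}\right)$$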
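The Chapman-Kolmogorov equation can be checked numerically for the Wiener transition density. The sketch below assumes the standard Gaussian kernel with variance $t_2 - t_1$ (unit diffusion). It approximates the integral over the intermediate position $x_2$ with a trapezoidal rule on a truncated interval. The function names are illustrative only.

```python
import math


def wiener_kernel(x2: float, t2: float, x1: float, t1: float) -> float:
    """Transition density f_{1|1}(x2, t2 | x1, t1) of a standard Wiener process (unit diffusion assumed)."""
    dt = t2 - t1
    return math.exp(-(x2 - x1) ** 2 / (2.0 * dt)) / math.sqrt(2.0 * math.pi * dt)


def chapman_kolmogorov_rhs(x3, t3, x1, t1, t2, lo=-20.0, hi=20.0, n=4000):
    """Integrate f(x3,t3 | x2,t2) * f(x2,t2 | x1,t1) over x2 with the trapezoidal rule on [lo, hi]."""
    h = (hi - lo) / n
    total = 0.0
    for k in range(n + 1):
        x2 = lo + k * h
        weight = 0.5 if k in (0, n) else 1.0  # trapezoidal end-point weights
        total += weight * wiener_kernel(x3, t3, x2, t2) * wiener_kernel(x2, t2, x1, t1)
    return total * h


direct = wiener_kernel(0.7, 2.0, -0.3, 0.5)
via_t2 = chapman_kolmogorov_rhs(0.7, 2.0, -0.3, 0.5, t2=1.2)
print(f"direct: {direct:.10f}  through t2: {via_t2:.10f}")
```

Any intermediate time $t_1 < t_2 < t_3$ gives the same value, which is the content of the equation.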

As stochastic processes were defined as functions of a random variable and time, a large class\footnote{This definition, however, excludes discontinuous cases such as Poisson processes.} of stochastic processes can be defined as functions of a Brownian motion (or Wiener process) $W_t$. This is our second definition of a stochastic process:

Definition:

Let $W_t$ be a Brownian motion. A stochastic process is a function of $W_t$ and t.
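A process defined as an explicit function of $W_t$ and t can be sampled by first simulating a Brownian path. The book's own stock model is not reproduced here. As an assumed illustration, the sketch uses geometric Brownian motion, $S_t = S_0\exp\left((\mu - \sigma^2/2)\,t + \sigma W_t\right)$. The parameter values and helper names are hypothetical.

```python
import math
import random


def gbm_value(t: float, w_t: float, s0: float = 100.0, mu: float = 0.05, sigma: float = 0.2) -> float:
    """Assumed stock model: geometric Brownian motion, an explicit function of t and W_t."""
    return s0 * math.exp((mu - 0.5 * sigma ** 2) * t + sigma * w_t)


def brownian_path(n_steps: int, t_final: float, rng: random.Random) -> list[float]:
    """Sample W at n_steps + 1 equally spaced times by summing independent N(0, dt) increments."""
    dt = t_final / n_steps
    w = [0.0]
    for _ in range(n_steps):
        w.append(w[-1] + rng.gauss(0.0, math.sqrt(dt)))
    return w


rng = random.Random(42)
n_steps = 250
path = brownian_path(n_steps, 1.0, rng)
prices = [gbm_value(k / n_steps, w) for k, w in enumerate(path)]

# S_T depends only on W_T ~ N(0, T), so its mean can be checked by sampling W_1 directly.
samples = [gbm_value(1.0, rng.gauss(0.0, 1.0)) for _ in range(20000)]
mc_mean = sum(samples) / len(samples)
print(f"S_1 on one path: {prices[-1]:.2f}, Monte Carlo mean: {mc_mean:.2f}, s0*exp(mu): {100 * math.exp(0.05):.2f}")
```

The Monte Carlo mean should be close to $S_0 e^{\mu T}$, the known expectation for this model.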

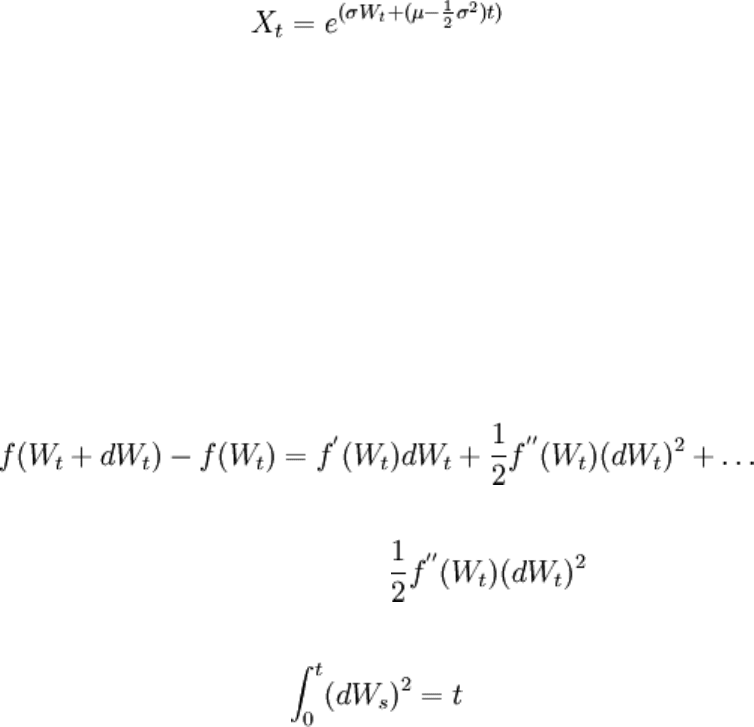

For instance, a model of the temporal evolution of stocks is

A stochastic differential equation

$$dX_t = a(t,X_t)\,dt + b(t,X_t)\,dW_t$$

gives an implicit definition of the stochastic process. The rules of differentiation with respect to the Brownian motion variable $W_t$ differ from the rules of differentiation with respect to the ordinary time variable. They are given by the Itô formula.
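For reference, here is the usual statement of the Itô formula, which the text does not write out. It applies to a function f(t, x), once continuously differentiable in t and twice in x, evaluated on a process that obeys the stochastic differential equation above:

```latex
df(t,X_t) = \left( \frac{\partial f}{\partial t} + a(t,X_t)\,\frac{\partial f}{\partial x}
          + \frac{1}{2}\, b(t,X_t)^2\, \frac{\partial^2 f}{\partial x^2} \right) dt
          + b(t,X_t)\,\frac{\partial f}{\partial x}\, dW_t
```

The term $\frac{1}{2}b^2\,\partial^2 f/\partial x^2$ is the one a Newtonian chain rule would miss. For $X_t = W_t$ ($a = 0$, $b = 1$) and f independent of t, the formula reduces to $df = f'(W_t)\,dW_t + \frac{1}{2}f''(W_t)\,dt$.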

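To understand the difference between the differentiation of a Newtonian function and that of a stochastic function, consider the Taylor expansion, up to second order, of a function $f(W_t)$:

$$df(W_t) = f(W_t + dW_t) - f(W_t) = f'(W_t)\,dW_t + \frac{1}{2}f''(W_t)\,(dW_t)^2 + \dots$$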

Usually (for Newtonian functions), the differential $df(W_t)$ is just $f'(W_t)\,dW_t$. But for a stochastic process $f(W_t)$, the second-order term is no longer negligible.

Indeed, as can be seen using the properties of Brownian motion, we have:

$$\mathbb{E}\left[(W_{t+dt} - W_t)^2\right] = dt$$

or

$$(dW_t)^2 = dt.$$
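The rule $(dW_t)^2 = dt$ can be observed on a simulated path. The sketch below uses a hypothetical helper written in standard-library Python and takes $f(W) = W^2$, for which $\frac{1}{2}f''(W)(dW)^2 = (dW)^2$. Over [0, T], the first-order sum $\sum f'(W_k)\,\Delta W_k$ misses $W_T^2$ by the sum of squared increments. That sum stays close to T instead of vanishing as the step shrinks.

```python
import math
import random


def quadratic_variation_demo(t_final: float = 1.0, n_steps: int = 100_000, seed: int = 1):
    """Simulate W on [0, t_final] and compare the first-order sum with the exact change of f(W) = W**2."""
    rng = random.Random(seed)
    dt = t_final / n_steps
    w = 0.0
    first_order = 0.0  # sum of f'(W_k) dW_k = 2 W_k dW_k (the Newtonian chain rule)
    quad_var = 0.0     # sum of (dW_k)**2, the discrete quadratic variation
    for _ in range(n_steps):
        dw = rng.gauss(0.0, math.sqrt(dt))
        first_order += 2.0 * w * dw
        quad_var += dw * dw
        w += dw
    return w, first_order, quad_var


w_T, first_order, quad_var = quadratic_variation_demo()
print(f"W_T^2      = {w_T ** 2:.4f}")
print(f"sum 2 W dW = {first_order:.4f}")
print(f"sum (dW)^2 = {quad_var:.4f}   (T = 1)")
```

Because $W_{k+1}^2 - W_k^2 = 2W_k\,\Delta W_k + (\Delta W_k)^2$ exactly, the first two printed numbers differ by the third. That difference is what the second-order term accounts for.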

Figure figbrown illustrates the difference between a stochastic process (a simple Brownian motion in the picture) and a differentiable function. Brownian motion has a self-similar structure under successive zooms.

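Let us simply mention here the most basic scheme for integrating stochastic processes on a computer. Consider the time integration problem:

$$dX_t = a(t,X_t)\,dt + b(t,X_t)\,dW_t$$

with initial value:

$$X_{t_0} = X_0$$

The most basic way to approximate the solution of the previous problem is to use the Euler (or Euler-Maruyama) scheme, which is given by the iteration:

$$X_{n+1} = X_n + a(t_n,X_n)\,\Delta t + b(t_n,X_n)\,\Delta W_n, \qquad t_{n+1} = t_n + \Delta t$$

where the increments $\Delta W_n = W_{t_{n+1}} - W_{t_n}$ are independent Gaussian variables with mean 0 and variance $\Delta t$.

More sophisticated methods can be found in .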
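A minimal sketch of the Euler-Maruyama iteration follows. The drift a and diffusion b are passed as Python callables. The Ornstein-Uhlenbeck test problem $dX = -\theta X\,dt + \sigma\,dW$, with known mean $X_0 e^{-\theta t}$, is an assumption chosen only because its exact mean is easy to compare against.

```python
import math
import random
from typing import Callable


def euler_maruyama(a: Callable[[float, float], float],
                   b: Callable[[float, float], float],
                   x0: float, t0: float, t_final: float,
                   n_steps: int, rng: random.Random) -> float:
    """Return X at t_final using X_{n+1} = X_n + a(t_n, X_n) dt + b(t_n, X_n) dW_n."""
    dt = (t_final - t0) / n_steps
    t, x = t0, x0
    for _ in range(n_steps):
        dw = rng.gauss(0.0, math.sqrt(dt))  # Brownian increment ~ N(0, dt)
        x = x + a(t, x) * dt + b(t, x) * dw
        t += dt
    return x


# Assumed test problem (Ornstein-Uhlenbeck): dX = -theta X dt + sigma dW, X_0 = 1.
theta, sigma = 1.0, 0.5
rng = random.Random(0)
finals = [euler_maruyama(lambda t, x: -theta * x, lambda t, x: sigma,
                         x0=1.0, t0=0.0, t_final=1.0, n_steps=100, rng=rng)
          for _ in range(5000)]
mean = sum(finals) / len(finals)
print(f"sample mean of X_1: {mean:.4f}, exact mean exp(-1): {math.exp(-1.0):.4f}")
```

With b = 0 the scheme reduces to the ordinary explicit Euler method for $dX/dt = a(t, X)$. This makes a convenient sanity check.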

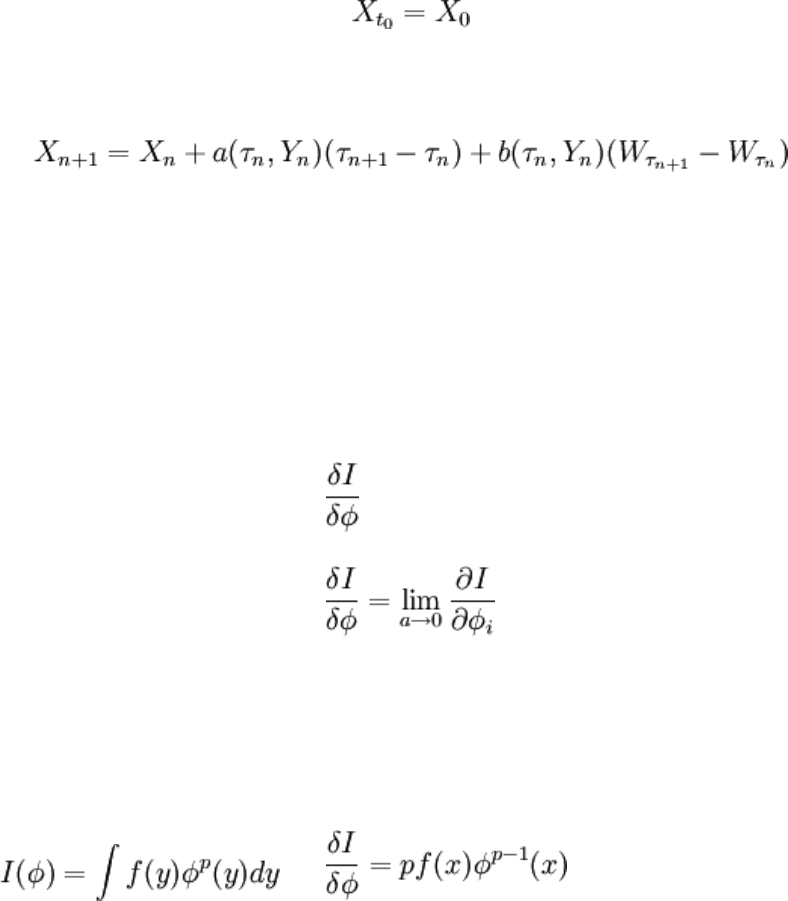

Functional derivative

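Let I(φ) be a functional. To calculate the differential dI(φ) of a functional I(φ), one expresses the difference I(φ + dφ) − I(φ) as a functional of dφ.

The functional derivative of I, noted $\frac{\delta I}{\delta \varphi(x)}$, is given by the limit:

$$\frac{\delta I}{\delta \varphi(x)} = \lim_{a \to 0} \frac{1}{a}\,\frac{\partial I(\dots,\varphi_i,\dots)}{\partial \varphi_i}, \qquad x = ia$$

where a is a real number and $\varphi_i = \varphi(ia)$.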

Here are some examples:

Example:

If

then

Example:

If then .
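The definition through $\varphi_i = \varphi(ia)$ can be tried numerically: discretize φ on a lattice of spacing a and compute $\frac{1}{a}\,\partial I/\partial\varphi_i$. In the sketch below, the functional $I(\varphi) = \int_0^1 \varphi(x)^2\,dx$ and the test function $\sin(\pi x)$ are assumed examples. The integral is approximated by $a\sum_i \varphi_i^2$ and the partial derivative by a central difference. The result should reproduce $\frac{\delta I}{\delta\varphi(x)} = 2\varphi(x)$.

```python
import math


def functional_I(phi: list[float], a: float) -> float:
    """Discretized I(phi) = integral of phi(x)**2 dx, approximated by a * sum(phi_i**2)."""
    return a * sum(p * p for p in phi)


def functional_derivative(I, phi: list[float], a: float, i: int, eps: float = 1e-6) -> float:
    """Approximate dI/dphi(x_i) as (1/a) * dI/dphi_i, with a central difference in phi_i."""
    plus = list(phi)
    plus[i] += eps
    minus = list(phi)
    minus[i] -= eps
    return (I(plus, a) - I(minus, a)) / (2.0 * eps) / a


a = 0.01                                    # lattice spacing, phi_i = phi(i * a)
grid = [k * a for k in range(101)]          # x in [0, 1]
phi = [math.sin(math.pi * x) for x in grid]
idx = 30                                    # x_idx = 0.3
print(functional_derivative(functional_I, phi, a, idx), 2 * phi[idx])
```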

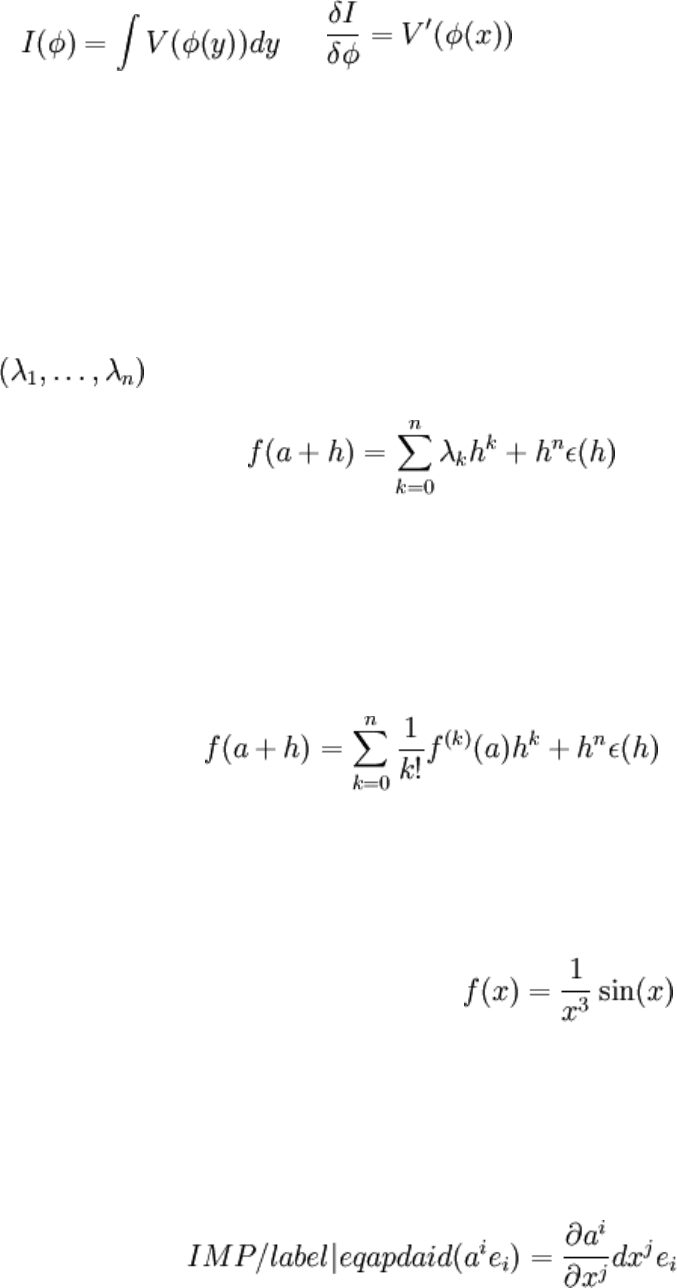

==Comparison of tensor values at different points==

Expansion of a function in series about x = a

Definition:

A function f admits an expansion in series at order n around x = a if there exist numbers $c_0, c_1, \dots, c_n$ such that:

$$f(a+h) = c_0 + c_1 h + \dots + c_n h^n + h^n \varepsilon(h)$$

where ε(h) tends to zero when h tends to zero.

Theorem:

If a function is n times differentiable at a, then it admits an expansion in series at order n around x = a, given by the Taylor-Young formula:

$$f(a+h) = \sum_{k=0}^{n} \frac{f^{(k)}(a)}{k!}\,h^k + h^n \varepsilon(h)$$

where ε(h) tends to zero when h tends to zero and where $f^{(k)}(a)$ is the k-th derivative of f at x = a.

Note that the converse of the theorem is false: $f(x) = x^3 \sin(1/x)$, extended by $f(0) = 0$, is a function that admits an expansion around zero at order 2 but is not twice differentiable.
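To see the role of ε(h), the sketch below computes $\varepsilon(h) = \left(f(a+h) - \sum_k c_k h^k\right)/h^n$ for two functions chosen for illustration. The first is exp at 0, where ε(h) behaves like h/6. The second is $g(x) = x^3\sin(1/x)$ with $g(0) = 0$, whose order-2 expansion has zero coefficients and $\varepsilon(h) = h\sin(1/h) \to 0$. For g, the difference quotient $g'(h)/h$ keeps oscillating, so $g''(0)$ does not exist.

```python
import math


def taylor_young_remainder(f, coeffs: list[float], a: float, h: float) -> float:
    """Return eps(h) = (f(a + h) - sum_k coeffs[k] * h**k) / h**n, with n = len(coeffs) - 1."""
    n = len(coeffs) - 1
    poly = sum(c * h ** k for k, c in enumerate(coeffs))
    return (f(a + h) - poly) / h ** n


# exp at 0: Taylor-Young coefficients f^(k)(0) / k! = 1 / k!
exp_coeffs = [1.0 / math.factorial(k) for k in range(3)]


def g(x: float) -> float:
    """Counterexample x^3 sin(1/x), extended by g(0) = 0 (illustrative choice)."""
    return x ** 3 * math.sin(1.0 / x) if x != 0.0 else 0.0


def g_prime(x: float) -> float:
    """Derivative of g; g'(0) = 0 because g(h)/h = h^2 sin(1/h) tends to 0."""
    return 3.0 * x ** 2 * math.sin(1.0 / x) - x * math.cos(1.0 / x) if x != 0.0 else 0.0


for h in (1e-2, 1e-3, 1e-4):
    eps_exp = taylor_young_remainder(math.exp, exp_coeffs, 0.0, h)   # behaves like h / 6
    eps_g = taylor_young_remainder(g, [0.0, 0.0, 0.0], 0.0, h)       # equals h sin(1/h)
    second_quotient = (g_prime(h) - g_prime(0.0)) / h                # 3h sin(1/h) - cos(1/h): no limit
    print(f"h={h:g}  eps_exp={eps_exp:.3e}  eps_g={eps_g:.3e}  g'(h)/h={second_quotient:+.3f}")
```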

===Non objective quantities===

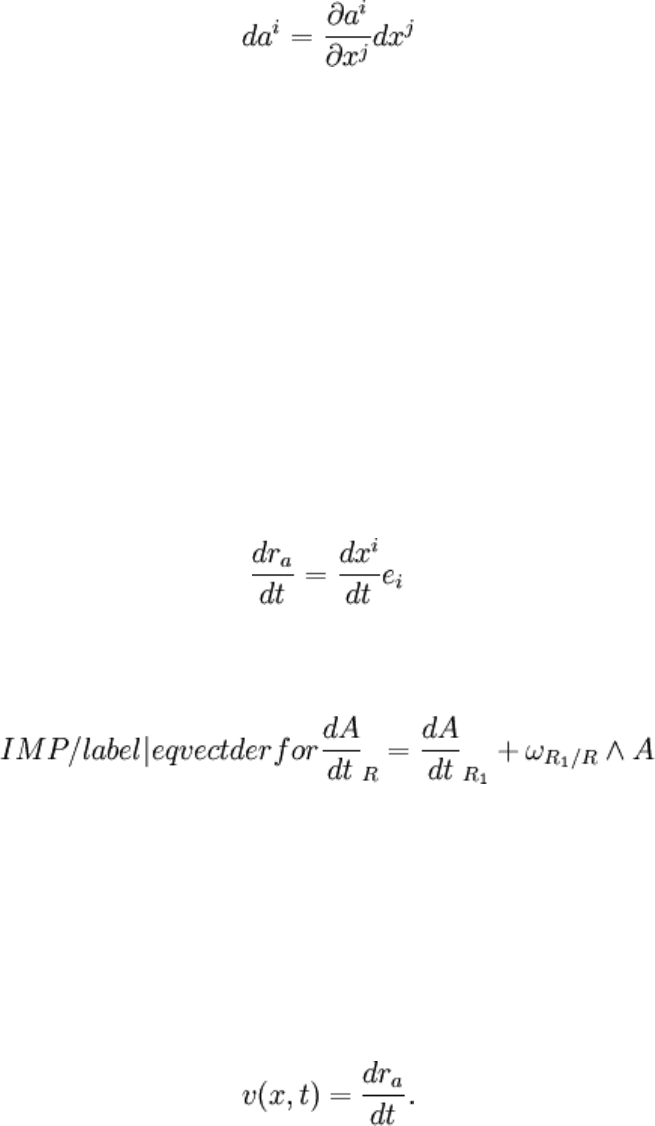

Consider two points M and M' with coordinates $x^i$ and $x^i + dx^i$. A first variation often considered in physics is:

The non-objective variation is

Note that $da^i$ is not a tensor and that equation eqapdai assumes that $e_i$ does not change from point M to point M'. It does not obey the tensor transformation relations. This is why it is called a non-objective variation. An objective variation, which allows one to define a tensor, is presented in the next section: it takes into account the variations of the basis vectors.

Example:

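Lagrangian speed: the Lagrangian description of the motion of a particle number a is given by its position $r_a$ at each time t. If

$$r_a(t) = x_i(t)\,e_i$$

the Lagrangian speed is:

$$v_a(t) = \frac{dr_a}{dt} = \frac{dx_i}{dt}\,e_i$$

The derivative introduced in example exmpderr is not objective, which means that it is not invariant under a change of axes. In particular, one has the famous vectorial derivation formula:

$$\left(\frac{dV}{dt}\right)_{R} = \left(\frac{dV}{dt}\right)_{R'} + \Omega_{R'/R} \times V$$

where $\Omega_{R'/R}$ is the rotation vector of frame R' with respect to frame R.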

Example:

The Eulerian description of a fluid is given by a field v(x,t) of "Eulerian" velocities and by initial conditions, such that:

$$x = r_a(0)$$

where $r_a$ is the Lagrangian position of the particle, and:

$$\frac{dr_a}{dt}(t) = v(r_a(t),t)$$

The Eulerian and Lagrangian descriptions are equivalent.
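Going from the Eulerian to the Lagrangian description amounts to integrating $\frac{dr_a}{dt} = v(r_a(t), t)$ from $r_a(0) = x$. The sketch below assumes, for illustration, the rigid-rotation field $v = (-\omega y, \omega x)$ in the plane and uses a classical fourth-order Runge-Kutta step. For $\omega = 1$, a particle starting at (1, 0) should reach (0, 1) after a quarter turn, at $t = \pi/2$.

```python
import math


def v(x: float, y: float, t: float, omega: float = 1.0) -> tuple[float, float]:
    """Assumed Eulerian velocity field: rigid rotation about the origin."""
    return -omega * y, omega * x


def lagrangian_position(x0: float, y0: float, t_final: float, n_steps: int = 1000) -> tuple[float, float]:
    """Integrate dr_a/dt = v(r_a(t), t) with classical RK4, starting from r_a(0) = (x0, y0)."""
    dt = t_final / n_steps
    x, y, t = x0, y0, 0.0
    for _ in range(n_steps):
        k1 = v(x, y, t)
        k2 = v(x + 0.5 * dt * k1[0], y + 0.5 * dt * k1[1], t + 0.5 * dt)
        k3 = v(x + 0.5 * dt * k2[0], y + 0.5 * dt * k2[1], t + 0.5 * dt)
        k4 = v(x + dt * k3[0], y + dt * k3[1], t + dt)
        x += dt / 6.0 * (k1[0] + 2.0 * k2[0] + 2.0 * k3[0] + k4[0])
        y += dt / 6.0 * (k1[1] + 2.0 * k2[1] + 2.0 * k3[1] + k4[1])
        t += dt
    return x, y


x, y = lagrangian_position(1.0, 0.0, math.pi / 2)
print(x, y)  # the particle starting at (1, 0) ends near (0, 1)
```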

Example:

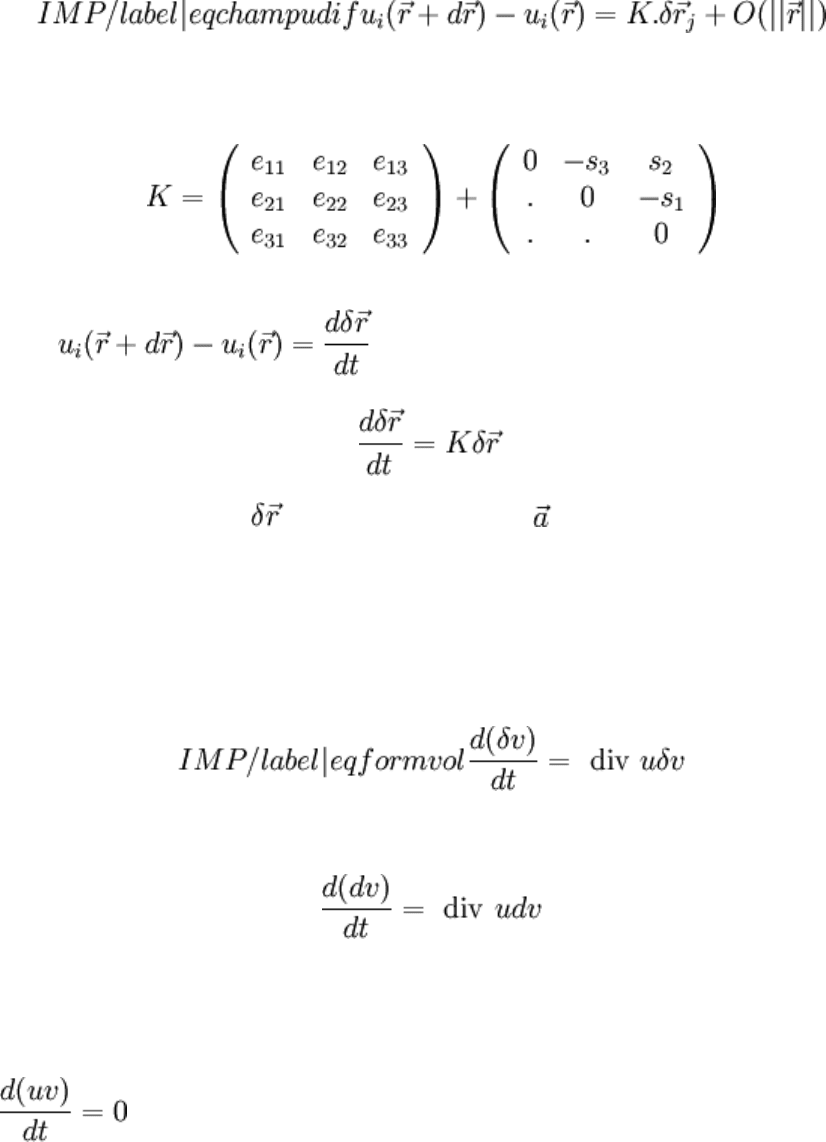

Let us consider the variation of the speed field u between two positions, at time t. If the speed field u is differentiable, there exists a linear mapping K such that:

$$u_i(x + dx, t) = u_i(x, t) + K_{ij}\,dx_j + o(dx)$$

$K_{ij} = u_{i,j}$ is called the speed field gradient tensor. Tensor K can be split into a symmetric and an antisymmetric part:

$$K_{ij} = \frac{1}{2}\left(u_{i,j} + u_{j,i}\right) + \frac{1}{2}\left(u_{i,j} - u_{j,i}\right)$$

The symmetric part is called the dilatation tensor, and the antisymmetric part is called the rotation tensor.
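Splitting K into its dilatation and rotation parts is a purely algebraic operation. A minimal sketch, using a simple shear flow chosen only as an example, shows that a shear contains both a deformation and a rotation. The helper name is hypothetical.

```python
def split_gradient(K: list[list[float]]) -> tuple[list[list[float]], list[list[float]]]:
    """Return (D, W) with D symmetric (dilatation), W antisymmetric (rotation) and K = D + W."""
    n = len(K)
    D = [[0.5 * (K[i][j] + K[j][i]) for j in range(n)] for i in range(n)]
    W = [[0.5 * (K[i][j] - K[j][i]) for j in range(n)] for i in range(n)]
    return D, W


# Hypothetical simple shear u = (gamma * x_2, 0, 0): the only nonzero entry is K_12 = gamma.
gamma = 2.0
K = [[0.0, gamma, 0.0],
     [0.0, 0.0, 0.0],
     [0.0, 0.0, 0.0]]
D, W = split_gradient(K)
print("dilatation part:", D)
print("rotation part:  ", W)
```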

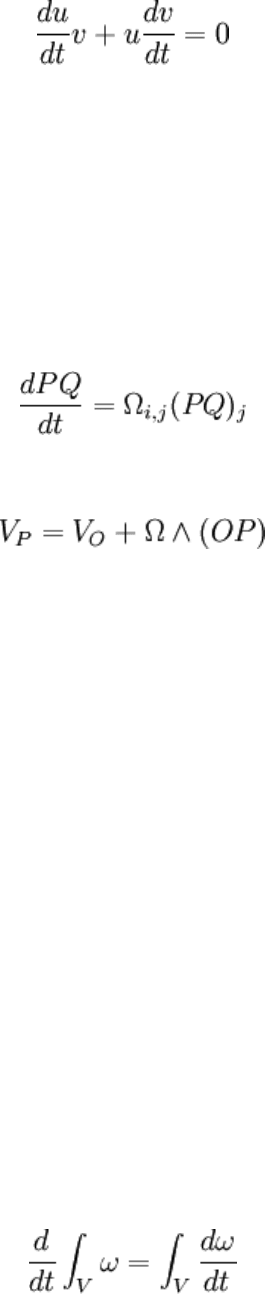

Now, . Thus, using equation eqchampudif:

This result, true for vector , is also true for any vector . This last equation allows one to show that:

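• The derivative with respect to time of the elementary volume dv in the neighbourhood of a particle that is followed in its movement is\footnote{Indeed, $d(\delta v) = d(\delta x)\,\delta y\,\delta z + d(\delta y)\,\delta x\,\delta z + d(\delta z)\,\delta x\,\delta y$.}:

$$\frac{d(dv)}{dt} = u_{i,i}\,dv = (\operatorname{div} u)\,dv$$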

• The speed field of a solid is antisymmetric\footnote{Indeed, let u and v be two position vectors bound to the solid. By definition of a solid, the scalar product $u_i v_i$ remains constant as time evolves. So:

$$\frac{d}{dt}(u_i v_i) = \frac{du_i}{dt}\,v_i + u_i\,\frac{dv_i}{dt} = 0$$

Since $\frac{du_i}{dt} = K_{ij}u_j$ for any vector bound to the medium, this gives:

$$K_{ij}u_j v_i + u_i K_{ij} v_j = 0$$

As this equality is true for any u, v, one has:

$$K_{ij} = -K_{ji}$$

In other words, K is antisymmetric. So, from the preceding theorem:

This can be rewritten by saying that the speed field is antisymmetric, {\it i.e.}, one has:

}.
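Both statements can be checked with a few lines of linear algebra. The sketch below uses hypothetical helper names and arbitrary numerical values. It moves the edges of a small cube with the linearized flow $\delta x \mapsto (I + K\,dt)\,\delta x$ to estimate $\frac{1}{dv}\frac{d(dv)}{dt}$, which should approach the trace $u_{i,i}$. It then verifies that an antisymmetric K leaves the scalar product of two vectors unchanged to first order.

```python
def det3(m: list[list[float]]) -> float:
    """Determinant of a 3x3 matrix (cofactor expansion along the first row)."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def volume_rate(K: list[list[float]], dt: float = 1e-6) -> float:
    """(1/dv) d(dv)/dt, estimated by moving the edges of a unit cube with (I + K dt) during dt."""
    J = [[(1.0 if i == j else 0.0) + K[i][j] * dt for j in range(3)] for i in range(3)]
    return (det3(J) - 1.0) / dt


def matvec(K: list[list[float]], u: list[float]) -> list[float]:
    return [sum(K[i][j] * u[j] for j in range(3)) for i in range(3)]


def dot(u: list[float], v: list[float]) -> float:
    return sum(a * b for a, b in zip(u, v))


# Arbitrary (hypothetical) velocity gradient: the rate should approach its trace u_{i,i} = 3.5.
K = [[1.0, 2.0, 0.0], [0.0, 3.0, 0.0], [0.0, 0.0, -0.5]]
rate = volume_rate(K)

# Antisymmetric gradient of a rigid motion (K u = w x u): d(u.v)/dt = (K u).v + u.(K v) vanishes.
w1, w2, w3 = 0.3, -1.2, 0.7
K_solid = [[0.0, -w3, w2], [w3, 0.0, -w1], [-w2, w1, 0.0]]
u, v = [1.0, 2.0, -1.0], [0.5, -0.3, 2.0]
d_dot = dot(matvec(K_solid, u), v) + dot(u, matvec(K_solid, v))
print(rate, d_dot)
```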

Example:

Particle derivative of a tensor:

The particle derivative is the time derivative of a quantity defined on a set of particles that are followed during their movement. When using Lagrange variables, it can be identified with the partial derivative with respect to time.

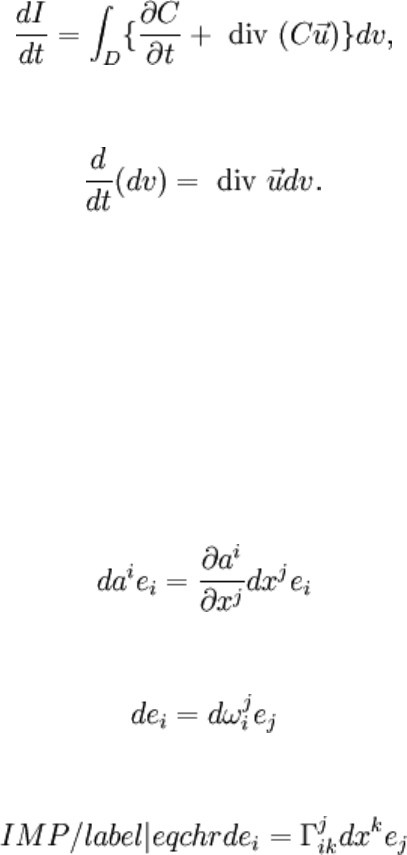

The following property can be shown: \begin{prop} Let us consider the integral:

$$I = \int_V \omega$$

where V is a connected manifold of dimension p (volume, surface...) that is followed during its movement, and ω is a differential form of degree p expressed in Euler variables. The particle derivative of I satisfies:

\end{prop} A proof of this result can be found in .

Example:

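Consider the integral

$$I = \int_D C(x,t)\,dv$$

where D is a bounded connected domain that is followed during its movement, and C is a scalar-valued function, continuous in the closure of D and differentiable in D. The particle derivative of I is

$$\frac{dI}{dt} = \int_D \left(\frac{dC}{dt} + C\,\operatorname{div} u\right) dv = \int_D \left(\frac{\partial C}{\partial t} + \operatorname{div}(C\,u)\right) dv$$

since, from equation eqformvol:

$$\frac{d(dv)}{dt} = (\operatorname{div} u)\,dv$$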

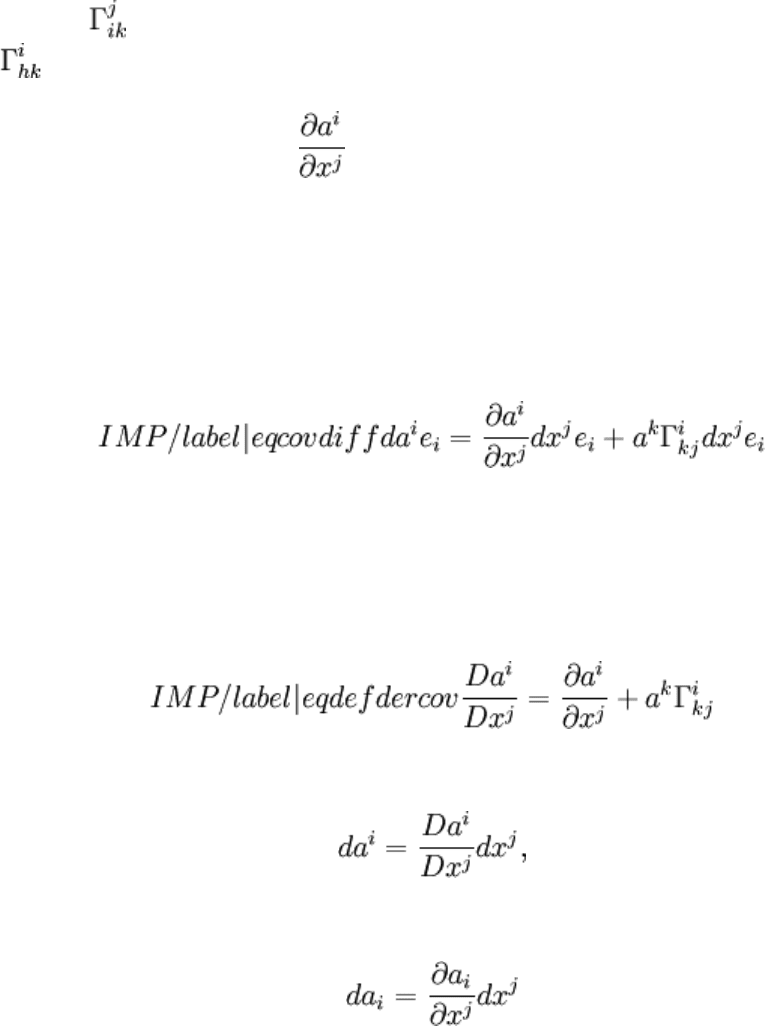

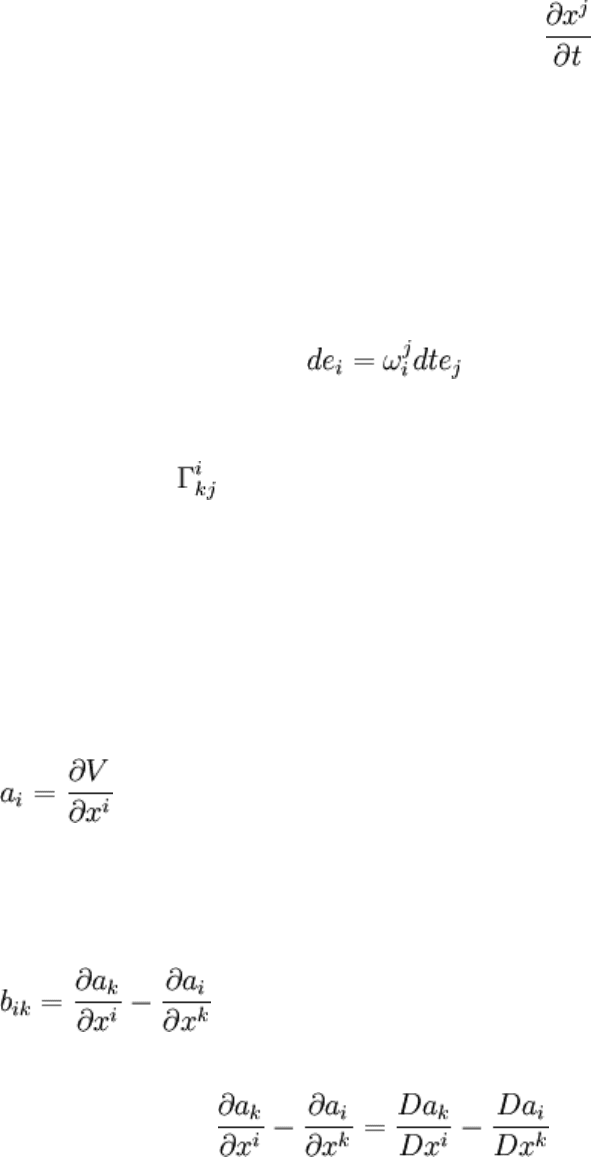
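The formula for the particle derivative of $\int_D C\,dv$ can be tested in one dimension, where it reads $\frac{d}{dt}\int_{D(t)} C\,dx = \int_{D(t)}\left(\frac{\partial C}{\partial t} + \frac{\partial (uC)}{\partial x}\right)dx$. In the sketch below, the velocity field $u(x,t) = x$ (so that particles follow $x_0 e^t$) and the field $C(x,t) = x^2 + t$ are assumptions. They are chosen so that both sides equal 8 at t = 0 for the material segment occupying [1, 2].

```python
import math


def simpson(f, lo: float, hi: float, n: int = 200) -> float:
    """Composite Simpson rule on [lo, hi] with an even number n of intervals."""
    h = (hi - lo) / n
    s = f(lo) + f(hi)
    for k in range(1, n):
        s += (4 if k % 2 else 2) * f(lo + k * h)
    return s * h / 3


def u(x: float, t: float) -> float:
    return x  # assumed velocity field: particles follow x(t) = x0 * e^t


def C(x: float, t: float) -> float:
    return x ** 2 + t  # assumed scalar field


def I(t: float) -> float:
    """Integral of C over the material segment that occupies [1, 2] at t = 0."""
    return simpson(lambda x: C(x, t), math.exp(t), 2 * math.exp(t))


dt = 1e-5
lhs = (I(dt) - I(-dt)) / (2 * dt)  # particle derivative of I at t = 0 (central difference)


def integrand(x: float, t: float = 0.0, h: float = 1e-5) -> float:
    """dC/dt + d(uC)/dx at (x, t), both by central differences."""
    dC_dt = (C(x, t + h) - C(x, t - h)) / (2 * h)
    d_uC_dx = (u(x + h, t) * C(x + h, t) - u(x - h, t) * C(x - h, t)) / (2 * h)
    return dC_dt + d_uC_dx


rhs = simpson(integrand, 1.0, 2.0)
print(lhs, rhs)  # both close to the exact value 8
```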

===Covariant derivative===

In this section, a derivative that is independent of the considered reference frame (an objective derivative) is introduced. Consider the difference between a quantity a evaluated at two points M and M':

$$da = a(M') - a(M) = da^i\,e_i + a^i\,de_i$$

As in section secderico, the variation $de_i$ is linearly related to the $e_j$'s {\it via} the tangent map:

$$de_i = \omega_i^j\,e_j$$

The rotation vector depends linearly on the displacement:

$$\omega_i^j = \Gamma^j_{ik}\,dx^k$$

The symbols $\Gamma^j_{ik}$, called Christoffel symbols\footnote{In a space with metric $g_{ij}$, the coefficients $\Gamma^j_{ik}$ can be expressed as functions of the coefficients $g_{ij}$.}, are not\footnote{Just as $da^i$ is not a tensor. However, $d(a^i e_i)$ given by equation eqcovdiff does have the tensor properties.} tensors. They connect the properties of space at M to its properties at point M'. By a change of index in equation eqchr:

$$a^i\,de_i = \Gamma^i_{kj}\,a^k\,dx^j\,e_i$$

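As the $x^j$'s are independent variables:

$$da^i = \frac{\partial a^i}{\partial x^j}\,dx^j$$

Definition:

The covariant derivative of a contravariant vector $a^i$ is

$$a^i_{;j} = \frac{\partial a^i}{\partial x^j} + \Gamma^i_{kj}\,a^k$$

The differential can thus be written:

$$da = a^i_{;j}\,dx^j\,e_i$$

which is the generalization of the differential:

$$da = \frac{\partial a^i}{\partial x^j}\,dx^j\,e_i$$

considered when there is no transformation of axes. This formula can be generalized to tensors.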
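As an illustration of the definition $a^i_{;j} = \partial a^i/\partial x^j + \Gamma^i_{kj}a^k$, the sketch below evaluates it in polar coordinates $(r, \theta)$ of the Euclidean plane. The nonzero Christoffel symbols $\Gamma^r_{\theta\theta} = -r$ and $\Gamma^\theta_{r\theta} = \Gamma^\theta_{\theta r} = 1/r$ are the standard values for that coordinate system; they are assumed here rather than derived in the text. Partial derivatives are taken by central differences. For the field $x\,e_x + y\,e_y$, whose polar components are $(a^r, a^\theta) = (r, 0)$, the covariant derivative should be the identity $\delta^i_j$, with trace 2, the Cartesian divergence. The plain partial derivatives alone would only give a trace of 1.

```python
def gamma_polar(q: list[float]) -> list[list[list[float]]]:
    """Christoffel symbols G[i][k][j] = Gamma^i_{kj} of the Euclidean plane in polar coordinates (r, theta)."""
    r = q[0]
    G = [[[0.0, 0.0], [0.0, 0.0]], [[0.0, 0.0], [0.0, 0.0]]]
    G[0][1][1] = -r         # Gamma^r_{theta theta}
    G[1][0][1] = 1.0 / r    # Gamma^theta_{r theta}
    G[1][1][0] = 1.0 / r    # Gamma^theta_{theta r}
    return G


def covariant_derivative(a, gamma, q: list[float], h: float = 1e-6) -> list[list[float]]:
    """Return D[i][j] = a^i_{;j} = d a^i / d x^j + Gamma^i_{kj} a^k (partials by central differences)."""
    n = len(q)
    comps = a(q)
    G = gamma(q)
    result = [[0.0] * n for _ in range(n)]
    for j in range(n):
        qp = list(q)
        qp[j] += h
        qm = list(q)
        qm[j] -= h
        ap, am = a(qp), a(qm)
        for i in range(n):
            partial = (ap[i] - am[i]) / (2 * h)
            result[i][j] = partial + sum(G[i][k][j] * comps[k] for k in range(n))
    return result


def radial_field(q: list[float]) -> list[float]:
    """Contravariant polar components of the field x e_x + y e_y: (a^r, a^theta) = (r, 0)."""
    return [q[0], 0.0]


D = covariant_derivative(radial_field, gamma_polar, [1.5, 0.4])
divergence = D[0][0] + D[1][1]  # contraction a^i_{;i}
print(D, divergence)
```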

Remark:

For the calculation of the particle derivative presented in section secderico, the $x^j$ are the coordinates of the point, but the quantity to differentiate also depends on time. That is why a term $\frac{\partial a}{\partial t}$ appears in equation eqformalder but not in equation eqdefdercov.

Remark:

From equation eqdefdercov, the vectorial derivation formula of equation eqvectderfor can be recovered when:

Remark:

In spaces with a metric, the Christoffel symbols $\Gamma^i_{jk}$ are functions of the metric tensor $g_{ij}$.
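For the Levi-Civita connection of a metric, the standard relation is $\Gamma^i_{jk} = \frac{1}{2}g^{il}\left(\partial_j g_{lk} + \partial_k g_{lj} - \partial_l g_{jk}\right)$. It is assumed here, since the text only states the dependence. The sketch below evaluates this relation with finite-difference derivatives for a two-dimensional metric. It uses polar coordinates, $g = \mathrm{diag}(1, r^2)$, as a test case, where one expects $\Gamma^r_{\theta\theta} = -r$ and $\Gamma^\theta_{r\theta} = 1/r$.

```python
def metric_polar(q: list[float]) -> list[list[float]]:
    """Assumed example: Euclidean plane in polar coordinates q = (r, theta), g = diag(1, r**2)."""
    r = q[0]
    return [[1.0, 0.0], [0.0, r * r]]


def inverse_2x2(m: list[list[float]]) -> list[list[float]]:
    (a, b), (c, d) = m
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]


def christoffel_2d(metric, q: list[float], h: float = 1e-6) -> list[list[list[float]]]:
    """G[i][j][k] = Gamma^i_{jk} = 1/2 g^{il} (d_j g_{lk} + d_k g_{lj} - d_l g_{jk}), 2D only."""
    n = 2

    def dg(l: int) -> list[list[float]]:
        """Central-difference partial derivative of the metric with respect to q^l."""
        qp = list(q)
        qp[l] += h
        qm = list(q)
        qm[l] -= h
        gp, gm = metric(qp), metric(qm)
        return [[(gp[a][b] - gm[a][b]) / (2 * h) for b in range(n)] for a in range(n)]

    d = [dg(l) for l in range(n)]  # d[l][a][b] = d_l g_{ab}
    ginv = inverse_2x2(metric(q))
    return [[[0.5 * sum(ginv[i][l] * (d[j][l][k] + d[k][l][j] - d[l][j][k]) for l in range(n))
              for k in range(n)] for j in range(n)] for i in range(n)]


G = christoffel_2d(metric_polar, [2.0, 0.3])
print(G[0][1][1], G[1][0][1])  # Gamma^r_{theta theta} and Gamma^theta_{r theta} at r = 2
```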

Covariant differential operators

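The following differential operators with tensorial properties can be defined:

• Gradient of a scalar:

$$a_i = (\operatorname{grad} V)_i$$

with $a_i = \frac{\partial V}{\partial x^i}$.

• Rotational (curl) of a vector:

$$b_{ij} = (\operatorname{rot} a)_{ij}$$

with $b_{ij} = \frac{\partial a_i}{\partial x^j} - \frac{\partial a_j}{\partial x^i}$. The tensoriality of the rotational can be shown using the tensoriality of the covariant derivative. Since the Christoffel symbols are symmetric in their lower indices, the $\Gamma$ terms cancel:

$$a_{i;j} - a_{j;i} = \frac{\partial a_i}{\partial x^j} - \frac{\partial a_j}{\partial x^i}$$

• Divergence of a contravariant density:

$$d = \operatorname{div} a^i$$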