Engelbrecht Andries P. Computational Intelligence: An Introduction

Подождите немного. Документ загружается.

28 3. Supervised Learning Neural Networks

3.1.1 Feedforward Neural Networks

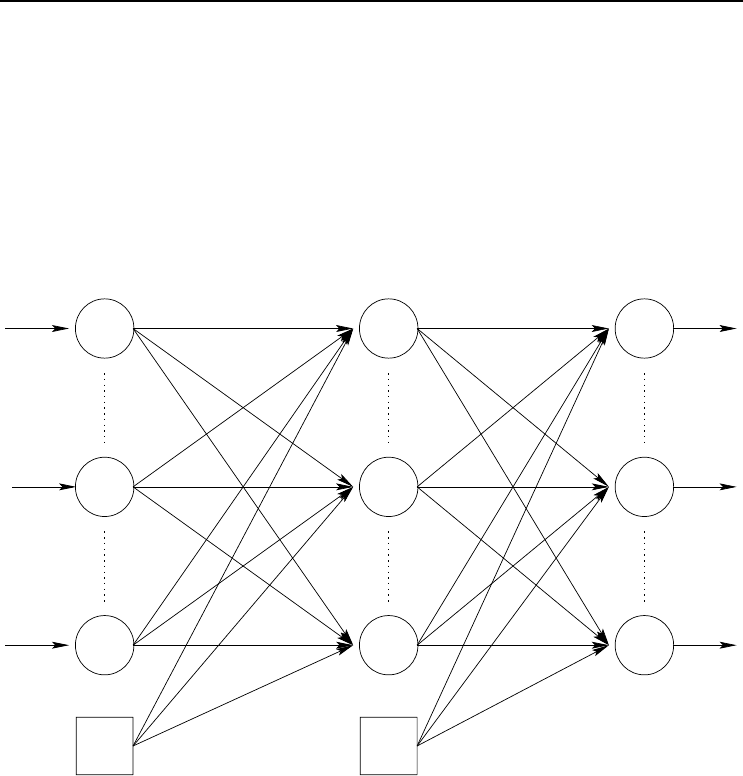

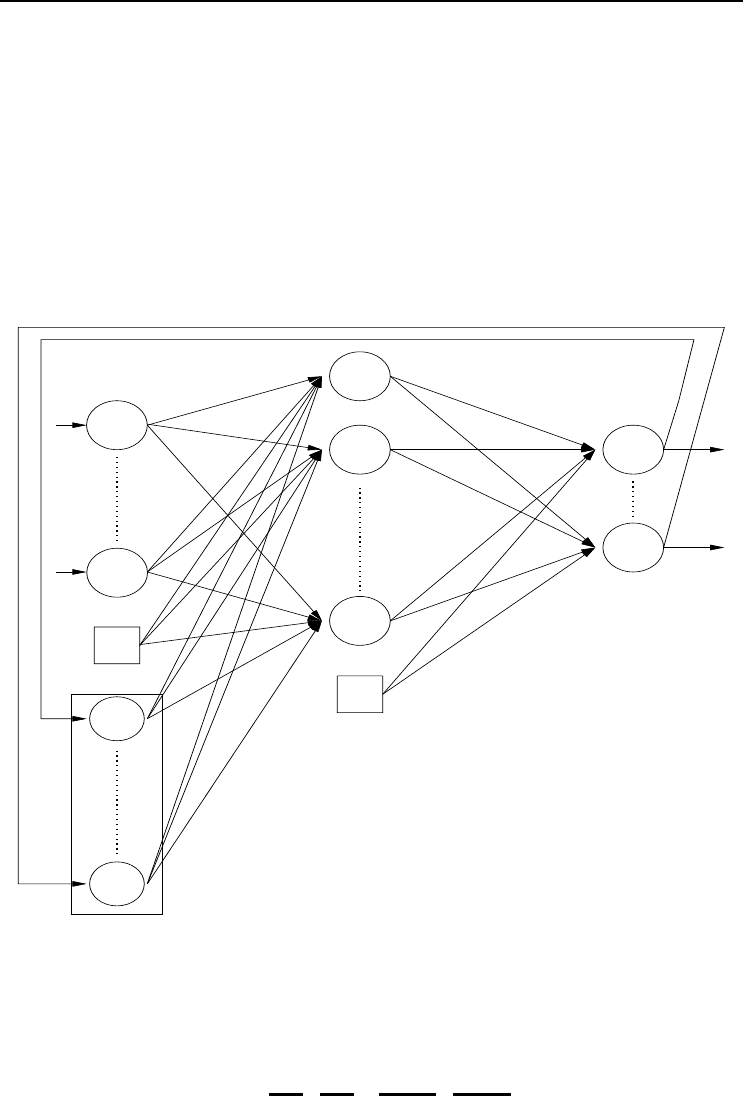

Figure 3.1 illustrates a standard feedforward neural network (FFNN), consisting of

three layers: an input layer (note that some literature on NNs do not count the input

layer as a layer), a hidden layer and an output layer. While this figure illustrates

only one hidden layer, a FFNN can have more than one hidden layer. However, it

has been proved that FFNNs with monotonically increasing differentiable functions

can approximate any continuous function with one hidden layer, provided that the

hidden layer has enough hidden neurons [383]. A FFNN can also have direct (linear)

connections between the input layer and the output layer.

−1−1

v

ji

v

JI

w

kj

z

I

z

i

z

I+1

z

1

y

J+1

y

J

y

j

y

1

v

11

w

11

o

1

o

k

o

K

v

J,I +1

w

K,J+1

v

1i

w

1j

w

J+1,k

w

KJ

v

I+1,j

Figure 3.1 Feedforward Neural Network

The output of a FFNN for any given input pattern z

p

is calculated with a single

forward pass through the network. For each output unit o

k

, we have (assuming no

direct connections between the input and output layers),

o

k,p

= f

o

k

(net

o

k,p

)

= f

o

k

J+1

j=1

w

kj

f

y

j

(net

y

j,p

)

= f

o

k

J+1

j=1

w

kj

f

y

j

I+1

i=1

v

ji

z

i,p

(3.1)

where f

o

k

and f

y

j

are respectively the activation function for output unit o

k

and

3.1 Neural Network Types 29

hidden unit y

j

; w

kj

is the weight between output unit o

k

and hidden unit y

j

; z

i,p

is the value of input unit z

i

of input pattern z

p

;the(I + 1)-th input unit and the

(J + 1)-th hidden unit are bias units representing the threshold values of neurons in

the next layer.

Note that each activation function can be a different function. It is not necessary that

all activation functions be the same. Also, each input unit can implement an activation

function. It is usually assumed that input units have linear activation functions.

z

I

z

i

z

1

−1

w

kj

y

J+1

y

J

y

j

y

1

w

11

o

1

o

k

o

K

w

K,J+1

w

1j

w

J+1,k

w

KJ

u

11

u

L2

v

jL

h

L−1

u

31

h

1

h

2

h

3

h

L

−1

v

J,L+1

v

11

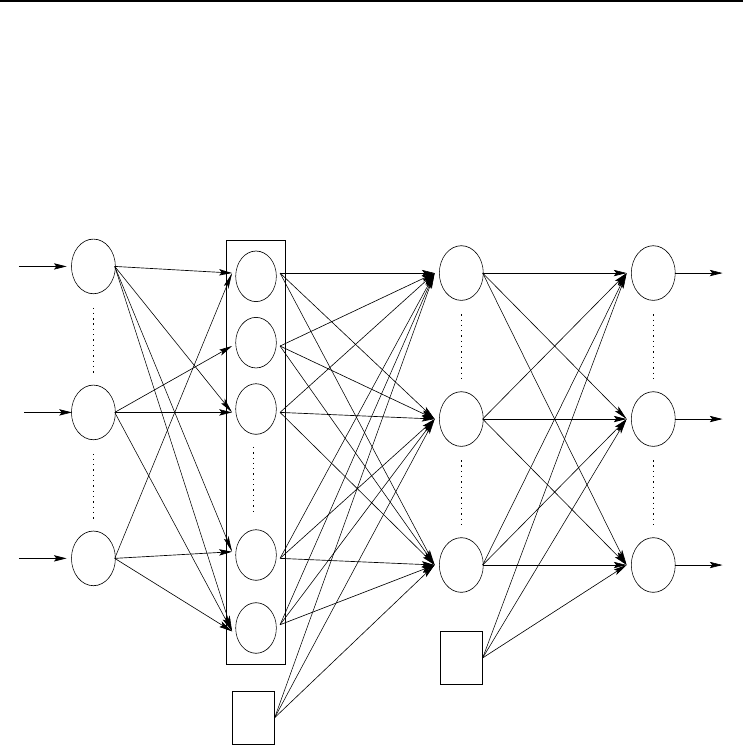

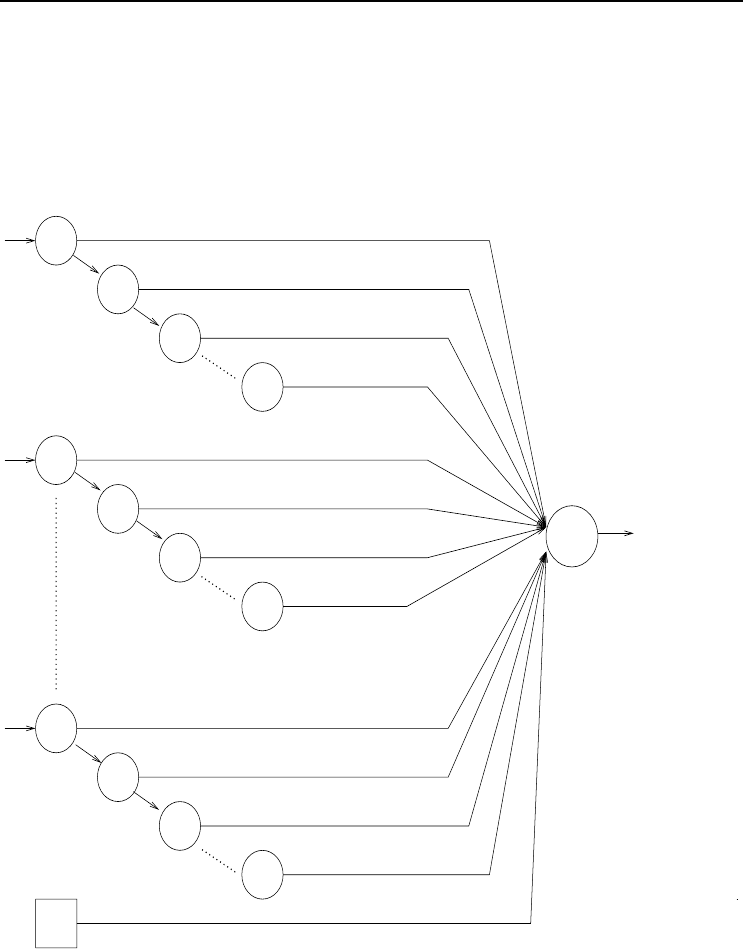

Figure 3.2 Functional Link Neural Network

3.1.2 Functional Link Neural Networks

In functional link neural networks (FLNN) input units do implement activation func-

tions (or rather, transformation functions). A FLNN is simply a FFNN with the input

layer expanded into a layer of functional higher-order units [314, 401]. The input layer,

with dimension I, is therefore expanded to functional units h

1

,h

2

, ···,h

L

, where L is

the total number of functional units, and each functional unit h

l

is a function of the

input parameter vector (z

1

, ···,z

I

), i.e. h

l

(z

1

, ···,z

I

) (see Figure 3.2). The weight

matrix U between the input layer and the layer of functional units is defined as

u

li

=

1 if functional unit h

l

is dependent of z

i

0otherwise

(3.2)

For FLNNs, v

jl

is the weight between hidden unit y

j

and functional link h

l

.

30 3. Supervised Learning Neural Networks

Calculation of the activation of each output unit o

k

occurs in the same manner as for

FFNNs, except that the additional layer of functional units is taken into account:

o

k,p

= f

o

k

J+1

j=1

w

kj

f

y

j

L

l=1

v

jl

h

l

(z

p

)

(3.3)

The use of higher-order combinations of input units may result in faster training times

and improved accuracy (see, for example, [314, 401]).

3.1.3 Product Unit Neural Networks

Product unit neural networks (PUNN) have neurons that compute the weighted prod-

uct of input signals, instead of a weighted sum [222, 412, 509]. For product units, the

net input is computed as given in equation (2.5).

Different PUNNs have been suggested. In one type each input unit is connected to

SUs, and to a dedicated group of PUs. Another PUNN type has alternating layers of

product and summation units. Due to the mathematical complexity of having PUs

in more than one hidden layer, this section only illustrates the case for which just

the hidden layer has PUs, and no SUs. The output layer has only SUs, and linear

activation functions are assumed for all neurons in the network. Then, for each hidden

unit y

j

, the net input to that hidden unit is (note that no bias is included)

net

y

j,p

=

I

i=1

z

v

ji

i,p

=

I

i=1

e

v

ji

ln(z

i,p

)

= e

i

v

ji

ln(z

i,p

)

(3.4)

where z

i,p

is the activation value of input unit z

i

,andv

ji

is the weight between input

z

i

and hidden unit y

j

.

An alternative to the above formulation of the net input signal for PUs is to include

a “distortion” factor within the product [406], such as

net

y

j,p

=

I+1

i=1

z

v

ji

i,p

(3.5)

where z

I+1,p

= −1 for all patterns; v

j,I+1

represents the distortion factor. The purpose

of the distortion factor is to dynamically shape the activation function during training

to more closely fit the shape of the true function represented by the training data.

If z

i,p

< 0, then z

i,p

can be written as the complex number z

i,p

= ı

2

|z

i,p

| (ı =

√

−1)

that, substituted in (3.4), yields

net

y

j,p

= e

i

v

ji

ln |z

i,p

|

e

i

v

ji

ln ı

2

(3.6)

3.1 Neural Network Types 31

Let c =0+ı = a + bı be a complex number representing ı. Then,

ln c =lnre

ıθ

=lnr + ıθ +2πkı (3.7)

where r =

√

a

2

+ b

2

=1.

Considering only the main argument, arg(c), k = 0 which implies that 2πkı =0.

Furthermore, θ =

π

2

for ı =(0, 1). Therefore, ıθ = ı

π

2

, which simplifies equation (3.10)

to ln c = ı

π

2

, and consequently,

ln ı

2

= ıπ (3.8)

Substitution of (3.8) in (3.6) gives

net

y

j,p

= e

i

v

ji

ln |z

i,p

|

e

i

v

ji

πı

= e

i

v

ji

ln |z

i,p

|

cos(

I

i=1

v

ji

π)+ı sin

I

i=1

v

ji

π

(3.9)

Leaving out the imaginary part ([222] show that the added complexity of including

the imaginary part does not help with increasing performance),

net

y

j,p

= e

i

v

ji

ln |z

i,p

|

cos

π

I

i=1

v

ji

(3.10)

Now, let

ρ

j,p

=

I

i=1

v

ji

ln |z

i,p

| (3.11)

φ

j,p

=

I

i=1

v

ji

I

i

(3.12)

with

I

i

=

0ifz

i,p

> 0

1ifz

i,p

< 0

(3.13)

and z

i,p

=0.

Then,

net

y

j,p

= e

ρ

j,p

cos(πφ

j,p

) (3.14)

The output value for each output unit is then calculated as

o

k,p

= f

o

k

J+1

j=1

w

kj

f

y

j

(e

ρ

j,p

cos(πφ

j,p

))

(3.15)

Note that a bias is now included for each output unit.

32 3. Supervised Learning Neural Networks

3.1.4 Simple Recurrent Neural Networks

Simple recurrent neural networks (SRNN) have feedback connections which add the

ability to also learn the temporal characteristics of the data set. Several different types

of SRNNs have been developed, of which the Elman and Jordan SRNNs are simple

extensions of FFNNs.

Ŧ1

Ŧ1

Context layer

Figure 3.3 Elman Simple Recurrent Neural Network

The Elman SRNN [236], as illustrated in Figure 3.3, makes a copy of the hidden

layer, which is referred to as the context layer. The purpose of the context layer is to

store the previous state of the hidden layer, i.e. the state of the hidden layer at the

previous pattern presentation. The context layer serves as an extension of the input

layer, feeding signals representing previous network states, to the hidden layer. The

input vector is therefore

z =(z

1

, ···,z

I+1

actual inputs

,z

I+2

, ···,z

I+1+J

context units

) (3.16)

Context units z

I+2

, ···,z

I+1+J

are fully interconnected with all hidden units. The

connections from each hidden unit y

j

(for j =1, ···,J) to its corresponding context

3.1 Neural Network Types 33

unit z

I+1+j

have a weight of 1. Hence, the activation value y

j

is simply copied to

z

I+1+j

. It is, however, possible to have weights not equal to 1, in which case the

influence of previous states is weighted. Determining such weights adds additional

complexity to the training step.

Each output unit’s activation is then calculated as

o

k,p

= f

o

k

J+1

j=1

w

kj

f

y

j

(

I+1+J

i=1

v

ji

z

i,p

)

(3.17)

where (z

I+2,p

, ···,z

I+1+J,p

)=(y

1,p

(t − 1), ···,y

J,p

(t − 1)).

Ŧ1

Ŧ1

State layer

Figure 3.4 Jordan Simple Recurrent Neural Network

Jordan SRNNs [428], on the other hand, make a copy of the output layer instead of

the hidden layer. The copy of the output layer, referred to as the state layer, extends

the input layer to

z =(z

1

, ···,z

I+1

actual inputs

,z

I+2

, ···,z

I+1+K

state units

) (3.18)

The previous state of the output layer then also serves as input to the network. For

34 3. Supervised Learning Neural Networks

each output unit,

o

k,p

= f

o

k

J+1

j=1

w

kj

f

y

j

I+1+K

i=1

v

ji

z

i,p

(3.19)

where (z

I+2,p

, ···,z

I+1+K,p

)=(o

1,p

(t − 1), ···,o

K,p

(t − 1)).

Ŧ1

z

I

(t)

z

2

(t)

z

1

(t)

y

j

v

j,1(t)

v

j,1(t−1)

v

j,1(t−2)

v

j,1(t−n

t

)

v

j,2(t)

v

j,2(t−1)

v

j,2(t−2)

v

j,2(t−n

t

)

v

j,I(t)

v

j,I(t−1)

v

j,I(t−2)

v

j,I(t−n

t

)

v

j,I+1

z

I

(t − 1)

z

I

(t − 2)

z

I

(t − n

t

)

z

2

(t − n

t

)

z

2

(t − 2)

z

2

(t − 1)

z

1

(t − n

t

)

z

1

(t − 2)

z

1

(t − 1)

Figure 3.5 A Single Time-Delay Neuron

3.1.5 Time-Delay Neural Networks

A time-delay neural network (TDNN) [501], also referred to as backpropagation-

through-time, is a temporal network with its input patterns successively delayed in

3.1 Neural Network Types 35

time. A single neuron with n

t

time delays for each input unit is illustrated in Fig-

ure 3.5. This type of neuron is then used as a building block to construct a complete

feedforward TDNN.

Initially, only z

i,p

(t), with t = 0, has a value and z

i,p

(t −t

) is zero for all i =1, ···,I

with time steps t

=1, ···,n

t

; n

t

is the total number of time steps, or number of delayed

patterns. Immediately after the first pattern is presented, and before presentation of

the second pattern,

z

i,p

(t − 1) = z

i,p

(t) (3.20)

After presentation of t

patterns and before the presentation of pattern t

+ 1, for all

t =1, ···,t

,

z

i,p

(t − t

)=z

i,p

(t − t

+ 1) (3.21)

This causes a total of n

t

patterns to influence the updates of weight values, thus

allowing the temporal characteristics to drive the shaping of the learned function.

Each connection between z

i,p

(t − t

)andz

i,p

(t − t

+ 1) has a value of 1.

The output of a TDNN is calculated as

o

k,p

= f

o

k

J+1

j=1

w

kj

f

y

j

I

i=1

n

t

t=0

v

j,i(t)

z

i,p

(t)+z

I+1

v

j,I+1

(3.22)

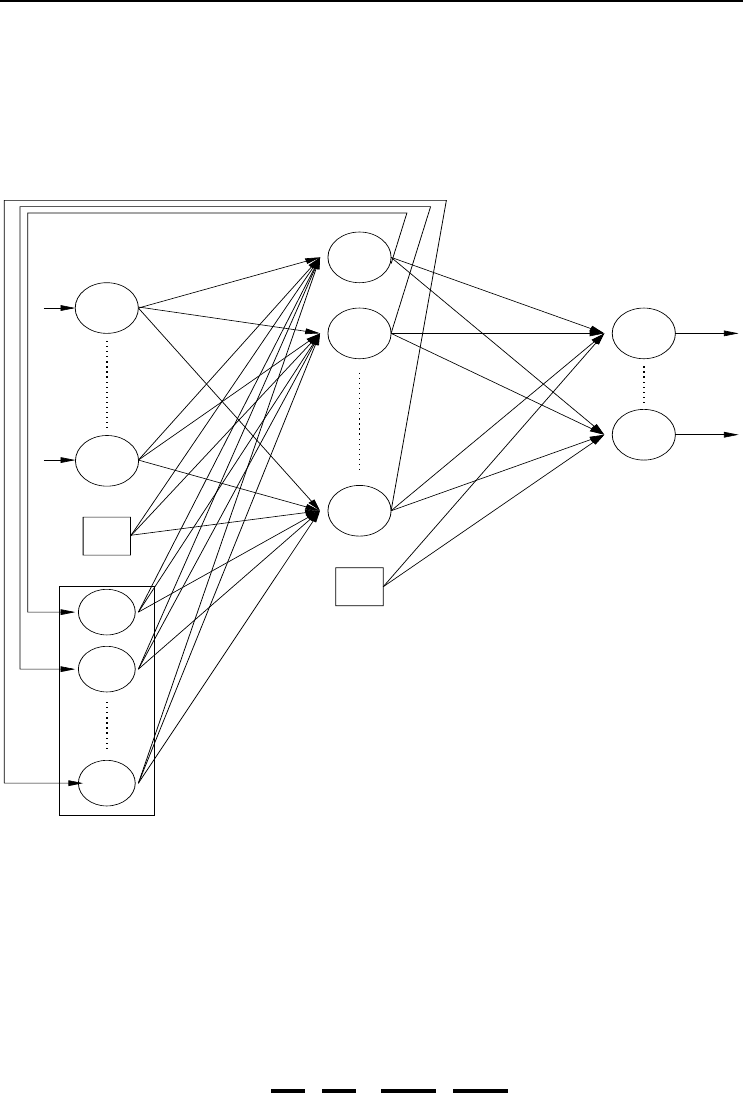

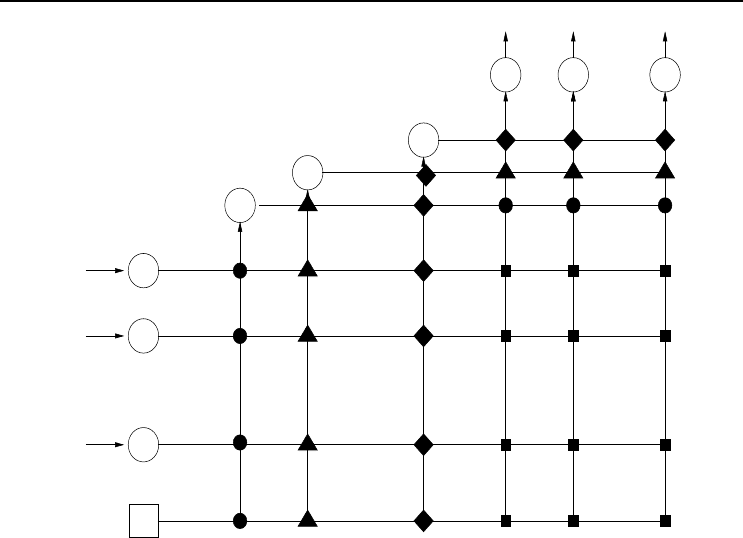

3.1.6 Cascade Networks

A cascade NN (CNN) [252, 688] is a multilayer FFNN where all input units have direct

connections to all hidden units and to all output units. Furthermore, the hidden units

are cascaded. That is, each hidden unit’s output serves as an input to all succeeding

hidden units and all output units. Figure 3.6 illustrates a CNN.

The output of a CNN is calculated is

o

k,p

= f

o

k

I+1

i=1

u

ki

z

i

+

J

j=1

w

kj

f

y

j

I+1

i=1

v

ji

z

i

+

j−1

l=1

s

jl

y

l

(3.23)

where u

ki

represents a weight between output unit k and input unit i, s

jl

is a weight

between hidden units j and l,andy

l

is the activation of hidden unit l.

At this point it is important to note that training of a CNN consists of finding weight

values and the size of the NN. Training starts with the simplest architecture containing

only the (I +1)K direct weights between input and output units (indicated by a solid

square in Figure 3.6). If the accuracy of the CNN is unacceptable one hidden unit

is added, which adds another (I +1)J +(J − 1) + JK weights to the network. If

J = 1, the network includes the weights indicated by the filled squares and circles in

Figure 3.6. When J = 2, the weights marked by filled triangles are added.

36 3. Supervised Learning Neural Networks

Ŧ1

z

1

z

2

z

I

z

I+1

y

1

y

2

y

J

o

2

o

1

o

K

Figure 3.6 Cascade Neural Network

3.2 Supervised Learning Rules

Up to this point it was shown how NNs can be used to calculate an output value given

an input pattern. This section explains approaches to train the NN such that the

output of the network is an accurate approximation of the target values. First, the

learning problem is explained, and then different training algorithms are described.

3.2.1 The Supervised Learning Problem

Consider a finite set of input-target pairs D = {d

p

=(z

p

, t

p

)|p =1, ···,P} sampled

from a stationary density Ω(D), with z

i,p

,t

k,p

∈ R for i =1, ···,I and k =1, ···,K;

z

i,p

is the value of input unit z

i

and t

k,p

is the target value of output unit o

k

for

pattern p. According to the signal-plus-noise model,

t

p

= µ(z

p

)+ζ

p

(3.24)

where µ(z) is the unknown function. The input values z

i,p

are sampled with probability

density ω(z), and the ζ

k,p

are independent, identically distributed noise sampled with

density φ(ζ), having zero mean. The objective of learning is then to approximate the

unknown function µ(z) using the information contained in the finite data set D.For

NN learning this is achieved by dividing the set D randomly into a training set D

T

,

a validation set D

V

, and a test set D

G

(all being dependent from one another). The

3.2 Supervised Learning Rules 37

approximation to µ(z) is found from the training set D

T

, memorization is determined

from D

V

(more about this later), and the generalization accuracy is estimated from

the test set D

G

(more about this later).

Since prior knowledge about Ω(D) is usually not known, a nonparametric regression

approach is used by the NN learner to search through its hypothesis space H for a

function f

NN

(D

T

, W) which gives a good estimation of the unknown function µ(z),

where f

NN

(D

T

, W) ∈H. For multilayer NNs, the hypothesis space consists of all

functions realizable from the given network architecture as described by the weight

vector W .

During learning, the function f

NN

: R

I

−→ R

K

is found which minimizes the empirical

error

E

T

(D

T

; W)=

1

P

T

P

T

p=1

(F

NN

(z

p

, W) − t

p

)

2

(3.25)

where P

T

is the total number of training patterns. The hope is that a small empirical

(training) error will also give a small true error, or generalization error, defined as

E

G

(Ω; W)=

(f

NN

(z, W) − t)

2

dΩ(z, t) (3.26)

For the purpose of NN learning, the empirical error in equation (3.25) is referred

to as the objective function to be optimized by the optimization method. Several

optimization algorithms for training NNs have been developed [51, 57, 221]. These

algorithms are grouped into two classes:

• Local optimization, where the algorithm may get stuck in a local optimum

without finding a global optimum. Gradient descent and scaled conjugate gra-

dient are examples of local optimizers.

• Global optimization, where the algorithm searches for the global optimum

by employing mechanisms to search larger parts of the search space. Global

optimizers include LeapFrog, simulated annealing, evolutionary algorithms and

swarm optimization.

Local and global optimization techniques can be combined to form hybrid training

algorithms.

Learning consists of adjusting weights until an acceptable empirical error has been

reached. Two types of supervised learning algorithms exist, based on when weights

are updated:

• Stochastic/online learning, where weights are adjusted after each pattern

presentation. In this case the next input pattern is selected randomly from

the training set, to prevent any bias that may occur due to the order in which

patterns occur in the training set.

• Batch/offline learning, where weight changes are accumulated and used to

adjust weights only after all training patterns have been presented.