Francoise J.-P., Naber G.L., Tsun T.S. (editors) Encyclopedia of Mathematical Physics


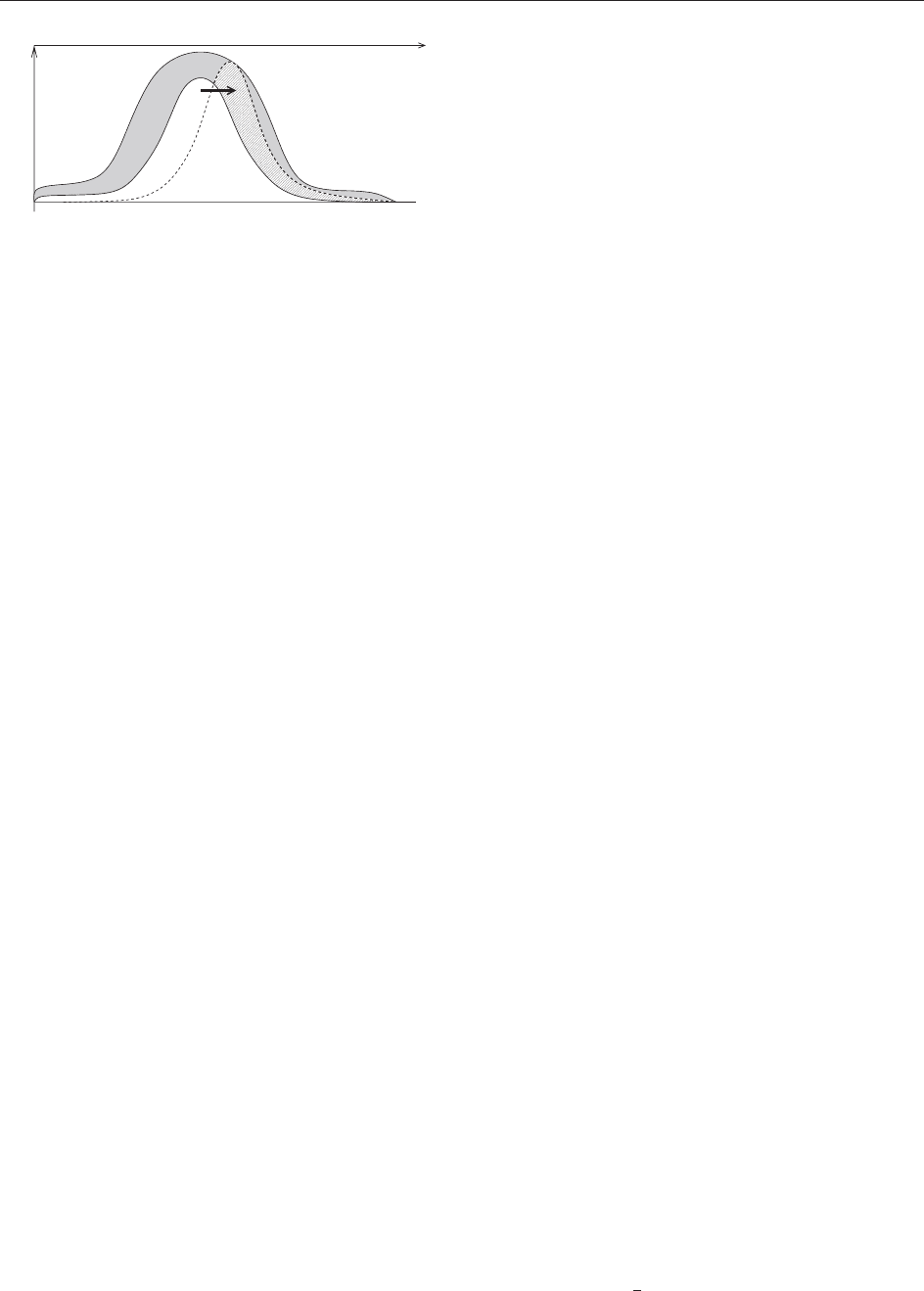

consider only the significant wavelet coefficients of the solution. Hence, we only retain coefficients $\tilde{u}^n_\lambda$ whose modulus is larger than a given threshold $\varepsilon$, that is, $|\tilde{u}^n_\lambda| > \varepsilon$. The corresponding coefficients are shown in Figure 7 (white area under the solid line curve).

Adaption strategy To be able to integrate the equation in time, we have to account for the evolution of the solution in wavelet coefficient space (indicated by the arrow in Figure 7). Therefore, at time step $t^n$ we add to the retained coefficients their neighbors, which constitute a security zone (gray area in Figure 7). The equation is then solved in this enlarged coefficient set (white and gray areas below the curves in Figure 7) to obtain $\tilde{u}^{n+1}_\lambda$. Subsequently, we threshold the coefficients and retain only those whose modulus satisfies $|\tilde{u}^{n+1}_\lambda| > \varepsilon$ (coefficients under the dashed curve in Figure 7). This strategy is applied in each time step and hence allows one to track the evolution of the solution automatically in both scale and space.
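The adaption loop can be sketched in a few lines. This is a minimal illustration, assuming a dyadic index λ = (j, k) with 2^j positions on level j and periodic boundaries; the neighbourhood used for the security zone (two spatial neighbours plus two children on the next finer level) is one simple choice made here, not necessarily the one used in the computations reported below, and `evolve` is a hypothetical placeholder for the time integrator acting on the enlarged set.

```python
def significant(coeffs, eps):
    """Indices lam = (j, k) whose coefficient modulus exceeds eps."""
    return {lam for lam, c in coeffs.items() if abs(c) > eps}


def security_zone(active, j_max):
    """Enlarge the retained set: for each (j, k) add its two spatial
    neighbours on level j (periodic, 2**j positions) and its two
    children on level j + 1, provided j + 1 <= j_max."""
    zone = set(active)
    for j, k in active:
        n = 2 ** j
        zone.add((j, (k - 1) % n))
        zone.add((j, (k + 1) % n))
        if j + 1 <= j_max:
            zone.add((j + 1, 2 * k))
            zone.add((j + 1, 2 * k + 1))
    return zone


def adaptive_step(coeffs, eps, j_max, evolve):
    """One time step: keep |u| > eps, add the security zone, advance with
    the (hypothetical) integrator `evolve`, then threshold again at eps."""
    zone = security_zone(significant(coeffs, eps), j_max)
    enlarged = {lam: coeffs.get(lam, 0.0) for lam in zone}
    advanced = evolve(enlarged)          # coefficients at t^{n+1}
    return {lam: c for lam, c in advanced.items() if abs(c) > eps}


# Usage with a toy "integrator" that simply doubles every coefficient.
coeffs = {(2, 1): 1.0, (3, 5): 0.001}
print(adaptive_step(coeffs, 0.01, 3,
                    lambda c: {lam: 2.0 * v for lam, v in c.items()}))
# {(2, 1): 2.0}
```

Zone entries start from zero, so they survive the second thresholding only if the integrator actually moves energy into them, which is exactly how the retained set follows the solution.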

Evaluation of the nonlinear term For the evaluation of the nonlinear term $f(u^n)$, where the wavelet coefficients $\tilde{u}^n_\lambda$ are given, there are two possibilities:

Evaluation in wavelet coefficient space. As an illustration, we consider a quadratic nonlinear term, $f(u) = u^2$. The wavelet coefficients of $f$ can be calculated using the connection coefficients, that is, one has to calculate the bilinear expression

$$\sum_{\lambda'}\sum_{\lambda''} \tilde{u}_{\lambda'}\, I_{\lambda,\lambda',\lambda''}\, \tilde{u}_{\lambda''}$$

with the interaction tensor $I_{\lambda,\lambda',\lambda''} = \langle \psi_\lambda \psi_{\lambda'}, \psi_{\lambda''} \rangle$. Although many coefficients of $I$ are zero or very small, the size of $I$ leads to a computation which is quite intractable in practice.
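To make the cost concrete, the sketch below (an illustration, not the authors' method) replaces $\psi_\lambda$ by a discrete orthonormal Haar basis of $\mathbb{R}^N$ with the Euclidean inner product and pointwise products. With these assumptions the bilinear sum reproduces the coefficients of $u^2$ exactly, but the tensor already has $N^3$ entries for $N$ basis functions.

```python
import math


def haar_basis(J):
    """Orthonormal discrete Haar basis of R^N, N = 2**J (scaling vector first)."""
    N = 2 ** J
    basis = [[1.0 / math.sqrt(N)] * N]
    for j in range(J):
        L = N // 2 ** j                     # support length on level j
        for k in range(2 ** j):
            v = [0.0] * N
            for i in range(k * L, k * L + L // 2):
                v[i] = 1.0 / math.sqrt(L)
            for i in range(k * L + L // 2, (k + 1) * L):
                v[i] = -1.0 / math.sqrt(L)
            basis.append(v)
    return basis


def project(basis, f):
    """Coefficients <psi_l, f> of a grid function f."""
    return [sum(b_i * f_i for b_i, f_i in zip(b, f)) for b in basis]


def interaction_tensor(basis):
    """I[l][m][n] = <psi_l psi_m, psi_n>: N**3 entries for N basis vectors."""
    N = len(basis)
    return [[[sum(a * b * c for a, b, c in zip(basis[l], basis[m], basis[n]))
              for n in range(N)]
             for m in range(N)]
            for l in range(N)]


def square_coeffs(I, u_hat):
    """Wavelet coefficients of u**2 from those of u via the bilinear sum."""
    N = len(u_hat)
    return [sum(u_hat[m] * I[l][m][n] * u_hat[n]
                for m in range(N) for n in range(N))
            for l in range(N)]


basis = haar_basis(3)
u = [1.0, 2.0, 3.0, 4.0, 4.0, 3.0, 2.0, 1.0]
u_hat = project(basis, u)
via_tensor = square_coeffs(interaction_tensor(basis), u_hat)
direct = project(basis, [x * x for x in u])
```

Even for this toy size the triple loop costs $N^4$ multiplications to build $I$, which illustrates why the coefficient-space evaluation is abandoned in favour of the physical-space approach.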

Evaluation in physical space. This approach is similar to the pseudospectral evaluation of the nonlinear terms used in spectral methods; therefore it is called the pseudowavelet technique. The advantage of this scheme is that general nonlinear terms, for example, $f(u) = (1-u)\,e^{-C/u}$, can be treated more easily. The method can be summarized as follows: starting from the significant wavelet coefficients, $|\tilde{u}_\lambda| > \varepsilon$, one reconstructs $u$ on a locally refined grid and gets $u(x_\lambda)$. Then one can evaluate $f(u(x_\lambda))$ pointwise, and the wavelet coefficients $\tilde{f}_\lambda$ are calculated using the adaptive decomposition.
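A minimal sketch of the pseudowavelet evaluation follows. It assumes an orthonormal Haar transform on a full uniform grid of $2^J$ points as a stand-in for the adaptive decomposition and reconstruction on a locally refined grid; both the Haar choice and the uniform grid are simplifications made here for brevity, and the value of $C$ in the usage lines is hypothetical.

```python
import math

SQRT2 = math.sqrt(2.0)


def haar_forward(x):
    """Orthonormal Haar DWT of a list whose length is a power of two.
    Output layout: [scaling coeff, coarsest details, ..., finest details]."""
    a, details = list(x), []
    while len(a) > 1:
        half = len(a) // 2
        s = [(a[2 * i] + a[2 * i + 1]) / SQRT2 for i in range(half)]
        d = [(a[2 * i] - a[2 * i + 1]) / SQRT2 for i in range(half)]
        details.insert(0, d)            # keep coarser levels first
        a = s
    return a + [c for level in details for c in level]


def haar_inverse(c):
    """Inverse of haar_forward."""
    a, pos = [c[0]], 1
    while pos < len(c):
        d = c[pos:pos + len(a)]
        pos += len(a)
        a = [v for s, t in zip(a, d)
             for v in ((s + t) / SQRT2, (s - t) / SQRT2)]
    return a


def pseudo_wavelet(u_hat, f, eps):
    """Coefficients of f(u): keep |u_hat| > eps, reconstruct u on the grid,
    evaluate f pointwise, and decompose the result again."""
    kept = [c if abs(c) > eps else 0.0 for c in u_hat]
    u = haar_inverse(kept)
    return haar_forward([f(v) for v in u])


# Usage with a reaction term of the form quoted in the text (C is hypothetical).
C = 0.5
f = lambda v: (1.0 - v) * math.exp(-C / v)     # requires u > 0
u = [0.1 * i + 0.05 for i in range(8)]
f_hat = pseudo_wavelet(haar_forward(u), f, eps=0.0)
```

The nonlinearity never has to be expanded in wavelet space: any pointwise function works, at the price of one reconstruction and one decomposition per evaluation.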

Finally, one computes the scalar products of the RHS of [21] with the test functions to advance the solution in time. We compute $\tilde{f}_\lambda = \langle f, \psi_\lambda \rangle$ for all indices $\lambda$ belonging to the enlarged coefficient set (white and gray regions in Figure 7).

The algorithm is of O(N) complexity, where N

denotes the number of wavelet coefficients retained

in the computation.

Application to 2D Turbulence

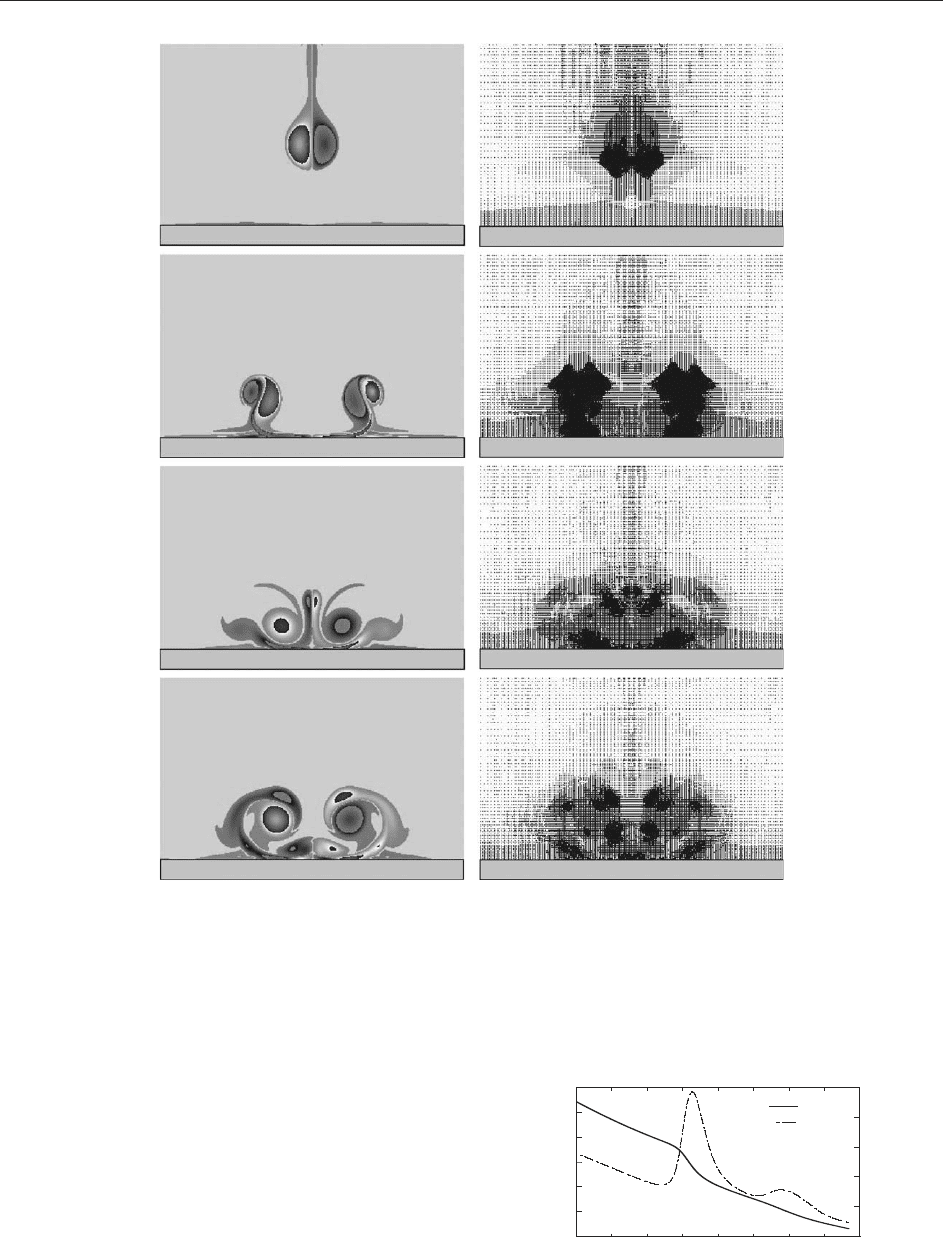

To illustrate the above algorithm, we present an adaptive wavelet computation of a vortex dipole in a square domain, impinging on a no-slip wall at Reynolds number Re = 1000. To take into account the solid wall, we use a volume penalization method, for which both the fluid flow and the solid container are modeled as a porous medium whose permeability tends towards zero in the solid region and towards infinity in the fluid.

The 2D Navier–Stokes equations are thus modified by adding the forcing term $F = -\frac{1}{\eta}\chi\, v$ in eqn [18], where $\eta$ is the penalization parameter and $\chi$ is the characteristic function whose value is 1 in the solid region and 0 elsewhere. The equations are solved using the adaptive wavelet method in a periodic square domain of size 1.1, in which the square container of size 1 is embedded, taking $\eta = 10^{-3}$. The maximal resolution corresponds to a fine grid of $1024^2$ points. Figure 8a shows snapshots of the vorticity field at times t = 0.2, 0.4, 0.6, and 0.8 (in arbitrary units). We observe that the vortex dipole moves towards the wall and that strong vorticity gradients are produced when the dipole hits the wall. The computational grid is dynamically adapted during the flow evolution, since the nonlinear wavelet filter automatically refines the grid in regions where strong gradients develop. Figure 8b shows the centers of the retained wavelet coefficients at the corresponding times.
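The penalization term itself is a pointwise operation. The sketch below evaluates $F = -\frac{1}{\eta}\chi v$ with the values quoted above; it assumes, for illustration only, that the container is centred in the periodic domain and that its boundary belongs to the solid.

```python
ETA = 1e-3        # penalization parameter eta, value taken from the text
L_DOMAIN = 1.1    # side of the periodic computational domain
L_BOX = 1.0       # side of the embedded square container


def chi(x, y):
    """Characteristic function of the solid: 1 in the wall region, 0 in the
    fluid. The container is assumed to be centred in the periodic domain."""
    lo = 0.5 * (L_DOMAIN - L_BOX)
    hi = lo + L_BOX
    in_fluid = lo < x < hi and lo < y < hi
    return 0.0 if in_fluid else 1.0


def penalization_force(x, y, vx, vy, eta=ETA):
    """Forcing term F = -(1/eta) * chi * v evaluated at one point."""
    c = chi(x, y) / eta
    return (-c * vx, -c * vy)


print(penalization_force(0.55, 0.55, 1.0, 2.0))   # inside the fluid
print(penalization_force(0.02, 0.50, 1.0, -2.0))  # inside the wall layer
```

With $\eta = 10^{-3}$ the term is stiff: an explicit scheme would need time steps of order $\eta$ or smaller, which is why penalization terms are often treated implicitly (a general remark, not a statement about the scheme used here).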

Note that during the computation only 5% out of

1024

2

wavelet coefficients are used. The time

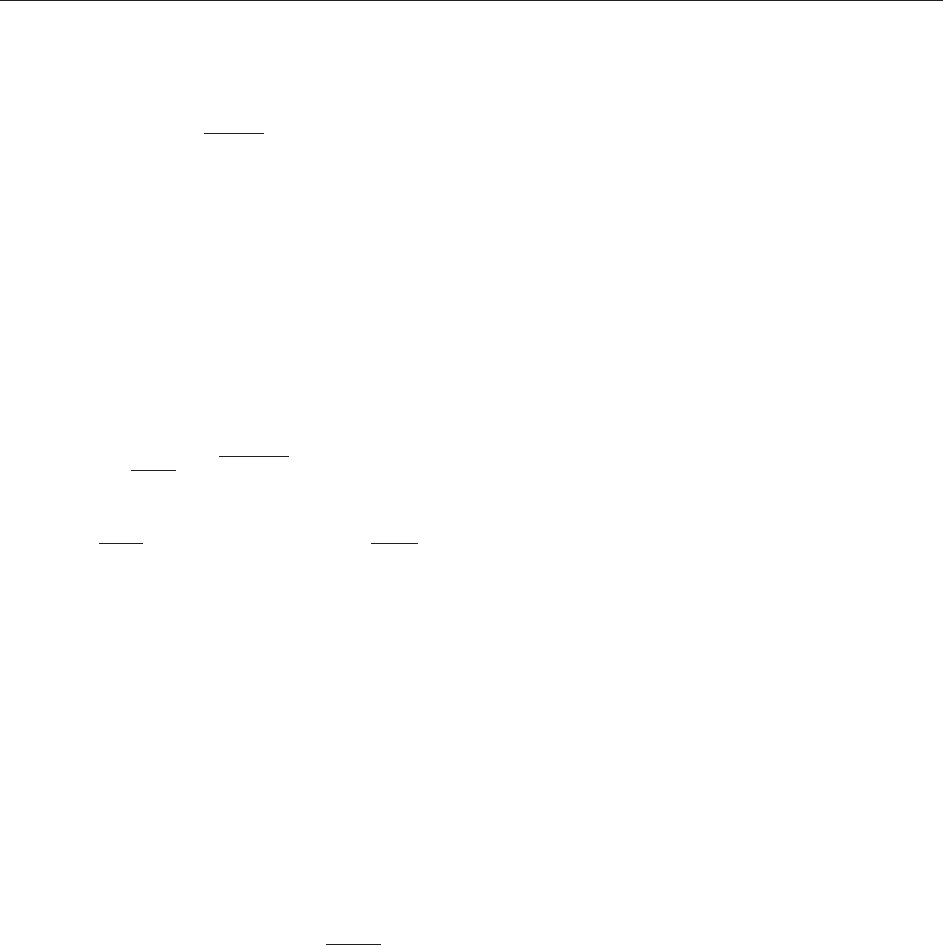

evolution of total kinetic energy and the total

enstrophy F = (

1

y

)v, are plotted in Figure 9 to

J

0

j

i

∼

|ω | > ε

Figure 7 Illustration of the dynamic adaption strategy in

wavelet coefficient space.

418 Wavelets: Application to Turbulence

show the production of enstrophy and the concomi-

tant dissipation of energy when the vortex dipole

hits the wall.

This computation illustrates the fact that the adaptive wavelet method allows automatic grid refinement, both in the boundary layers at the wall and in the shear layers which develop far from the wall during the flow evolution. Thus the number of grid points necessary for the computation is significantly reduced, and we conjecture that the resulting compression rate will increase with the Reynolds number.

Figure 8 Dipole–wall interaction at Re = 1000. (a) Vorticity field, (b) corresponding centers of the active wavelets, at t = 0.2, 0.4, 0.6, and 0.8 (from top to bottom).

Figure 9 Time evolution of energy E(t) (solid line) and enstrophy Z(t) (dashed line) for 0 ≤ t ≤ 0.8.


Acknowledgments

Marie Farge thankfully acknowledges Trinity College, Cambridge, UK, and the Centre International de Rencontres Mathématiques (CIRM), Marseille, France, for hospitality while writing this paper.

See also: Turbulence Theories; Viscous Incompressible

Fluids: Mathematical Theory; Wavelets: Applications;

Wavelets: Mathematical Theory.


Wavelets: Applications

M Yamada, Kyoto University, Kyoto, Japan

© 2006 Elsevier Ltd. All rights reserved.

Introduction

Wavelet analysis was first developed in the early 1980s in the field of seismic signal analysis, in the form of an integral transform with a localized kernel function with continuous parameters of dilation and translation. When a seismic wave or its derivative has a singular point, the integral transform has a scaling property with respect to the dilation parameter; this scaling behavior can thus be used to locate the singular point. In the mid-1980s, the first smooth orthonormal wavelet was constructed, and later the construction method was generalized and reformulated as multiresolution analysis (MRA). Since then, several kinds of wavelets have been proposed for various purposes, and the concept of wavelets has been extended to new types of basis functions. In this sense, the most important effect of wavelets may be that they have awakened deep interest in the bases employed in data analysis and data processing. Wavelets are now widely used in various fields of research; some of their applications are discussed in this article.

From the perspective of time–frequency analysis, wavelet analysis may be regarded as a windowed Fourier analysis with a variable window width, narrower for higher frequencies. Wavelets can therefore give information on the local frequency structure of an event; they have been applied to various kinds of one-dimensional (1D) and multidimensional signals, for example, to identify an event or to denoise or sharpen the signal.

1D wavelets $\psi_{(a,b)}(x)$ are defined as

$$\psi_{(a,b)}(x) = \frac{1}{\sqrt{|a|}}\,\psi\!\left(\frac{x-b}{a}\right)$$

where $a\ (\neq 0)$ and $b$ are real parameters and $\psi(x)$ is a spatially localized function called the "analyzing wavelet" or "mother wavelet." Wavelet analysis gives a decomposition of a function into a linear combination of these wavelets, where perfect reconstruction requires the analyzing wavelet to satisfy some mathematical conditions.

For the continuous wavelet transform (CWT), where the parameters $(a, b)$ are continuous, the analyzing wavelet $\psi(x) \in L^2(\mathbb{R})$ has to satisfy the admissibility condition

$$C_\psi \equiv \int_{-\infty}^{\infty} \frac{|\hat{\psi}(\omega)|^2}{|\omega|}\,d\omega < \infty$$

where $\hat{\psi}(\omega)$ is the Fourier transform of $\psi(x)$:

$$\hat{\psi}(\omega) = \int_{-\infty}^{\infty} e^{-i\omega x}\,\psi(x)\,dx$$

The admissibility condition is known to be equivalent to the condition that $\psi(x)$ has no zero-frequency component, that is, $\hat{\psi}(0) = 0$, under some mild condition on the decay rate at infinity. The CWT of a data function $f(x) \in L^2(\mathbb{R})$ and its inverse transform are then defined as

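$$T_\psi(a,b) = \frac{1}{\sqrt{C_\psi}} \int_{-\infty}^{\infty} \overline{\psi_{(a,b)}(x)}\, f(x)\,dx$$

$$f(x) = \frac{1}{\sqrt{C_\psi}} \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} T_\psi(a,b)\, \psi_{(a,b)}(x)\, \frac{da\,db}{a^2}$$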
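A direct numerical sketch of $T_\psi(a,b)$, assuming the Mexican hat wavelet $\psi(x) = (1-x^2)e^{-x^2/2}$ as an illustrative choice. With the Fourier convention above, $\hat{\psi}(\omega) = \sqrt{2\pi}\,\omega^2 e^{-\omega^2/2}$, so $C_\psi = \int 2\pi|\omega|^3 e^{-\omega^2}\,d\omega = 2\pi$, which the first function checks numerically. The quadrature is a plain midpoint rule, and the integration window is a hypothetical parameter that must cover the dilated wavelet.

```python
import math


def mexican_hat(x):
    """psi(x) = (1 - x^2) exp(-x^2/2): minus the 2nd derivative of a Gaussian."""
    return (1.0 - x * x) * math.exp(-0.5 * x * x)


def mexican_hat_ft(w):
    """Fourier transform with the convention of the text: sqrt(2 pi) w^2 e^{-w^2/2}."""
    return math.sqrt(2.0 * math.pi) * w * w * math.exp(-0.5 * w * w)


def admissibility_constant(psi_hat, w_max=20.0, n=20000):
    """C_psi = integral of |psi_hat(w)|^2 / |w| dw (midpoint rule, avoids w = 0)."""
    h = 2.0 * w_max / n
    total = 0.0
    for i in range(n):
        w = -w_max + (i + 0.5) * h
        total += abs(psi_hat(w)) ** 2 / abs(w)
    return total * h


def cwt(f, a, b, psi=mexican_hat, c_psi=2.0 * math.pi,
        x_min=-20.0, x_max=20.0, n=4000):
    """T_psi(a, b) = C_psi^{-1/2} * integral of conj(psi_(a,b)(x)) f(x) dx with
    psi_(a,b)(x) = |a|^{-1/2} psi((x - b)/a). psi is real, so no conjugate.
    [x_min, x_max] must contain the support of the dilated wavelet (~10|a|)."""
    h = (x_max - x_min) / n
    total = 0.0
    for i in range(n):
        x = x_min + (i + 0.5) * h
        total += psi((x - b) / a) * f(x)
    return total * h / (math.sqrt(abs(a)) * math.sqrt(c_psi))


print(admissibility_constant(mexican_hat_ft), 2.0 * math.pi)
print(cwt(mexican_hat, 1.0, 0.0))        # psi analyzed by itself
print(cwt(lambda x: 1.0, 2.0, 0.0))      # a constant has no wavelet content
```

The last line reflects $\hat{\psi}(0) = 0$: a zero-mean wavelet is blind to constants, which is what makes the transform sensitive only to variations of $f$.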

In the case of the discrete wavelet transform (DWT), the parameters $(a, b)$ are taken to be discrete; a typical choice is $a = 1/2^j$, $b = k/2^j$, where $j$ and $k$ are integers:

$$\psi_{j,k}(x) = 2^{j/2}\,\psi(2^j x - k)$$

In order that the wavelets $\{\psi_{j,k}(x) \mid j, k \in \mathbb{Z}\}$ may constitute a complete orthonormal system in $L^2(\mathbb{R})$, the analyzing wavelet should satisfy more stringent conditions than the admissibility condition for the CWT; such wavelets are now constructed in the framework of MRA. A data function is then decomposed by the DWT as

$$f(x) = \sum_{j,k=-\infty}^{\infty} \alpha_{j,k}\,\psi_{j,k}(x), \qquad \alpha_{j,k} = \int_{-\infty}^{\infty} \overline{\psi_{j,k}(x)}\, f(x)\,dx$$

Even when the discrete wavelets do not constitute a complete orthonormal system, they often form a wavelet frame, which is the case if linear combinations of the wavelets are dense in $L^2(\mathbb{R})$ and if there are two constants $A$, $B$ ($0 < A \le B < \infty$) such that the inequality

$$A\|f\|^2 \le \sum_{j,k} |\langle \psi_{j,k}, f \rangle|^2 \le B\|f\|^2$$

holds for an arbitrary $f(x) \in L^2(\mathbb{R})$. For the wavelet frame $\{\psi_{j,k}\}$, there is a corresponding dual frame $\{\tilde{\psi}_{j,k}\}$, which permits the following expansion of $f(x)$:

$$f(x) = \sum_{j,k} \langle \psi_{j,k}, f \rangle\, \tilde{\psi}_{j,k}(x) = \sum_{j,k} \langle \tilde{\psi}_{j,k}, f \rangle\, \psi_{j,k}(x)$$

The wavelet frame is also employed in several

applications.
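The frame inequality and the dual-frame expansion can be checked on the simplest redundant system: three unit vectors at 120° in $\mathbb{R}^2$ (a finite-dimensional toy chosen here for illustration, not a wavelet frame). This frame is tight with $A = B = 3/2$, so its dual frame is simply $\tilde{\psi}_k = \psi_k/A$.

```python
import math

# Three unit vectors at 120 degrees in R^2 (the "Mercedes-Benz" frame).
FRAME = [(math.cos(t), math.sin(t))
         for t in (math.pi / 2,
                   math.pi / 2 + 2 * math.pi / 3,
                   math.pi / 2 + 4 * math.pi / 3)]
A = B = 1.5                      # frame bounds: this frame is tight
DUAL = [(p[0] / A, p[1] / A) for p in FRAME]   # dual of a tight frame


def analysis(f, vectors=FRAME):
    """Frame coefficients <psi_k, f>."""
    return [p[0] * f[0] + p[1] * f[1] for p in vectors]


def synthesis(coeffs, vectors):
    """Linear combination sum_k coeffs[k] * vectors[k]."""
    return (sum(c * v[0] for c, v in zip(coeffs, vectors)),
            sum(c * v[1] for c, v in zip(coeffs, vectors)))


f = (0.3, -1.7)
c = analysis(f)                            # 3 numbers for a 2D vector
print(synthesis(c, DUAL))                  # f = sum <psi_k, f> psi~_k
print(synthesis(analysis(f, DUAL), FRAME)) # f = sum <psi~_k, f> psi_k
```

The three coefficients always sum to zero because the frame vectors do; that linear constraint is the redundancy exploited later for noise reduction.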

From the perspective of applications, the CWT is better adapted to the analysis of data functions, including the detection of singularities and patterns, while the DWT is better adapted to data processing, including signal compression and denoising.

Singularity Detection and Multifractal Analysis of Functions

Since its birth, wavelet analysis has been applied to the detection of singularities of a data function. The Hölder exponent $h(x_0)$ at $x_0$ of a function $f(x)$ is defined here as the largest value of the exponent $h$ such that there exists a polynomial $P_n(x)$ of degree $n$ that satisfies, for $x$ in the neighborhood of $x_0$,

$$|f(x) - P_n(x - x_0)| = O(|x - x_0|^h)$$

The data function is not differentiable if $h(x_0) < 1$, but if $h(x_0) > 1$ then it is differentiable and a singularity may arise in its higher derivatives. The wavelet transform is applied to find the Hölder exponent $h(x_0)$, because $T_\psi(a, b)$ at $b = x_0$ has the asymptotic behavior $T_\psi(a, x_0) = O(a^{h(x_0) + 1/2})$ $(a \to 0)$ if the analyzing wavelet has $N\ (> h(x_0))$ vanishing moments, that is,

$$\int_{-\infty}^{\infty} x^m\,\psi(x)\,dx = 0, \qquad m \in \mathbb{Z},\ 0 \le m < N$$

A commonly used analyzing wavelet for this purpose is the $N$th derivative of the Gaussian function, $\psi(x) = d^N(e^{-x^2/2})/dx^N$. This method works well to examine a single singular point, or a finite number of singular points, of the data function.
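A sketch of the scaling argument, assuming the second derivative of the Gaussian ($N = 2$) as analyzing wavelet and the model function $f(x) = |x|^{1/2}$, whose Hölder exponent at $x_0 = 0$ is $h = 1/2$ (both choices are illustrative). The constant $1/\sqrt{C_\psi}$ is dropped because it does not change the slope; the exponent is read off as the slope of $\log|T(a, x_0)|$ against $\log a$, minus $1/2$.

```python
import math


def gaussian_derivative_2(x):
    """psi(x) = d^2/dx^2 exp(-x^2/2) = (x^2 - 1) exp(-x^2/2); N = 2 vanishing moments."""
    return (x * x - 1.0) * math.exp(-0.5 * x * x)


def moment(psi, m, u_max=20.0, n=8000):
    """m-th moment of psi by the midpoint rule on [-u_max, u_max]."""
    h = 2.0 * u_max / n
    total = 0.0
    for i in range(n):
        u = -u_max + (i + 0.5) * h
        total += u ** m * psi(u)
    return total * h


def cwt_at(f, a, b, psi, u_max=20.0, n=8000):
    """T(a, b) up to 1/sqrt(C_psi), after the change of variable x = a*u + b
    (a > 0):  T = sqrt(a) * integral of psi(u) f(a*u + b) du."""
    h = 2.0 * u_max / n
    total = 0.0
    for i in range(n):
        u = -u_max + (i + 0.5) * h
        total += psi(u) * f(a * u + b)
    return math.sqrt(a) * total * h


def holder_estimate(f, x0, scales, psi=gaussian_derivative_2):
    """Least-squares slope of log|T(a, x0)| versus log a, minus 1/2."""
    xs = [math.log(a) for a in scales]
    ys = [math.log(abs(cwt_at(f, a, x0, psi))) for a in scales]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    return slope - 0.5


scales = [2.0 ** (-k) for k in range(3, 9)]
print(holder_estimate(lambda x: abs(x) ** 0.5, 0.0, scales))   # approximately 0.5
```

At a point where $f$ is smooth, the same estimate saturates near the number of vanishing moments $N$ rather than measuring $h$, which is why $N > h(x_0)$ is required.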

When the data function is a multifractal function with an infinite number of singular points of various strengths, its multifractal property is often characterized by the singularity spectrum $D(h)$, which denotes the Hausdorff dimension of the set of points where $h(x) = h$. The singularity spectrum is, however, difficult to obtain directly from the CWT, and the Legendre transformation is introduced to bypass this difficulty.

Fully developed 3D fluid turbulence may be a typical example of the application of wavelets to singularity detection. The Kolmogorov similarity law of fluid turbulence for the longitudinal velocity increment $\delta u(r) \equiv \mathbf{e}\cdot(\mathbf{u}(\mathbf{x} + r\mathbf{e}) - \mathbf{u}(\mathbf{x}))$, where $\mathbf{u}(\mathbf{x})$ is the velocity field and $\mathbf{e}$ is a constant unit vector, predicts a scaling property of the structure function; for $r$ in the inertial subrange,

$$\langle (\delta u(r))^p \rangle \propto r^{\zeta_p}, \qquad \zeta_p = p/3$$

where $\langle\cdot\rangle$ denotes the statistical mean. In reality, however, the scaling exponent $\zeta_p$ measured in experiments shows a systematic deviation from $p/3$, which is considered to be a reflection of intermittency, namely the spatial nonuniformity or multifractal property of active vortical motions in turbulence. For simplicity, let us consider the velocity field on a linear section of the turbulent flow field. According to the multifractal formalism, the turbulent velocity field has singularities of various strengths described by the singularity spectrum $D(h)$, which is related to the scaling exponent $\zeta_p$ through the Legendre transform, $D(h) = \inf_p (ph - \zeta_p + 1)$. This relation is often used to determine $D(h)$ from the knowledge of $\zeta_p$ (structure function method). However, this method does not necessarily work well because, for example, it does not capture singular points with Hölder exponent larger than 1, and it is unstable for $h < 0$.
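The Legendre transform can be evaluated numerically once $\zeta_p$ is known. The sketch assumes, purely for illustration, the log-normal form $\zeta_p = p/3 - \mu p(p-3)/18$ with $\mu = 0.25$. For this quadratic model the infimum can also be found by hand, $D(h) = 1 - 9(h - 1/3 - \mu/6)^2/(2\mu)$, a parabola with peak value 1; note that the numerical infimum is taken only over a finite grid of $p$.

```python
def zeta_lognormal(p, mu=0.25):
    """Hypothetical scaling exponents zeta_p = p/3 - mu p (p - 3) / 18."""
    return p / 3.0 - mu * p * (p - 3.0) / 18.0


def legendre_spectrum(h, zeta, p_grid):
    """D(h) = inf_p (p h - zeta_p + 1), with the infimum restricted to p_grid."""
    return min(p * h - zeta(p) + 1.0 for p in p_grid)


P_GRID = [-10.0 + 0.01 * i for i in range(2001)]     # p in [-10, 10]

for h in (0.25, 1.0 / 3.0, 0.375, 0.5):
    print(f"h = {h:.3f}  D(h) = {legendre_spectrum(h, zeta_lognormal, P_GRID):.4f}")
```

With $\mu = 0$ (the Kolmogorov law $\zeta_p = p/3$) the same routine returns 1 at $h = 1/3$ and values that decrease without bound elsewhere as the $p$ grid is widened, i.e., a single point instead of a spectrum.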

These difficulties are not restricted to turbulence research, but arise commonly when the structure function is employed to determine the singularity spectrum. In these problems, the CWT $T_\psi(a, b)$ provides an alternative method. An ingenious technique is to take only the modulus maxima of $T_\psi(a, b)$ (for each fixed $a$) to construct a partition function

$$Z(a, q) = \sum_{l \in L_{\max}} \left( \sup_{(a, b') \in l} |T_\psi(a, b')| \right)^q$$

where $q \in \mathbb{R}$ and $L_{\max}$ denotes the set of all maxima lines. Each maxima line is a continuous curve for small values of $a$, and there exists at least one maxima line pointing toward each singular point of Hölder exponent $h(x_0) < N$. In the limit $a \to 0$, defining the exponent $\tau(q)$ by $Z(a, q) \sim a^{\tau(q)}$, one can obtain the singularity spectrum through the Legendre transform:

$$D(h) = \inf_q \left[ q\left(h + \tfrac{1}{2}\right) - \tau(q) \right]$$

This method (the wavelet-transform modulus-maxima (WTMM) method) is advantageous in that it also works for singularities with $h > 1$ and $h < 0$. Several simple examples of multifractal functions have been successfully analyzed by this method. For fluid turbulence, this method gives a singularity spectrum $D(h)$ that has a peak value of 1 at $h \approx 1/3$, consistent with the Kolmogorov similarity law, but that spreads into a cap-shaped curve around $h = 1/3$ instead of reducing to a single point, suggesting a multifractal property. For a fractal signal, we note that the WTMM method reveals the hierarchical organization of the singularities through the branching structure of the WT skeleton, defined by the arrangement of the maxima lines in the $(a, b)$ half-plane.

Though the above discussion also applies to the DWT, the detection of the Hölder exponent $h$ in experimental situations is usually performed with the CWT, which places no restriction on the possible values of $a$, while the DWT is often employed in theoretical discussions of the singularity and multifractal structure of a function.

Multiscale Analysis

The wavelet transform expands a data function in the time–frequency or position–wavenumber space, which has twice the dimension of the original signal, and makes it easier to perform a multiscale analysis and to identify events contained in the signal. In the wavelet transform, as stated above, the time resolution is higher at higher frequencies, in contrast with the windowed Fourier transform, where the time and frequency resolutions are independent of frequency. Another advantage of wavelets is the wide variety of analyzing wavelets, which enables us to optimize the wavelet according to the purpose of the data analysis. Both the CWT and the DWT are available for such time–frequency or position–wavenumber analyses. However, the CWT has properties quite different from those of the familiar orthonormal bases of discrete wavelets.

Multidimensional CWT

The CWT can be formulated in an abstract way. We can regard $G = \{(a, b) \mid a \neq 0,\ b \in \mathbb{R}\}$ as the affine group on $\mathbb{R}$ with the group operation $(a, b)(a', b') = (aa', ab' + b)$, associated with the invariant measure $d\mu = da\,db/a^2$. The group $G$ has a unitary representation in the Hilbert space $H = L^2(\mathbb{R})$:

$$(U(a,b)f)(x) = \frac{1}{\sqrt{|a|}}\, f\!\left(\frac{x-b}{a}\right)$$

and the CWT can then be constructed as a linear map $W$ from $L^2(\mathbb{R})$ to $L^2(G; da\,db/a^2)$:

$$W: f(x) \mapsto T_\psi(a,b) = \frac{1}{\sqrt{C_\psi}}\, \langle U(a,b)\psi, f \rangle$$

where $\langle\,,\rangle$ is the inner product of $L^2(\mathbb{R})$ with the complex conjugate taken on the first element, and $\psi(x)$ is a unit vector (analyzing wavelet) satisfying the abstract admissibility condition

$$C_\psi = \int_G |\langle U(a,b)\psi, \psi \rangle|^2\, d\mu < \infty$$
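The representation property can be checked directly from the definition of $U(a,b)$. The short derivation below, added here as a worked check rather than taken from the original argument, shows that $U$ respects the group law, preserves the $L^2$ norm, and maps $\psi$ to the 1D wavelet $\psi_{(a,b)}$, so that $\langle U(a,b)\psi, f\rangle/\sqrt{C_\psi}$ coincides with the 1D transform $T_\psi(a,b)$ defined earlier.

```latex
\begin{aligned}
\bigl(U(a,b)\,U(a',b')f\bigr)(x)
  &= \frac{1}{\sqrt{|a|}}\bigl(U(a',b')f\bigr)\!\left(\frac{x-b}{a}\right)
   = \frac{1}{\sqrt{|a a'|}}\, f\!\left(\frac{(x-b)/a - b'}{a'}\right) \\
  &= \frac{1}{\sqrt{|a a'|}}\, f\!\left(\frac{x-(ab'+b)}{aa'}\right)
   = \bigl(U(aa',\,ab'+b)f\bigr)(x), \\
\|U(a,b)f\|^2
  &= \frac{1}{|a|}\int_{-\infty}^{\infty}\left|f\!\left(\frac{x-b}{a}\right)\right|^2 dx
   = \int_{-\infty}^{\infty}|f(y)|^2\,dy = \|f\|^2
   \qquad (x = ay + b), \\
U(a,b)\psi &= \psi_{(a,b)}, \qquad
\frac{1}{\sqrt{C_\psi}}\langle U(a,b)\psi, f\rangle
  = \frac{1}{\sqrt{C_\psi}}\int_{-\infty}^{\infty}\overline{\psi_{(a,b)}(x)}\,f(x)\,dx .
\end{aligned}
```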

This formulation is also applicable to a locally compact group $G$ and its unitary, square-integrable representation in a Hilbert space $H$. Note that even the canonical coherent states are included in this framework, by taking the Weyl–Heisenberg group and $L^2(\mathbb{R})$ for $G$ and $H$, respectively. This abstract formulation allows us to extend the CWT to higher-dimensional Euclidean spaces and other manifolds: for example, to the 2D sphere $S^2$ for geophysical applications, and to the 4D spacetime manifold, taking the Poincaré group into consideration.

In $\mathbb{R}^n$, the CWT of $f(x) \in L^2(\mathbb{R}^n)$ and its inverse transform are given by

$$T_\psi(a,r,b) = \frac{1}{\sqrt{C_\psi}} \int_{\mathbb{R}^n} \overline{\psi_{(a,r,b)}(x)}\, f(x)\,dx$$

$$f(x) = \frac{1}{\sqrt{C_\psi}} \int_G T_\psi(a,r,b)\, \psi_{(a,r,b)}(x)\, \frac{da\,dr\,db}{a^{n+1}}$$

where $r \in SO(n)$, $b \in \mathbb{R}^n$, $dr$ is the normalized invariant measure on $SO(n)$, and the wavelets are defined as $\psi_{(a,r,b)}(x) = a^{-n/2}\,\psi(r^{-1}(x - b)/a)$, with the analyzing wavelet satisfying the admissibility condition

$$C_\psi = \int_{\mathbb{R}^n} \frac{|\hat{\psi}(w)|^2}{|w|^n}\, dw < \infty$$

Note that these wavelets are constructed not only by dilation and translation but also by rotation, which makes directional pattern detection in a data function possible. In the case of the 2D sphere $S^2$, on the other hand, the dilation operation should be reinterpreted: at the North Pole, for example, it is the usual dilation in the tangent plane, followed by lifting to $S^2$ by the stereographic projection from the South Pole.

Generally, the abstract map $W$ thus defined is injective and therefore invertible on its range, but, in contrast with the Fourier case, it is not surjective. Indeed, in the case of the 1D CWT, $T_\psi(a, b)$ is subject to an integral condition:

$$T_\psi(a,b) = \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} \frac{da'\,db'}{a'^2}\, K(a,b;a',b')\, T_\psi(a',b')$$

$$K(a,b;a',b') = \frac{1}{C_\psi}\int_{-\infty}^{\infty} \overline{\psi_{(a,b)}(x)}\, \psi_{(a',b')}(x)\,dx$$

which defines the range of the CWT, a proper subspace of $L^2(\mathbb{R}^2; da\,db/a^2)$. Therefore, if one wants to modify $T_\psi(a, b)$, for example, by setting its value to zero in some parameter region as in a filtering process, care should be taken that the resulting $T_\psi(a, b)$ lies in the image of the CWT. The reason may be understood intuitively by noticing that the wavelets $\psi_{(a,b)}(x)$ are linearly dependent on each other. The expression of a data function as a linear combination of the wavelets is therefore not unique, and thus is redundant. The CWT gives only the $T_\psi(a, b)$ of least norm in $L^2(\mathbb{R}^2; da\,db/a^2)$. In physical interpretations of the CWT, however, this nonuniqueness is often ignored.

Pattern Detection

Edge detection The edges of an object are often its most important components for pattern detection. An edge may be considered to consist of points of sharp transition of image intensity. At an edge, the modulus of the gradient of the image $f(x, y)$ is expected to take a local maximum in the 1D direction perpendicular to the edge. Therefore, the local maxima of $|\nabla f(x, y)|$ may indicate the edge. However, image textures can also give similar sharp transitions of $f(x, y)$, and one should take into account the scale dependence, which distinguishes between edges and textures. One practical way to do this is to use dyadic wavelets $\psi^m_j(x, y) = 2^j\psi^m(2^j x, 2^j y)$, which are generated from the two wavelets $(\psi^1, \psi^2) = (\partial\theta/\partial x, \partial\theta/\partial y)$, where $\theta$ is a localized function (multiscale edge detection method). The dyadic wavelet transform of the image $f(x, y)$,

$$T^m_j(b_1, b_2) = \langle f(x,y), \psi^m_j(x - b_1, y - b_2) \rangle, \qquad m = 1, 2$$

defines the multiscale edges as the set of points $b = (b_1, b_2)$ where the modulus of the wavelet transform, $|(T^1_j, T^2_j)|$, takes a local maximum (WTMM) in a 1D neighborhood of $b$ in the direction of $(T^1_j(b), T^2_j(b))$. The scale dependence of the magnitude of the modulus maxima is related to the Hölder exponent of $f(x, y)$, similarly to the 1D case, and thus gives information to distinguish between edges and textures.
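A one-dimensional sketch of the multiscale edge criterion along a line perpendicular to an edge, assuming the Gaussian $\theta(u) = e^{-u^2/2}$ as smoothing function and an ideal step as the edge (illustrative choices). With the normalization $2^j\theta'(2^j x)$, the 1D analogue of the dyadic wavelets above, the modulus maximum of a step stays at the edge with the same height at every scale $j$, consistent with a Hölder exponent of 0 for a jump.

```python
import math


def theta_prime(u):
    """Derivative of the smoothing function theta(u) = exp(-u^2/2)."""
    return -u * math.exp(-0.5 * u * u)


def dyadic_transform(f_vals, xs, j):
    """T_j(b) = sum_x f(x) 2^j theta'(2^j (x - b)) dx at every grid point b."""
    h = xs[1] - xs[0]
    s = 2.0 ** j
    return [h * sum(fv * s * theta_prime(s * (x - b))
                    for fv, x in zip(f_vals, xs))
            for b in xs]


def modulus_maxima(t, rel_tol=0.1):
    """Interior indices where |T| is a local maximum along the line and
    exceeds rel_tol times the largest |T| (discards round-off maxima)."""
    peak = max(abs(v) for v in t)
    return [i for i in range(1, len(t) - 1)
            if abs(t[i]) > abs(t[i - 1]) and abs(t[i]) >= abs(t[i + 1])
            and abs(t[i]) > rel_tol * peak]


xs = [-1.0 + 0.01 * i for i in range(201)]
f_vals = [0.0] * 100 + [0.5] + [1.0] * 100        # unit step at x = 0
for j in (2, 3, 4):
    t = dyadic_transform(f_vals, xs, j)
    print(j, modulus_maxima(t), round(abs(t[100]), 3))
```

In 2D the same test is applied along the direction of $(T^1_j, T^2_j)$; a texture or a smoother transition would instead produce maxima whose height changes with $j$.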

Conversely, the information of the WTMM $b_{j,p} = \{(b_{1,j,p}, b_{2,j,p})\}$ of multiscale edges can be used for an approximate reconstruction of the original image, although perfect reconstruction cannot be expected because of the noncompleteness of the modulus-maxima wavelets. Assuming that $\{\psi^1_{j,p}, \psi^2_{j,p}\} = \{\psi^1_j(x - b_{j,p}), \psi^2_j(x - b_{j,p})\}$ constitutes a frame of the closed linear space generated by $\{\psi^1_{j,p}, \psi^2_{j,p}\}$, an approximate image $\hat{f}$ is obtained by inverting the relation

$$L\hat{f} \equiv \sum_m \sum_{j,p} \langle \hat{f}, \psi^m_{j,p} \rangle\, \psi^m_{j,p} = \sum_m \sum_{j,p} T^m_j(b_{j,p})\, \psi^m_{j,p}$$

using, for example, a conjugate gradient algorithm, where fast calculation is possible with a filter bank algorithm for the dyadic wavelet ("algorithme à trous"). This algorithm gives only the solution of minimum norm among all possible solutions, but it is often satisfactory for practical purposes and is thus also applicable to data compression.

Directional detection To detect oriented features such as segments or edges in images, a directionally selective wavelet for the CWT is desirable. A useful wavelet for this purpose is one whose Fourier transform has its effective support in a convex cone with apex at the origin in wavenumber space. A typical example of a directional wavelet is the 2D Morlet wavelet:

$$\psi(\mathbf{x}) = \exp(i\mathbf{k}_0\cdot\mathbf{x})\,\exp(-|A\mathbf{x}|^2)$$

where $\mathbf{k}_0$ is the center of the support in Fourier space and $A$ is the $2 \times 2$ matrix $\mathrm{diag}[\epsilon^{-1/2}, 1]$ ($\epsilon \ge 1$); the admissibility condition for the CWT is approximately satisfied for $|\mathbf{k}_0| \ge 5$. Another example is the Cauchy wavelet, whose Fourier transform has its support strictly inside a convex cone in wavenumber space.
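A minimal sketch of directional selectivity, assuming $\mathbf{k}_0 = (6, 0)$ and $\epsilon = 2$ (illustrative values satisfying $|\mathbf{k}_0| \ge 5$ and $\epsilon \ge 1$) and a plane wave at 60° as the test signal. The rotated, dilated wavelet follows $\psi_{(a,r,b)}(x) = a^{-n/2}\psi(r^{-1}(x-b)/a)$ with $n = 2$; the constant $1/\sqrt{C_\psi}$ is omitted and the integral is a plain Riemann sum on a truncated square, both simplifications made here.

```python
import cmath
import math


def morlet_2d(x, y, k0=6.0, eps=2.0):
    """exp(i k0 . x) exp(-|A x|^2) with k0 = (k0, 0), A = diag(eps**-0.5, 1)."""
    return cmath.exp(1j * k0 * x) * math.exp(-(x * x / eps + y * y))


def wavelet_2d(x, y, a, theta, b=(0.0, 0.0), **kw):
    """a^{-1} psi(r^{-1}(x - b) / a), where r is the rotation by theta."""
    dx, dy = x - b[0], y - b[1]
    c, s = math.cos(theta), math.sin(theta)
    u = (c * dx + s * dy) / a            # r^{-1} = rotation by -theta
    v = (-s * dx + c * dy) / a
    return morlet_2d(u, v, **kw) / a


def cwt_2d(f, a, theta, half_width=5.0, step=0.1, **kw):
    """|T(a, theta, b = 0)| up to 1/sqrt(C_psi), midpoint sum on a square."""
    n = int(round(2 * half_width / step))
    total = 0j
    for i in range(n):
        x = -half_width + (i + 0.5) * step
        for j in range(n):
            y = -half_width + (j + 0.5) * step
            total += wavelet_2d(x, y, a, theta, **kw).conjugate() * f(x, y)
    return abs(total) * step * step


K, phi = 6.0, math.pi / 3               # plane wave travelling at 60 degrees
wave = lambda x, y: math.cos(K * (x * math.cos(phi) + y * math.sin(phi)))
thetas = [i * math.pi / 12 for i in range(12)]
resp = [cwt_2d(wave, 1.0, t) for t in thetas]
best = max(range(12), key=lambda i: resp[i])
print(math.degrees(thetas[best]))       # orientation of the strongest response
```

Scanning $\theta$ only over $[0, \pi)$ avoids the mirror response at $\theta = \phi + \pi$ that the real-valued cosine also produces.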

These wavelets have directional selectivity, with a preference for slender objects in a specific direction. One of their applications is the analysis of the velocity field of fluid motion from experimental data, where many tiny plastic balls distributed in the fluid give many line segments in a picture taken with a short exposure. Directional wavelet analysis of the picture classifies the line segments according to their directions, indicating the directions of the fluid velocity. Another example is wave-field analysis, where many waves traveling in different directions are superimposed; directional wavelets allow one to decompose the wave field into its component waves. Directional wavelets have also been applied successfully to detect the symmetry of objects such as crystals or quasicrystals.

Denoising and separation of signals The wavelet frame, as well as the CWT, gives a redundant representation of a data function. If the redundant expression is transmitted instead of the original data, the redundancy can be used to reduce the noise in the received data: the redundancy requires the data to belong to a subspace, and projecting the received data onto that subspace removes the noise component orthogonal to it. More specifically, the wavelet frame gives a representation of a data function as $f(x) = \sum_{j,k} \alpha_{j,k}\,\tilde{\psi}_{j,k}(x)$, where the expansion coefficients $\alpha_{j,k} = \langle \psi_{j,k}, f \rangle$ satisfy the defining equation of the subspace

$$\alpha_{j',k'} = \sum_{j,k} \langle \psi_{j',k'}, \tilde{\psi}_{j,k} \rangle\, \alpha_{j,k}$$

If the frame coefficients are transmitted, the projection operator $P$, defined by the right-hand side of the above equation, reduces the noise in the received coefficients $\alpha_{j,k}$ that were contaminated during transmission.
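A toy version of this noise reduction, assuming a finite frame in $\mathbb{R}^4$ made of two orthonormal bases (the standard basis and a normalized Hadamard basis) rather than a wavelet frame. Such a union is a tight frame with $A = B = 2$, so $\tilde{\psi}_k = \psi_k/2$ and $P$ is the orthogonal projection onto the 4-dimensional range of the 8 coefficients; on average it removes half of the energy of white channel noise. The noise level and seed are arbitrary.

```python
import random

# Standard basis of R^4 plus the normalized Hadamard basis: two orthonormal
# bases, hence a tight frame of 8 vectors with bounds A = B = 2.
HADAMARD = [[1, 1, 1, 1], [1, -1, 1, -1], [1, 1, -1, -1], [1, -1, -1, 1]]
FRAME = ([[1.0 if i == k else 0.0 for i in range(4)] for k in range(4)]
         + [[h / 2.0 for h in row] for row in HADAMARD])
A = 2.0


def analysis(f):
    """Frame coefficients alpha_k = <psi_k, f>."""
    return [sum(p * x for p, x in zip(psi, f)) for psi in FRAME]


def synthesis_dual(alpha):
    """f = sum_k alpha_k psi~_k with the dual frame psi~_k = psi_k / A."""
    return [sum(a * psi[i] for a, psi in zip(alpha, FRAME)) / A
            for i in range(4)]


def project(alpha):
    """(P alpha)_k' = sum_k <psi_k', psi~_k> alpha_k: back onto the range."""
    return analysis(synthesis_dual(alpha))


# Usage: transmit redundant coefficients, add channel noise, then project.
rng = random.Random(0)
f = [1.0, -2.0, 0.5, 3.0]
alpha = analysis(f)
received = [a + rng.gauss(0.0, 0.3) for a in alpha]
cleaned = project(received)
err = lambda u: sum((x - y) ** 2 for x, y in zip(u, alpha))
print(err(received), err(cleaned))
```

Because $P$ is an orthogonal projection and the true coefficients already lie in its range, the projected coefficients can never be farther from them than the received ones.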

However, this method is not applicable if the transmitted signal is not redundant. Then some a priori criterion is necessary to discriminate between signal and noise, and various criteria have been proposed in different fields. If the signal and the noise, or several superposed signals, have power-law spectra with different exponents, they may be discriminated by the DWT in the higher-frequency region, where the difference in the magnitude of the coefficients is significant. In this approach, wavelets of Meyer type, that is, orthogonal wavelets with compact support in Fourier space, may be preferable because wavelets of different scales are separated, at least to some extent, in Fourier space.

In fluid dynamics, the vorticity field of 2D turbulence has been decomposed into coherent and incoherent vorticity fields, according to whether the CWT is larger than a threshold value or not. These two fields give different Fourier spectra of the velocity field ($k^{-5}$ for the coherent part and $k^{-3}$ for the incoherent part), showing that the coherent structures are responsible for the deviation from the $k^{-3}$ spectrum predicted by the classical enstrophy cascade theory. In astronomical applications, on the other hand, the data processing is performed by a more sophisticated method that takes into account interscale relations in the wavelet transform, because an astronomical image contains various kinds of objects, including stars, double stars, galaxies, nebulae, and clusters. In medical images, contrast analysis is indispensable for diagnostic imaging to obtain a clear, detailed picture of organic structures. A scale-dependent local contrast is defined as the ratio of the CWT to that given by an analyzing wavelet with a larger support, and a multiplicative scheme to improve the contrast is constructed from this local contrast.
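A sketch of a coherent/incoherent split by thresholding orthonormal wavelet coefficients. It assumes the Haar basis, a 1D piecewise-constant test signal instead of a 2D vorticity field, and the "universal" threshold $\sigma\sqrt{2\ln N}$ of Donoho and Johnstone for Gaussian white noise of known $\sigma$; these are illustrative choices, not the procedure of the studies quoted above. Because the basis is orthonormal and the two parts use disjoint sets of coefficients, their energies add up to the total.

```python
import math
import random

SQRT2 = math.sqrt(2.0)


def haar_forward(x):
    """Orthonormal Haar DWT (length a power of two), coarse levels first."""
    a, details = list(x), []
    while len(a) > 1:
        half = len(a) // 2
        s = [(a[2 * i] + a[2 * i + 1]) / SQRT2 for i in range(half)]
        d = [(a[2 * i] - a[2 * i + 1]) / SQRT2 for i in range(half)]
        details.insert(0, d)
        a = s
    return a + [c for level in details for c in level]


def haar_inverse(c):
    """Inverse of haar_forward."""
    a, pos = [c[0]], 1
    while pos < len(c):
        d = c[pos:pos + len(a)]
        pos += len(a)
        a = [v for s, t in zip(a, d)
             for v in ((s + t) / SQRT2, (s - t) / SQRT2)]
    return a


def split_coherent(signal, threshold):
    """Coherent part: coefficients with modulus above threshold.
    Incoherent part: everything else. Returns both in physical space."""
    c = haar_forward(signal)
    strong = [v if abs(v) > threshold else 0.0 for v in c]
    weak = [v - s for v, s in zip(c, strong)]
    return haar_inverse(strong), haar_inverse(weak)


rng = random.Random(1)
N, sigma = 256, 0.1
clean = [0.0] * 64 + [1.0] * 64 + [-0.5] * 64 + [0.5] * 64
noisy = [v + rng.gauss(0.0, sigma) for v in clean]
coherent, incoherent = split_coherent(noisy, sigma * math.sqrt(2.0 * math.log(N)))
mse = lambda u: sum((x - y) ** 2 for x, y in zip(u, clean)) / N
print(mse(noisy), mse(coherent))
```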

Signal Compression

Signal compression is a very important technology in digital communication. Speech, audio, image, and digital video are all important fields of signal compression, and many compression methods have been put to practical use, but we mention here only a few.

The MRA for orthogonal wavelets gives a successive procedure to decompose a subspace of $L^2(\mathbb{R})$ into a direct sum of two subspaces corresponding to higher- and lower-frequency parts, only the latter of which is decomposed again into its higher- and lower-frequency parts. Algebraically, this procedure was known before the discovery of MRA in the filter theory of electrical engineering, where a discretely sampled signal is convolved with a filter series to give, for example, a high-pass-filtered or low-pass-filtered series. An appropriately designed pair of high-pass and low-pass filters followed by downsampling yields two new series corresponding to the higher- and lower-frequency parts, respectively, which can then be inverted by another two reconstruction filters with upsampling. These four filters, which are employed in the widely used technique of "subband coding," constitute a perfect reconstruction filter bank. Under some conditions, successive applications of this decomposition process to the series of lower-frequency parts, which is equivalent to the nested structure of MRA, have been used for data compression (quadrature mirror filters). A famous example is the FBI data compression system for fingerprints, which consists of wavelet coding with scalar quantization.
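A sketch of one stage of a two-channel perfect reconstruction filter bank, assuming the Daubechies four-tap orthonormal filters and periodic extension of a finite signal (choices made here; the text does not specify filters). The high-pass filter is obtained from the low-pass one by the alternating flip $H_1[k] = (-1)^k H_0[3-k]$; because the resulting analysis operator is orthogonal, synthesis is simply its transpose, i.e., upsampling followed by filtering.

```python
import math

S2, S3 = math.sqrt(2.0), math.sqrt(3.0)
# Daubechies 4-tap orthonormal low-pass filter.
H0 = [(1 + S3) / (4 * S2), (3 + S3) / (4 * S2),
      (3 - S3) / (4 * S2), (1 - S3) / (4 * S2)]
# High-pass filter by the alternating flip H1[k] = (-1)^k H0[3 - k].
H1 = [(-1) ** k * H0[3 - k] for k in range(4)]


def analyze(x):
    """Filter and downsample by 2 with periodic extension; len(x) even, >= 4."""
    n = len(x)
    low = [sum(h * x[(2 * i + k) % n] for k, h in enumerate(H0))
           for i in range(n // 2)]
    high = [sum(h * x[(2 * i + k) % n] for k, h in enumerate(H1))
            for i in range(n // 2)]
    return low, high


def synthesize(low, high):
    """Upsample by 2 and filter: the transpose of analyze, which is also its
    inverse because the analysis operator is orthogonal."""
    n = 2 * len(low)
    y = [0.0] * n
    for i in range(len(low)):
        for k in range(4):
            y[(2 * i + k) % n] += H0[k] * low[i] + H1[k] * high[i]
    return y


x = [4.0, 1.0, -2.0, 3.0, 0.5, 0.0, -1.0, 2.5]
low, high = analyze(x)
print(synthesize(low, high))            # reproduces x up to round-off
```

Feeding `low` back into `analyze` repeatedly gives the nested MRA cascade described above; applying it to `high` as well gives the wavelet packet decomposition.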

In MRA, however, only the lower-frequency parts are successively decomposed. If both the lower- and the higher-frequency parts are repeatedly decomposed by the decomposition filters, then the successive convolution processes correspond to a decomposition of the data function by a set of wavelet-like functions called "wavelet packets," with a choice at each step of whether to decompose the higher- and/or the lower-frequency parts. The best wavelet packet basis, in the sense of entropy, for example, within a specified number of decompositions, often provides a powerful tool for data compression in several areas, including speech analysis and image analysis. We also note that, from the viewpoint of the best basis that minimizes the statistical mean square error of the thresholded coefficients, an orthonormal wavelet basis gives a good concentration of energy if the original signal is a piecewise smooth function with superimposed white noise, which is thus efficiently removed by thresholding the coefficients. The efficiency of a wavelet expansion of a signal is sometimes evaluated with the entropy of the "probability" defined as $p_{j,k} = |\alpha_{j,k}|^2/\|f\|^2$. A better wavelet can be selected by reducing the entropy, in practice from among some set of wavelets, and a restricted set of its expansion coefficients gives a compressed signal. Wavelet packets also provide one systematic method of generating such a suitable basis.
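A sketch of entropy-based basis selection, assuming the Shannon form $-\sum p \log p$ for the "probability" $p_{j,k}$ defined above (the text does not fix the functional form) and a candidate set of just two orthonormal bases, the identity (sample values) and Haar. A wavelet packet best-basis search applies the same comparison node by node in the packet tree; that search is not shown here.

```python
import math

SQRT2 = math.sqrt(2.0)


def haar_forward(x):
    """Orthonormal Haar DWT (length a power of two), coarse levels first."""
    a, details = list(x), []
    while len(a) > 1:
        half = len(a) // 2
        s = [(a[2 * i] + a[2 * i + 1]) / SQRT2 for i in range(half)]
        d = [(a[2 * i] - a[2 * i + 1]) / SQRT2 for i in range(half)]
        details.insert(0, d)
        a = s
    return a + [c for level in details for c in level]


def entropy(coeffs):
    """-sum p log p with p_k = |alpha_k|^2 / ||f||^2 (zero terms skipped)."""
    energy = sum(c * c for c in coeffs)
    probs = [c * c / energy for c in coeffs if c != 0.0]
    return -sum(p * math.log(p) for p in probs)


def best_basis(signal, transforms):
    """Name of the transform whose coefficients have the lowest entropy."""
    return min(transforms, key=lambda name: entropy(transforms[name](signal)))


BASES = {"identity": list, "haar": haar_forward}
print(best_basis([1.0] * 8, BASES))                     # haar
print(best_basis([0, 0, 0, 5.0, 0, 0, 0, 0], BASES))    # identity
```

A constant signal puts all its energy in one Haar coefficient (entropy 0), while an isolated spike is already maximally concentrated in the sample basis; the entropy criterion picks the basis in which the signal is most compressible.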

Numerical Calculation

The application of wavelet transforms, especially of the DWT, to numerical solvers for differential equations (DEs) has long been studied. At first sight, wavelets appear to give a good DE solver because the wavelet expansion is generally quite efficient compared to a Fourier series, owing to its spatial localization. But its implementation in efficient computer code is not so straightforward, and research is still continuing on concrete problems. The application of the CWT to spectral methods for partial differential equations (PDEs) has been studied extensively. There is no wavelet that diagonalizes the differential operator $\partial/\partial x$; therefore, an efficient numerical method is necessary for derivatives of wavelets. Products of wavelets pose another numerical problem. MRA yields mesh points that are adaptive to some extent, but the finite element method still gives more flexible mesh points.

For some scale-invariant differential or integral operators, including $\partial^2/\partial x^2$, Abel transformations, and Riesz potentials, suitably adapted biorthogonal wavelets yield block-diagonal Galerkin representations, which have been applied to data processing. More generally, the simultaneous localization of wavelets in space and in scale leads to sparse Galerkin representations for many pseudodifferential operators and their inverses. A thresholding technique based on the DWT has been introduced in coherent vortex simulation of the 2D Navier–Stokes equations to reduce the number of relevant wavelet coefficients. Another promising application of wavelets is as a preprocessor for iterative Poisson solvers, where wavelet-based preconditioning leads to a matrix with a bounded condition number.
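A short computation shows how scale invariance enters the Galerkin representation of $\partial^2/\partial x^2$. Assume, as notation introduced here for illustration, a dyadic family $\psi_{j,k}(x) = 2^{j/2}\psi(2^j x - k)$ with a twice-differentiable $\psi$. For two wavelets on the same level $j$, the substitution $y = 2^j x$ gives

$$
\begin{aligned}
\left\langle \partial_x^2 \psi_{j,k},\, \psi_{j,l} \right\rangle
 &= \int_{-\infty}^{\infty} 2^{j/2}\, 2^{2j}\, \psi''(2^j x - k)\; 2^{j/2}\, \psi^*(2^j x - l)\, dx \\
 &= 2^{2j} \int_{-\infty}^{\infty} \psi''(y - k)\, \psi^*(y - l)\, dy \\
 &= 2^{2j} \left\langle \partial_x^2 \psi_{0,0},\, \psi_{0,\,l-k} \right\rangle .
\end{aligned}
$$

Each level-$j$ block is thus the level-0 block multiplied by $2^{2j}$. Rescaling rows and columns with the diagonal matrix $D = \mathrm{diag}(2^{-j})$ removes this growth, which is the idea behind diagonal wavelet preconditioning. That the resulting condition number stays bounded as more levels are added additionally requires the wavelets to form a stable basis of the energy space ($H^1$ here), an assumption not spelled out in the text.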

Other Wavelets and Generalizations

Several new types of wavelets have been proposed: the “coiflet,” whose scaling function has vanishing moments, so that the expansion coefficients are approximately equal to values of the data function, and the “symlet,” an orthonormal wavelet with a nearly symmetric profile. Multiwavelets use several scaling functions and wavelets simultaneously to build a complete orthonormal system of $L^2$. In 2D or multidimensional applications of the DWT, separable orthonormal wavelets, consisting of tensor products of 1D orthonormal wavelets, are frequently used, although nonseparable orthonormal wavelets are also available. Another generalization of wavelets is the Malvar basis, which also generalizes the local Fourier basis and gives perfect reconstruction. A new direction is given by second-generation wavelets, which are constructed by the lifting scheme and are free from the regular dyadic procedure; they are thus applicable to compact regions such as $S^2$ and to a finite interval.
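The lifting idea can be seen in its simplest instance, a sketch of one level of the unnormalized Haar transform written as split, predict, and update steps (the function names are hypothetical, and this regular-grid Haar case only hints at the irregular-grid constructions mentioned above). Each step is undone by running it backwards with the sign flipped, so perfect reconstruction holds by construction rather than through Fourier-domain filter conditions.

```python
def lifting_forward(x):
    """One level of the (unnormalized) Haar transform written as lifting steps."""
    if len(x) % 2:
        raise ValueError("length must be even")
    even, odd = x[0::2], x[1::2]                  # split ("lazy wavelet")
    d = [o - e for e, o in zip(even, odd)]        # predict odd from even; d = error
    s = [e + di / 2 for e, di in zip(even, d)]    # update: s = (even + odd) / 2
    return s, d


def lifting_inverse(s, d):
    """Undo the lifting steps in reverse order with the signs flipped."""
    even = [si - di / 2 for si, di in zip(s, d)]  # undo update
    odd = [di + e for e, di in zip(even, d)]      # undo predict
    x = [0.0] * (2 * len(s))
    x[0::2], x[1::2] = even, odd                  # merge
    return x


x = [2.0, 4.0, 6.0, 6.0, 1.0, 3.0]
s, d = lifting_forward(x)
print(s, d)                   # pair averages and pair differences
print(lifting_inverse(s, d))  # recovers x
```

Replacing the "predict" step by, for example, interpolation on a nonuniform grid is what frees second-generation wavelets from dyadic translation and dilation.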

See also: Fractal Dimensions in Dynamics; Image

Processing: Mathematics; Intermittency in Turbulence;

Wavelets: Application to Turbulence; Wavelets:

Mathematical Theory.

Further Reading

Benedetto JJ and Frazier MW (eds.) (1994) Wavelets: Mathematics and Applications. Boca Raton, FL: CRC Press.

van den Berg JC (ed.) (1999) Wavelets in Physics. Cambridge: Cambridge University Press.

Daubechies I (1992) Ten Lectures on Wavelets. CBMS-NSF Regional Conference Series in Applied Mathematics, vol. 61. Philadelphia: SIAM.

Mallat S (1998) A Wavelet Tour of Signal Processing. San Diego: Academic Press.

Strang G and Nguyen T (1997) Wavelets and Filter Banks. Wellesley: Wellesley-Cambridge Press.

Wavelets: Mathematical Theory

K Schneider, Université de Provence, Marseille, France

M Farge, Ecole Normale Supérieure, Paris, France

© 2006 Elsevier Ltd. All rights reserved.

Introduction

The wavelet transform unfolds functions into time (or space) and scale, and possibly directions. The continuous wavelet transform was discovered by Alex Grossmann and Jean Morlet, who published the first paper on wavelets in 1984. This mathematical technique, based on group theory and square-integrable representations, allows us to decompose a signal, or a field, into both space and scale, and possibly directions. The orthogonal wavelet transform was discovered by Lemarié and Meyer (1986). Then Daubechies (1988) found orthogonal bases made of compactly supported wavelets, and Mallat (1989) designed the fast wavelet transform (FWT) algorithm. Further developments were made in 1991 by Raffy Coifman, Yves Meyer, and Victor Wickerhauser, who introduced wavelet packets and applied them to data compression. The development of wavelets has been interdisciplinary, with contributions coming from very different fields such as engineering (sub-band coding, quadrature mirror filters, time–frequency analysis), theoretical physics (coherent states of affine groups in quantum mechanics), and mathematics (Calderón–Zygmund operators, characterization of function spaces, harmonic analysis). Many reference textbooks are available; some that we recommend are listed in the “Further reading” section. Meanwhile, a large spectrum of applications has grown and is still developing, ranging from signal analysis and image processing via numerical analysis and turbulence modeling to data compression.

In this article, we will first define the continuous

wavelet transform and then the orthogonal wavelet

transform based on a multiresolution analysis.

Properties of both transforms will be discussed

and illustrated by examples. For a general intro-

duction to wavelets, see Wavelets: Applications.

Continuous Wavelet Transform

Let us consider the Hilbert space of square-integrable functions $L^2(\mathbb{R}) = \{f : \|f\|_2 < \infty\}$, equipped with the scalar product $\langle f, g\rangle = \int_{\mathbb{R}} f(x)\, g^*(x)\, dx$ (where $^*$ denotes the complex conjugate in the case of complex-valued functions), and where the norm is defined by $\|f\|_2 = \langle f, f\rangle^{1/2}$.

Analyzing Wavelet

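The starting point for the wavelet transform is to choose a real- or complex-valued function $\psi \in L^2(\mathbb{R})$, called the “mother wavelet,” which fulfills the admissibility condition

$$C_\psi = \int_0^\infty |\hat\psi(k)|^2 \,\frac{dk}{|k|} < \infty \qquad [1]$$

where

$$\hat\psi(k) = \int_{-\infty}^{\infty} \psi(x)\, e^{-2\pi\imath k x}\, dx \qquad [2]$$

denotes the Fourier transform, with $\imath = \sqrt{-1}$ and $k$ the wave number. If $\psi$ is integrable, that is, $\psi \in L^1(\mathbb{R})$, then $\hat\psi$ is continuous, and convergence of [1] at $k = 0$ implies that $\psi$ has zero mean,

$$\int_{-\infty}^{\infty} \psi(x)\, dx = 0 \quad \text{or} \quad \hat\psi(0) = 0 \qquad [3]$$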
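Conditions [1] and [3] can be checked numerically for a concrete wavelet. The sketch below assumes the Morlet wavelet of the Examples section with the typical value $k_0 = 5$ and evaluates its Fourier transform [15] in an algebraically equivalent, overflow-free form; the function names are hypothetical.

```python
import math

K0 = 5.0  # Morlet envelope factor (typical value, see the Examples section)


def morlet_hat(k, k0=K0):
    """Fourier transform of the Morlet wavelet (eqn [15]).

    Rewritten as c * (exp(-k0^2 (k-1)^2 / 2) - exp(-k0^2 (1+k^2) / 2)), which
    is algebraically equal to eqn [15] but avoids overflow of exp(k0^2 k).
    """
    c = k0 / math.sqrt(2.0 * math.pi)
    return c * (math.exp(-0.5 * k0**2 * (k - 1.0) ** 2)
                - math.exp(-0.5 * k0**2 * (1.0 + k**2)))


def admissibility_constant(psi_hat, kmax=3.0, n=30000):
    """Midpoint-rule estimate of C_psi = int_0^inf |psi_hat(k)|^2 dk / k, eqn [1].

    The midpoint rule never evaluates k = 0; the integral is truncated at kmax,
    beyond which the Morlet spectrum is negligible.
    """
    h = kmax / n
    total = 0.0
    for i in range(n):
        k = (i + 0.5) * h
        total += abs(psi_hat(k)) ** 2 / k
    return total * h


print(morlet_hat(0.0))                     # zero mean, eqn [3]
print(admissibility_constant(morlet_hat))  # finite, so eqn [1] holds
```

Near $k = 0$ the integrand behaves like $k$, so the $1/k$ weight causes no difficulty once $\hat\psi(0) = 0$; without the correction term in [15] the integrand would grow like $1/k$ there and the integral would diverge logarithmically, although only very weakly for $k_0 = 5$.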

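In practice, however, one also requires the wavelet to be well localized in both physical and Fourier space, and often to have $M$ vanishing moments,

$$\int_{-\infty}^{\infty} x^m\, \psi(x)\, dx = 0 \quad \text{for } m = 0, \ldots, M-1 \qquad [4]$$

that is, the wavelet coefficients of polynomials of degree up to $M-1$ vanish, so that such polynomials are exactly reproduced by the coarser-scale (scaling function) part of the expansion. In Fourier space, this property is equivalent to

$$\frac{d^m}{dk^m}\hat\psi(k)\Big|_{k=0} = 0 \quad \text{for } m = 0, \ldots, M-1 \qquad [5]$$

therefore, the Fourier transform of $\psi$ vanishes smoothly at $k = 0$, behaving like $\hat\psi(k) = O(k^M)$.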
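The number of vanishing moments in [4] can be estimated by quadrature. As an illustration the sketch uses the Mexican hat wavelet $\psi(x) = (1 - x^2)e^{-x^2/2}$, which is not discussed in the text and is chosen here only as a familiar example; the helper names are hypothetical. Its moments of order 0 and 1 vanish while the second equals $-2\sqrt{2\pi}$, so $M = 2$.

```python
import math


def mexican_hat(x):
    """Mexican hat wavelet (negative second derivative of a Gaussian)."""
    return (1.0 - x * x) * math.exp(-0.5 * x * x)


def moment(psi, m, L=12.0, n=24000):
    """Trapezoid-rule estimate of int x^m psi(x) dx over [-L, L]."""
    h = 2.0 * L / n
    total = 0.0
    for i in range(n + 1):
        x = -L + i * h
        w = 0.5 if i in (0, n) else 1.0
        total += w * x**m * psi(x)
    return total * h


def vanishing_moments(psi, mmax=6, tol=1e-8):
    """Largest M such that the moments m = 0, ..., M-1 all vanish (up to tol)."""
    M = 0
    while M < mmax and abs(moment(psi, M)) < tol:
        M += 1
    return M


print(vanishing_moments(mexican_hat))  # 2
print(moment(mexican_hat, 2))          # approximately -2*sqrt(2*pi)
```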

Analysis

From the mother wavelet $\psi$, we generate a family of continuously translated and dilated wavelets,

$$\psi_{a,b}(x) = \frac{1}{\sqrt{a}}\,\psi\!\left(\frac{x-b}{a}\right) \quad \text{for } a > 0 \text{ and } b \in \mathbb{R} \qquad [6]$$

where $a$ denotes the dilation parameter, corresponding to the width of the wavelet support, and $b$ the translation parameter, corresponding to the position of the wavelet. The wavelets are normalized in energy norm, that is, $\|\psi_{a,b}\|_2 = 1$.

In Fourier space, eqn [6] reads

$$\hat\psi_{a,b}(k) = \sqrt{a}\,\hat\psi(ak)\, e^{-2\pi\imath k b} \qquad [7]$$

where the contraction by $1/a$ in [6] is reflected in a dilation by $a$ in [7], and the translation by $b$ implies a rotation (a phase shift) in the complex plane.
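Eqn [7] follows from [6] and the Fourier convention [2] by the substitution $x = ay + b$, $dx = a\,dy$. The same substitution shows that the factor $1/\sqrt{a}$ gives $\|\psi_{a,b}\|_2 = \|\psi\|_2$, so the family is energy-normalized whenever the mother wavelet satisfies $\|\psi\|_2 = 1$:

$$
\begin{aligned}
\hat\psi_{a,b}(k) &= \int_{-\infty}^{\infty} \frac{1}{\sqrt{a}}\,\psi\!\left(\frac{x-b}{a}\right) e^{-2\pi\imath k x}\, dx
 = \frac{a}{\sqrt{a}}\, e^{-2\pi\imath k b} \int_{-\infty}^{\infty} \psi(y)\, e^{-2\pi\imath (ak) y}\, dy
 = \sqrt{a}\,\hat\psi(ak)\, e^{-2\pi\imath k b}, \\
\|\psi_{a,b}\|_2^2 &= \int_{-\infty}^{\infty} \frac{1}{a}\left|\psi\!\left(\frac{x-b}{a}\right)\right|^2 dx
 = \int_{-\infty}^{\infty} |\psi(y)|^2\, dy = \|\psi\|_2^2 .
\end{aligned}
$$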

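The continuous wavelet transform of a function $f$ is then defined as the inner product of $f$ with the wavelet family $\psi_{a,b}$, which can equivalently be written as a convolution of $f$ with the reflected, complex-conjugated wavelet:

$$\tilde f(a,b) = \int_{-\infty}^{\infty} f(x)\, \psi^*_{a,b}(x)\, dx \qquad [8]$$

where $\psi^*_{a,b}$ denotes, in the case of complex-valued wavelets, the complex conjugate.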
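A direct way to evaluate [8] is quadrature over the effective support of $\psi_{a,b}$. The sketch below assumes the Morlet wavelet [14] with $k_0 = 5$ and a test signal $\cos(2\pi k_f x)$ with $k_f = 4$; the helper names, the truncation width, and the grid of scales are illustrative choices, not from the text. Scanning the scale $a$ at fixed $b$ shows the modulus peaking close to $a = 1/k_f$ (slightly above, because of the $\sqrt{a}$ factor in [7]). Practical codes usually evaluate [9] with FFTs instead of this $O(n)$-per-coefficient sum.

```python
import cmath
import math

K0 = 5.0  # Morlet envelope factor (assumed typical value)


def morlet(x, k0=K0):
    """Morlet wavelet of eqn [14]."""
    return (cmath.exp(2j * math.pi * x) - math.exp(-0.5 * k0**2)) \
        * math.exp(-2.0 * math.pi**2 * x**2 / k0**2)


def cwt_coefficient(f, a, b, half_width=8.0, n=4000):
    """Midpoint-rule estimate of eqn [8]: int f(x) conj(psi_{a,b}(x)) dx.

    psi_{a,b}(x) = psi((x - b) / a) / sqrt(a) as in eqn [6]; the integral is
    truncated to |x - b| <= half_width * a, where the Gaussian envelope of the
    Morlet wavelet is negligible.
    """
    L = half_width * a
    h = 2.0 * L / n
    total = 0j
    for i in range(n):
        x = b - L + (i + 0.5) * h
        total += f(x) * morlet((x - b) / a).conjugate() / math.sqrt(a)
    return total * h


kf = 4.0  # wave number of the test cosine


def signal(x):
    return math.cos(2.0 * math.pi * kf * x)


scales = [0.15 + 0.005 * i for i in range(41)]  # a from 0.15 to 0.35
mags = [abs(cwt_coefficient(signal, a, 0.0)) for a in scales]
a_peak = scales[mags.index(max(mags))]
print(a_peak, 1.0 / kf)  # the modulus peaks close to a = 1/kf
```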

Using Parseval's identity, we get

$$\tilde f(a,b) = \int_{-\infty}^{\infty} \hat f(k)\, \hat\psi^*_{a,b}(k)\, dk \qquad [9]$$

and the wavelet transform can be interpreted as a frequency decomposition using bandpass filters $\hat\psi_{a,b}$ centered at wave numbers $k = k_\psi/a$. The wave number $k_\psi$ denotes the barycenter of the wavelet support in Fourier space,

$$k_\psi = \frac{\int_0^\infty k\,|\hat\psi(k)|\,dk}{\int_0^\infty |\hat\psi(k)|\,dk} \qquad [10]$$

Note that these filters have a constant relative bandwidth $\Delta k/k$; therefore, when the wave number increases, the bandwidth $\Delta k$ becomes wider.
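Eqn [10] and the shift of the filter center to $k_\psi/a$ can be checked numerically. The sketch assumes the Morlet wavelet with $k_0 = 5$, whose barycenter lies very close to $k_\psi = 1$; the function names are hypothetical. Since $|\hat\psi_{a,b}(k)| = \sqrt{a}\,|\hat\psi(ak)|$ by [7], the phase and the constant $\sqrt{a}$ drop out of the ratio.

```python
import math


def morlet_hat(k, k0=5.0):
    """Morlet wavelet in Fourier space (eqn [15]) in an overflow-free form."""
    c = k0 / math.sqrt(2.0 * math.pi)
    return c * (math.exp(-0.5 * k0**2 * (k - 1.0) ** 2)
                - math.exp(-0.5 * k0**2 * (1.0 + k**2)))


def barycenter(psi_hat, kmax=4.0, n=40000):
    """Midpoint-rule estimate of eqn [10]:
    k_psi = int_0^inf k |psi_hat(k)| dk / int_0^inf |psi_hat(k)| dk."""
    h = kmax / n
    num = den = 0.0
    for i in range(n):
        k = (i + 0.5) * h
        w = abs(psi_hat(k))
        num += k * w
        den += w
    return num / den


k_psi = barycenter(morlet_hat)
a = 2.0
# |psi_hat_{a,b}(k)| = sqrt(a) |psi_hat(a k)|; sqrt(a) cancels in the ratio.
k_a = barycenter(lambda k: math.sqrt(a) * morlet_hat(a * k))
print(k_psi, k_a, k_psi / a)
```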

Synthesis

The admissibility condition [1] implies the existence of a finite-energy reproducing kernel, which is a necessary condition for being able to reconstruct the function $f$ from its wavelet coefficients $\tilde f$. One then recovers

$$f(x) = \frac{1}{C_\psi} \int_0^\infty \int_{-\infty}^{\infty} \tilde f(a,b)\, \psi_{a,b}(x)\, \frac{da\, db}{a^2} \qquad [11]$$

which is the inverse wavelet transform.

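The wavelet transform is an isometry (up to the normalization constant $C_\psi$), and one has Parseval's identity. Therefore, the wavelet transform conserves the inner product, and we obtain

$$\langle f, g\rangle = \int_{-\infty}^{\infty} f(x)\, g^*(x)\, dx = \frac{1}{C_\psi} \int_0^\infty \int_{-\infty}^{\infty} \tilde f(a,b)\, \tilde g^*(a,b)\, \frac{da\, db}{a^2} \qquad [12]$$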

As a consequence, the total energy $E$ of a signal can be calculated either in physical space or in wavelet space:

$$E = \int_{-\infty}^{\infty} |f(x)|^2\, dx = \frac{1}{C_\psi} \int_0^\infty \int_{-\infty}^{\infty} |\tilde f(a,b)|^2\, \frac{da\, db}{a^2} \qquad [13]$$

This formula is also the starting point for the definition of wavelet spectra and scalograms (see Wavelets: Application to Turbulence).

Examples

In the following, we apply the continuous wavelet transform to different academic signals using the Morlet wavelet. The Morlet wavelet is complex-valued and consists of a modulated Gaussian with width $k_0/\pi$:

$$\psi(x) = \left(e^{\imath 2\pi x} - e^{-k_0^2/2}\right) e^{-2\pi^2 x^2/k_0^2} \qquad [14]$$

The envelope factor $k_0$ controls the number of oscillations in the wave packet; typically, $k_0 = 5$ is used. The correction factor $e^{-k_0^2/2}$, which ensures the vanishing mean, is very small (about $3.7 \times 10^{-6}$ for $k_0 = 5$) and is often neglected. The Fourier transform is

$$\hat\psi(k) = \frac{k_0}{\sqrt{2\pi}}\, e^{-(k_0^2/2)(1+k^2)} \left(e^{k_0^2 k} - 1\right) \qquad [15]$$
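As a consistency check between [14], [15], and the convention [2], the sketch below computes the Fourier transform of the Morlet wavelet by quadrature and compares it with the closed form at a few wave numbers, assuming $k_0 = 5$; the helper names and quadrature parameters are illustrative choices. It evaluates [15] literally, which is fine for moderate $k$ but would overflow in `math.exp` once $k_0^2 k$ exceeds about 709.

```python
import cmath
import math

K0 = 5.0  # typical envelope factor


def morlet(x, k0=K0):
    """Morlet wavelet, eqn [14]."""
    return (cmath.exp(2j * math.pi * x) - math.exp(-0.5 * k0**2)) \
        * math.exp(-2.0 * math.pi**2 * x**2 / k0**2)


def morlet_hat(k, k0=K0):
    """Closed-form Fourier transform, eqn [15], evaluated literally."""
    return (k0 / math.sqrt(2.0 * math.pi)) * math.exp(-0.5 * k0**2 * (1.0 + k**2)) \
        * (math.exp(k0**2 * k) - 1.0)


def fourier_transform(psi, k, L=8.0, n=8000):
    """Midpoint-rule estimate of eqn [2]: int psi(x) exp(-2 pi i k x) dx on [-L, L]."""
    h = 2.0 * L / n
    total = 0j
    for i in range(n):
        x = -L + (i + 0.5) * h
        total += psi(x) * cmath.exp(-2j * math.pi * k * x)
    return total * h


for k in (0.5, 1.0, 1.3):
    print(k, fourier_transform(morlet, k), morlet_hat(k))
print(math.exp(-0.5 * K0**2))  # size of the correction factor for k0 = 5
```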

Figure 1 shows wavelet analyses of a cosine, two

sines, a Dirac, and a characteristic function. Below
