Ambaum M., Thermal Physics of the Atmosphere


2.5 ENTROPY AND PROBABILITY: A MACROSCOPIC EXAMPLE 31

We find the same expression for entropy change for an ideal gas at constant volume, see Eq. 2.23. Indeed, the heat capacity of a monoatomic ideal gas at constant volume is $3Nk_B/2$.

The initial and final temperatures are related by

$$T_b = T_a + Q_{a\to b}/C, \tag{2.43}$$

with $Q_{a\to b}$ the total amount of heat transferred to the system to change its temperature from $T_a$ to $T_b$. The heat transfer can be either positive, $T_b > T_a$, or negative, $T_b < T_a$. Using this relationship between $T_a$ and $T_b$, we can write the expression for the entropy change as

$$\Delta S_{a\to b} = C \ln\left(1 + \frac{Q_{a\to b}}{C T_a}\right). \tag{2.44}$$

For small heat transfers, $|Q_{a\to b}| \ll C T_a$, we can use the Taylor expansion $\ln(1 + \epsilon) \approx \epsilon$ to find the familiar expression

$$\Delta S_{a\to b} = Q_{a\to b}/T_a. \tag{2.45}$$

For small heat transfers, or large heat capacity, the temperature of the system will not vary by much and the heat transfer occurs at a nearly constant temperature.
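A quick numerical comparison of Eq. 2.44 with its small-transfer limit, Eq. 2.45, may help show when the approximation holds. The sketch below is illustrative only; the function names and the values of $C$, $T_a$ and $Q_{a\to b}$ are assumptions, not taken from the text.

```python
import math

def entropy_change_exact(q, c, t_a):
    """Eq. 2.44: entropy change C ln(1 + Q/(C T_a)) for a constant heat capacity C."""
    return c * math.log(1.0 + q / (c * t_a))

def entropy_change_small_q(q, t_a):
    """Eq. 2.45: first-order approximation Q / T_a, valid for |Q| << C T_a."""
    return q / t_a

# Illustrative values (assumed): C in J/K, T_a in K, Q in J.
c, t_a = 1000.0, 300.0
for q in (3.0, 300.0, 30000.0):
    exact = entropy_change_exact(q, c, t_a)
    approx = entropy_change_small_q(q, t_a)
    print(f"Q/(C T_a) = {q / (c * t_a):.0e}: exact {exact:.6g} J/K, approx {approx:.6g} J/K")
```

The two results agree closely while $Q_{a\to b}/(C T_a)$ is small and separate as it approaches order one, with the exact value always the smaller of the two because $\ln(1+\epsilon) < \epsilon$ for $\epsilon \neq 0$.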

The change of entropy of the system is only dependent on its initial and final temperatures; it does not matter what temperatures the system had in between. Suppose we change the system from temperature $T_a$ to $T_c$, but first change it from $T_a$ to $T_b$ and then from $T_b$ to $T_c$. The total entropy change in the combined transformation is

$$\Delta S_{a\to b\to c} = \Delta S_{a\to b} + \Delta S_{b\to c} = C \ln(T_b/T_a) + C \ln(T_c/T_b) = C \ln(T_c/T_a). \tag{2.46}$$

This is an important property: we can construct any number of ways for the system to reach its final equilibrium state, but the resulting entropy change depends only on the initial and final states. It demonstrates how, for this simple system, the entropy is a function of state.
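The path independence in Eq. 2.46 can be checked numerically by summing $C \ln(T_{i+1}/T_i)$ over an arbitrary sequence of intermediate temperatures. This is a minimal sketch; the heat capacity, the end temperatures and the randomly drawn intermediate temperatures are hypothetical choices.

```python
import math
import random

def entropy_change(c, t_start, t_end):
    """Entropy change C ln(T_end / T_start) of a body with constant heat capacity C."""
    return c * math.log(t_end / t_start)

def entropy_along_path(c, temperatures):
    """Sum the entropy changes over successive legs T_0 -> T_1 -> ... -> T_n."""
    return sum(entropy_change(c, t0, t1) for t0, t1 in zip(temperatures, temperatures[1:]))

c, t_a, t_c = 1000.0, 280.0, 310.0
rng = random.Random(0)
# A path through five arbitrary intermediate temperatures between 200 K and 400 K.
path = [t_a] + [rng.uniform(200.0, 400.0) for _ in range(5)] + [t_c]
print(entropy_along_path(c, path), entropy_change(c, t_a, t_c))
```

Whatever intermediate temperatures are drawn, the logarithms telescope and the two printed numbers agree to rounding error.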

Now consider two such systems with temperatures $T_1$ and $T_2$, respectively. If we bring the two systems into thermal contact, heat will start to flow from the high temperature system to the low temperature system. Because the systems have the same heat capacity, the final temperature for both systems will be the average $T_a$ of the two initial temperatures,

$$T_a = (T_1 + T_2)/2. \tag{2.47}$$

The total entropy change in the two systems is

$$\Delta S = \Delta S_1 + \Delta S_2 = C \ln(T_a/T_1) + C \ln(T_a/T_2). \tag{2.48}$$

32 CH 2 THE FIRST AND SECOND LAWS

[Figure 2.6: $\Delta S/C$ plotted against $\Delta T/T_a$.]

FIGURE 2.6 Entropy gain, $\Delta S$, in units of heat capacity, $C$, associated with removing a temperature difference $\Delta T$ between two systems with an average temperature $T_a$. Thick line: exact result of Eq. 2.50; thin line: quadratic approximation of Eq. 2.51.

The latter expression can be most easily analyzed if we write

$$T_1 = T_a + \Delta T/2, \qquad T_2 = T_a - \Delta T/2, \tag{2.49}$$

where $\Delta T = T_1 - T_2$. Note that $\Delta T$ can be either positive or negative. The total entropy change, on putting the two systems into thermal contact, can then be written

$$\Delta S = C \ln\left(\frac{T_a^2}{T_a^2 - \Delta T^2/4}\right). \tag{2.50}$$

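Figure 2.6 shows this total entropy change as a function of $\Delta T$. Because $T_a^2 - \Delta T^2/4 < T_a^2$ whenever $\Delta T \neq 0$, the argument of the logarithm exceeds one, so the entropy change is positive for any nonzero initial temperature difference (and zero when there is none); the heat conduction system satisfies the second law of thermodynamics.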

For small differences in the initial temperatures, the total entropy change can be approximated with the usual Taylor expansion to

$$\Delta S = \frac{C\,\Delta T^2}{4 T_a^2}, \quad \text{if } \Delta T^2 \ll T_a^2. \tag{2.51}$$
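Writing $x = \Delta T/T_a$, Eqs. 2.50 and 2.51 become $\Delta S/C = -\ln(1 - x^2/4)$ and $\Delta S/C = x^2/4$. The sketch below tabulates both, roughly the data behind Figure 2.6; the sampling of $x$ and the function names are arbitrary, assumed choices.

```python
import math

def delta_s_exact(x):
    """Eq. 2.50 in units of C, with x = dT / T_a: -ln(1 - x^2 / 4)."""
    return -math.log(1.0 - x * x / 4.0)

def delta_s_quadratic(x):
    """Eq. 2.51 in units of C: x^2 / 4, valid for x^2 << 1."""
    return x * x / 4.0

# |x| must stay below 2, otherwise the colder system would start at T <= 0.
for i in range(-19, 20, 2):
    x = i / 10.0
    print(f"{x:5.1f}  exact {delta_s_exact(x):.4f}  quadratic {delta_s_quadratic(x):.4f}")
```

Since $-\ln(1-y) \geq y$ for $0 \le y < 1$, the exact curve lies on or above the quadratic one, and both vanish only at $\Delta T = 0$.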

Now using the relationship between entropy and the probability of a particular state, Eq. 2.38, we can write the ratio of the probability of finding the two systems with a temperature difference $\Delta T$ to the probability of finding them with no temperature difference as

$$\frac{P(\Delta T)}{P(0)} = \exp\left(-\frac{C\,\Delta T^2}{4 k_B T_a^2}\right). \tag{2.52}$$

This is a Gaussian distribution, with the variance of the temperature fluctuations given by

$$\langle \Delta T^2 \rangle = 2 k_B T_a^2 / C. \tag{2.53}$$


So if the heat capacity of the systems increases, the temperature fluctuations decrease, as expected. The heat capacity of any system increases with the number of molecules $N$ in the system. For a monoatomic gas, we have $C = 3Nk_B/2$, so the relative size of the temperature fluctuations is

$$\langle \Delta T^2 \rangle / T_a^2 = 4/(3N). \tag{2.54}$$

For macroscopic systems, spontaneous macroscopic fluctuations are vanishingly small.
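As a numerical check on Eqs. 2.53 and 2.54, the sketch below evaluates the second moment of the Gaussian weight of Eq. 2.52 by a simple grid sum and compares it with $2k_B T_a^2/C$, then evaluates the relative size of the fluctuations for one mole of a monoatomic gas. The grid parameters, the temperature, the 100-molecule example and the function names are assumptions chosen for illustration.

```python
import math

K_B = 1.380649e-23      # Boltzmann constant, J/K (exact SI value)
N_A = 6.02214076e23     # Avogadro constant, 1/mol (exact SI value)

def variance_by_grid_sum(c, t_a, n=20000):
    """<dT^2> under the weight exp(-C dT^2 / (4 k_B T_a^2)) of Eq. 2.52.

    The expected width only sets the grid extent (+/- 12 widths); the moment itself
    is a plain ratio of sums over a uniform grid, so the grid spacing cancels.
    """
    width = math.sqrt(2.0 * K_B * t_a**2 / c)
    half_range = 12.0 * width
    step = 2.0 * half_range / n
    num = den = 0.0
    for i in range(n + 1):
        dt = -half_range + i * step
        weight = math.exp(-c * dt * dt / (4.0 * K_B * t_a**2))
        num += dt * dt * weight
        den += weight
    return num / den

def relative_rms_monatomic(n_molecules):
    """Eq. 2.54: sqrt(<dT^2>) / T_a = sqrt(4 / (3 N)) when C = 3 N k_B / 2."""
    return math.sqrt(4.0 / (3.0 * n_molecules))

t_a = 300.0
c_small = 1.5 * 100 * K_B   # monatomic gas of 100 molecules (illustrative)
print(variance_by_grid_sum(c_small, t_a) / t_a**2, 4.0 / (3.0 * 100))
print(relative_rms_monatomic(N_A))   # one mole: about 1.5e-12
```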

A useful way of rewriting the above fluctuation equation is

$$\frac{C \langle \Delta T^2 \rangle}{4 T_a} = \frac{1}{2} k_B T_a. \tag{2.55}$$

The left-hand side is the average energy required to produce a temperature fluctuation of the size $\Delta T$ (this energy is $T_a \Delta S$, see Eq. 2.51). We see that this energy is $k_B T_a/2$, which is precisely what would be expected from the equipartition theorem: any accessible degree of freedom in the system contains, on average, $k_B T_a/2$ of energy.

Now consider a large system made up of many such subsystems. If we put heat into the system, perhaps in the subsystems representing the boundary of the large system, then the entropy of the large system will increase reversibly. The system will be out of equilibrium, however, because the temperatures of the subsystems will now differ. If we allow this heat to be redistributed equally amongst all subsystems, the entropy continues to increase. We can generalize Eq. 2.50 to demonstrate that the entropy will increase when the temperature difference between two subsystems decreases through heat exchange. The final equilibrium state must have constant temperature throughout. It will also be the maximum entropy state (amongst all possible redistributions of the heat throughout the subsystems) because any redistribution of heat that increases the temperature difference between two subsystems would reduce the entropy.

The maximum entropy state thus defines the final equilibrium state of the large system when there are no internal constraints that keep the heat from redistributing over the subsystems.
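The argument that heat exchange between subsystems drives the large system towards uniform temperature, and hence maximum entropy, can be illustrated with a toy model. The sketch assumes equal heat capacities (set to 1), lets randomly chosen pairs remove a fixed fraction of their temperature difference, and tracks $\sum_i \ln T_i$; the model, its parameters and the function names are hypothetical.

```python
import math
import random

def total_entropy(c, temps, t_ref=1.0):
    """Sum of C ln(T_i / T_ref) over the subsystems; t_ref only adds a constant."""
    return sum(c * math.log(t / t_ref) for t in temps)

def relax(temps, steps, fraction=0.5, seed=1):
    """Let randomly chosen pairs of subsystems exchange heat.

    Each exchange removes `fraction` of the pair's temperature difference. With equal
    heat capacities (C = 1 here) the hotter member loses exactly what the colder one
    gains, so the total energy sum(C T_i) is conserved.
    """
    rng = random.Random(seed)
    temps = list(temps)
    history = [total_entropy(1.0, temps)]
    for _ in range(steps):
        i, j = rng.sample(range(len(temps)), 2)
        shift = 0.5 * fraction * (temps[i] - temps[j])
        temps[i] -= shift
        temps[j] += shift
        history.append(total_entropy(1.0, temps))
    return temps, history

temps0 = [250.0, 260.0, 300.0, 320.0, 350.0]   # illustrative initial temperatures, K
final, history = relax(temps0, steps=2000)
print(final)                      # every entry close to the mean, 296 K
print(history[0], history[-1])    # total entropy (units of C) has increased
```

Each exchange raises the pair's entropy because $(T_i - s)(T_j + s) > T_i T_j$ for $0 < s < T_i - T_j$, which is the two-subsystem result of Eq. 2.50 applied repeatedly; the uniform state is where no exchange can raise it further.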

We can impose extra macroscopic constraints on the system that would prevent the heat from being redistributed equally amongst the subsystems. But the large system would then again evolve to a maximum entropy state consistent with the externally imposed constraints. The entropy of this constrained system is necessarily lower than the entropy of the unconstrained system. In this sense, entropy measures how many system configurations are consistent with the macroscopic constraints.¹⁴

¹⁴ The configuration freedom of a system can be quantified by its information entropy, as defined by Claude Shannon. Edwin Jaynes showed how Shannon's information entropy maps onto the thermodynamic entropy we use here. See Shannon, C. E. (1948) Bell System Technical Journal 27, 379–423, 623–656; Jaynes, E. T. (1957) Physical Review 106, 620–630; Jaynes, E. T. (1965) American Journal of Physics 33, 391–398. See also Problem 4.8.


2.6 ENTROPY AND PROBABILITY: A STOCHASTIC EXAMPLE

A stochastic model for molecules in a cylinder illustrates some microscopic aspects of the second law. Consider a system of molecules in a cylinder that is divided into two equal subvolumes, say ‘left’ and ‘right’. The molecules can move freely between the two subvolumes without preference for direction. We assume that the chance $P_{lr}$ per unit time that a given molecule moves from left to right is the same as the chance $P_{rl}$ per unit time that a given molecule moves from right to left. This is microscopic reversibility,

$$P_{lr} = P_{rl}. \tag{2.56}$$

If at some point there are $N_l$ molecules to the left and $N_r = N - N_l$ molecules to the right, then the chance per unit time that any one of the molecules moves from left to right is $N_l P_{lr}$, and the chance per unit time that any one moves from right to left is $N_r P_{rl}$. By Eq. 2.56, these chances have a ratio $N_l/N_r$, and if $N_l > N_r$ then there is a bigger chance that a molecule moves from left to right, and vice versa. In equations, we can write the evolution of $N_l$ as

$$\frac{dN_l}{dt} = N_r P_{rl} - N_l P_{lr}, \tag{2.57}$$

where the right-hand side is the sum of a gain term and a loss term. We now set $P_{lr} = P_{rl}$ and $N_l + N_r = N$ to find

$$\frac{dN_l}{dt} = (N - 2N_l)\, P_{lr}, \tag{2.58}$$

which has the solution

$$N_l(t) = \frac{N}{2} + e^{-2P_{lr} t}\left(N_l(0) - \frac{N}{2}\right). \tag{2.59}$$

So $N_l$ relaxes exponentially, with e-folding time $1/(2P_{lr})$, to its equilibrium value $N/2$. The same is true for $N_r$. This regression to the mean is the second law as applied to this simple system.
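The relaxation in Eq. 2.59 describes the ensemble mean. A Monte Carlo sketch of the two-subvolume model makes the stochastic picture concrete: each molecule switches side with probability $P_{lr}\,\Delta t$ per short time step, and the average over many runs is compared with Eq. 2.59. The discrete time stepping, the parameter values and the helper names are assumptions made for this illustration, not part of the original model.

```python
import math
import random

def simulate_left_count(n, n_left0, p_hop, dt, n_steps, rng):
    """One realisation of the two-subvolume model; returns N_l at every time step.

    Each molecule independently switches side with probability p_hop * dt per step,
    a discrete-time stand-in (assumed here) for the rate P_lr = P_rl = p_hop.
    """
    q = p_hop * dt
    n_left = n_left0
    counts = [n_left]
    for _ in range(n_steps):
        to_right = sum(1 for _ in range(n_left) if rng.random() < q)
        to_left = sum(1 for _ in range(n - n_left) if rng.random() < q)
        n_left += to_left - to_right
        counts.append(n_left)
    return counts

def mean_field(n, n_left0, p_hop, t):
    """Eq. 2.59: N_l(t) = N/2 + exp(-2 P_lr t) (N_l(0) - N/2)."""
    return n / 2 + math.exp(-2.0 * p_hop * t) * (n_left0 - n / 2)

rng = random.Random(42)
n, n_left0, p_hop, dt, n_steps, runs = 200, 200, 1.0, 0.01, 200, 40
ensemble = [simulate_left_count(n, n_left0, p_hop, dt, n_steps, rng) for _ in range(runs)]
for k in (0, 50, 100, 200):
    avg = sum(run[k] for run in ensemble) / runs
    print(f"t = {k * dt:4.2f}: ensemble mean {avg:6.1f}, Eq. 2.59 {mean_field(n, n_left0, p_hop, k * dt):6.1f}")
```

Individual runs scatter around the exponential curve by an amount comparable to the width $\sigma$ of Eq. 2.65; the small systematic offset comes from the finite time step, for which the mean deviation shrinks by a factor $1 - 2P_{lr}\Delta t$ per step instead of $e^{-2P_{lr}\Delta t}$.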

We can also analyze this system in terms of its entropy. It is a standard result from combinatorics that the number of states with $N_l$ molecules in the left subvolume is

$$W(N_l) = \frac{N!}{N_l!\,(N - N_l)!}. \tag{2.60}$$

The situation is equivalent to considering the number of states with $N_r$ molecules in the right subvolume because $W(N_r) = W(N - N_l) = W(N_l)$. The maximum of $W$ is achieved for $N_l = N_r = N/2$.


The total number of possible microstates is $2^N$ because each molecule can be in either the left or right subvolume. This means that the probability of finding $N_l$ molecules in the left subvolume is $P(N_l) = W(N_l)\,2^{-N}$. The entropy of the state follows from the Boltzmann definition $S(N_l) = k_B \ln W(N_l)$.

Because of Eq. 2.59, the entropy will on average relax exponentially to its equilibrium value $k_B \ln W(N/2)$, which is a maximum.

We can quantify this further by using Stirling's approximation for the factorial of a number,

$$n! \approx \sqrt{2\pi n}\,(n/e)^n, \tag{2.61}$$

which is accurate to better than 1% for $n \geq 10$. Using Stirling's approximation we can write

$$W(N_l) = \frac{1}{\sqrt{2\pi N}}\,\frac{N^{N+1}}{N_l^{N_l+1/2}\,(N - N_l)^{N - N_l + 1/2}}. \tag{2.62}$$
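The accuracy of Eq. 2.61, and of Eq. 2.62 built from it, can be checked directly against exact factorials and binomial coefficients. This is a small sketch using Python's `math.factorial` and `math.comb`; the test values of $n$, $N$ and $N_l$ and the helper names are arbitrary choices.

```python
import math

def stirling(n):
    """Eq. 2.61: n! is approximately sqrt(2 pi n) (n / e)^n."""
    return math.sqrt(2.0 * math.pi * n) * (n / math.e) ** n

def log_w_stirling(n, n_left):
    """Natural log of Eq. 2.62 (the log form avoids overflow for large N)."""
    n_right = n - n_left
    return ((n + 1) * math.log(n)
            - (n_left + 0.5) * math.log(n_left)
            - (n_right + 0.5) * math.log(n_right)
            - 0.5 * math.log(2.0 * math.pi * n))

for n in (5, 10, 20, 50):
    print(n, stirling(n) / math.factorial(n))   # ratio approaches 1 as n grows

# Eq. 2.62 against the exact count of Eq. 2.60 for N = 100, N_l = 40.
print(math.exp(log_w_stirling(100, 40)) / math.comb(100, 40))
```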

We can now use a Taylor expansion around $N_l = N/2$ in terms of the small number $(N_l - N/2)/N$ to find an approximation for the logarithm of $W$. After some algebra we find

$$\ln W(N_l) = \ln W(N/2) - \frac{(N_l - N/2)^2}{N/2} + \ldots \tag{2.63}$$

where the dots represent small terms of higher order in $(N_l - N/2)/N$ and $W(N/2) = 2^{N+1}/\sqrt{2\pi N}$. Ignoring the small, higher order terms, this can be rewritten as

$$W(N_l) = W(N/2)\,\exp\left[-\frac{1}{2}\left(\frac{N_l - N/2}{\sigma}\right)^2\right], \tag{2.64}$$

with the width of the distribution

$$\sigma = \sqrt{N/4}. \tag{2.65}$$
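To see how well the Gaussian form of Eq. 2.64 represents the exact count of Eq. 2.60, the sketch below compares $\ln W$ from both for $N = 1000$ at deviations of up to about $4\sigma$, using $\ln W(N/2) = (N+1)\ln 2 - \tfrac{1}{2}\ln(2\pi N)$ and $\sigma^2 = N/4$ from Eq. 2.65. The choice of $N$, the sample deviations and the function names are illustrative.

```python
import math

def log_w_exact(n, n_left):
    """ln W(N_l) from Eq. 2.60; math.log accepts Python's arbitrary-size integers."""
    return math.log(math.comb(n, n_left))

def log_w_gaussian(n, n_left):
    """ln of Eq. 2.64, with W(N/2) = 2^(N+1) / sqrt(2 pi N) and sigma^2 = N/4."""
    sigma2 = n / 4
    log_w_max = (n + 1) * math.log(2) - 0.5 * math.log(2 * math.pi * n)
    return log_w_max - 0.5 * (n_left - n / 2) ** 2 / sigma2

n = 1000
sigma = math.sqrt(n / 4)
for dev in (0, 10, 16, 32, 64):
    k = n // 2 + dev
    print(f"N_l - N/2 = {dev:3d} ({dev / sigma:4.1f} sigma): "
          f"exact {log_w_exact(n, k):.3f}, Gaussian {log_w_gaussian(n, k):.3f}")
```

The two agree to a small fraction of a unit in $\ln W$ near the peak, and the gap grows only slowly in the tails, where the neglected higher-order terms of Eq. 2.63 start to matter.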

This means that the number of possible microstates becomes much smaller than its maximum value at $N_l = N/2$ if $N_l$ deviates by more than $\sigma$ from this value. The relative width of the distribution is measured by

$$\sigma/(N/2) = 1/\sqrt{N}. \tag{2.66}$$

This result is analogous to the result for thermal fluctuations in the conduction system, see Eq. 2.54. For macroscopic systems this number is very small: for $N = N_A$, Avogadro's number, the relative width of the distribution is about one part in $10^{12}$. There is a very small probability indeed that any observed value of $N_l$ is measurably different from its mean $N/2$.
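The estimate of one part in $10^{12}$ follows from Eq. 2.66 with $N = N_A$. The short sketch below simply evaluates $1/\sqrt{N}$ for a few system sizes; the smaller sizes are arbitrary comparison values and the function name is hypothetical.

```python
import math

N_A = 6.02214076e23  # Avogadro constant (exact SI value), 1/mol

def relative_width(n):
    """Eq. 2.66: sigma / (N/2) = 1 / sqrt(N)."""
    return 1.0 / math.sqrt(n)

for n in (100, 10**6, N_A):
    print(f"N = {n:.3g}: relative width {relative_width(n):.2g}")
```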


The entropy can now be approximated by

$$\frac{S(N_l)}{k_B} = \frac{S(N/2)}{k_B} - \frac{1}{2}\left(\frac{N_l - N/2}{\sigma}\right)^2. \tag{2.67}$$

So the entropy is maximum for $N_l = N/2$ and it falls off rapidly for other values of $N_l$.

The narrowness of the peak of the distribution means that macroscopic variables are well defined; their precision is usually limited by the accuracy of the measuring device rather than by the microscopic variations in molecule densities. Even though the system does explore the whole phase space of microstates, the macroscopic variables in practice do not deviate from their mean values. Because the maximum entropy state is so much more probable than any other state, the thermodynamic equilibrium state is a very accurate description of the observed state of the system.

PROBLEMS

2.1. Boltzmann definition of entropy. Suppose the entropy $S$ is only a function of the number of microstates $W$ so that $S = g(W)$. For two independent systems the numbers of microstates multiply, so because $S$ is extensive we need to have

$$g(W_1 W_2) = g(W_1) + g(W_2).$$

Show that the only possibility for $g$ is that $g(W) \propto \ln W$. Hint: start by differentiating the above formula twice, first with respect to $W_1$ and then with respect to $W_2$, to find a differential equation for $g$ with variable $x = W_1 W_2$.

2.2. Stirling engine. Atmospheric motion gets its energy from thermodynamic cycles. Here we examine a version of a thermodynamic cycle which forms the basis of the Stirling engine. The Stirling engine is a simple, high-efficiency engine used in small-scale applications where energy needs to be generated at relatively low excess temperatures (for example, from waste heat in an office or factory).

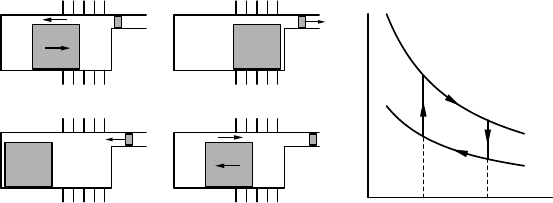

Figure 2.7 shows a schematic of a Stirling engine and the associated thermodynamic cycle of the working fluid, which consists of two isothermal ($dT = 0$) and two isochoric ($dV = 0$) transformations. Assume the working fluid is an ideal gas of $n$ moles.

(i) Show that the total work output $L$ of the cycle ABCDA equals

$$L = nR\,(T_1 - T_0) \ln(V_1/V_0).$$

(ii) The heating and cooling branches A and C can be performed with no net energy input because heat extracted from the working fluid on the cooling stroke can be stored and re-injected into the working fluid on the heating stroke. In a real Stirling engine this is accomplished by pushing the working fluid through a regenerator in the displacement part of the cycle. A regenerator is often some porous material which stores the heat extracted from the working fluid and which offers little friction to the motion of the fluid (two incompatible features in practice). Show that the total heat input $Q$ during the cycle ABCDA equals

$$Q = nR\,T_1 \ln(V_1/V_0).$$

[Figure 2.7: left panel, four stages A, B, C, D of the engine, each with a cold and a hot side; right panel, pV diagram of the cycle between volumes $V_0$ and $V_1$ and isotherms $T_0$ and $T_1$.]

FIGURE 2.7 Left panel: Schematic of four stages in the cycle of a Stirling engine. The engine has a hot and a cold side. The power piston (small grey block) moves a flywheel (not depicted) which in turn moves the displacer piston (large grey block). The displacer piston moves the working fluid isochorically (at constant volume, so no work is performed or extracted) between the hot and the cold side (parts A and C of the cycle). When the working fluid is on the hot side it expands and drives the power piston (part B of the cycle). When the working fluid is on the cold side it is compressed by the power piston (part D of the cycle). Right panel: thermodynamic transformations of the working fluid on a pV diagram.

(iii) The efficiency $\eta$ of an engine is the ratio of work output to heat input over a cycle (equivalently, of power output to power input),

$$\eta = L/Q.$$

Show that for the Stirling engine the efficiency equals

$$\eta = 1 - T_0/T_1. \tag{2.68}$$

This equation for the efficiency turns out to be valid for any reversible and frictionless engine operating between heat reservoirs at temperatures $T_1$ and $T_0$, irrespective of the engine design or substance of the working fluid. For real cycles, the efficiency is always less, which is an expression of the second law of thermodynamics.

(iv) Estimate the maximum efficiencies of the climate system and a hurricane.
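For readers who want to check their answers to parts (i) to (iii) of Problem 2.2 with numbers, the sketch below evaluates the work, the heat input and the efficiency of the ideal-regenerator cycle for one illustrative set of values. It assumes, as part (ii) does, that the isochoric legs need no net heat; the chosen $n$, temperatures, volumes and the function name are arbitrary.

```python
import math

R = 8.314462618  # molar gas constant, J/(mol K)

def stirling_cycle(n_mol, t_cold, t_hot, v_small, v_large):
    """Work, heat input and efficiency of the ideal-regenerator Stirling cycle.

    Isothermal expansion at t_hot absorbs n R T_1 ln(V_1/V_0); isothermal compression
    at t_cold rejects n R T_0 ln(V_1/V_0); the isochoric legs do no work and, with a
    perfect regenerator (assumed), need no net heat.
    """
    log_ratio = math.log(v_large / v_small)
    q_in = n_mol * R * t_hot * log_ratio
    q_out = n_mol * R * t_cold * log_ratio
    work = q_in - q_out
    return work, q_in, work / q_in

work, q_in, eta = stirling_cycle(1.0, 300.0, 400.0, 1.0e-3, 2.0e-3)
print(work, q_in, eta, 1 - 300.0 / 400.0)
```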

3 General applications

Now that we have reviewed the first and second laws of thermodynamics we can start to look at some of their basic consequences. This will provide us with relationships that will be used throughout the rest of the book and that are also applicable outside the field of atmospheric physics.

In this chapter we only consider simple substances. Simple substances have only one constituent, or are accurately described as if made up of one constituent, as opposed to compound substances where variations in composition would contribute to variations in internal energy through their chemical potentials. Dry air is a good example of a simple substance: the bulk composition of dry air is constant up to great heights. Sea water would be a simple substance if we ignored any variation in the salinity. However, variation in salinity is an important driver of the ocean currents, so any approximation of sea water as a simple substance has limited applicability.

The developments in this chapter are largely of general validity. We often apply these results specifically to an ideal gas. But the more general approach will be advantageous, for example when considering the more complex situation of a vapour–dry air mixture in Chapter 5.

3.1 THERMODYNAMIC POTENTIALS

First we introduce the four thermodynamic potentials for simple substances: the internal energy, the enthalpy, the Helmholtz free energy, and the Gibbs function. These thermodynamic potentials are energy-like quantities, which can be defined as transformed versions of the internal energy. Each of them has particular applications. For example, enthalpy plays a key role in the thermodynamics of flow processes, as discussed in Section 3.5. The four thermodynamic potentials lead to the four Maxwell relations, general and non-obvious differential relationships between thermodynamic variables.

The notation for partial differentiation used here and throughout the rest of this book is reviewed in Appendix A.


Thermal Physics of the Atmosphere Maarten H. P. Ambaum

© 2010 John Wiley & Sons, Ltd. ISBN: 978-0-470-74515-1


Internal energy

In differential form, the first law of thermodynamics for any simple substance is written as

$$du = T\,ds - p\,dv. \tag{3.1}$$

The form with specific variables is used here, but all the following derivations can be equally performed using extensive variables. This formulation of the first law is completely general; it does not depend on the particular substance or what conditions the substance is in. One way to read the first law is that the internal energy for any simple substance is a natural function of the specific entropy $s$ and the specific volume $v$, so $u = u(s, v)$. The first law then defines the partial derivatives of $u$ with respect to each of the variables,

$$T = \left(\frac{\partial u}{\partial s}\right)_v, \qquad p = -\left(\frac{\partial u}{\partial v}\right)_s. \tag{3.2}$$

These relationships are always true, whether the system is a gas in a cylinder, a cup of water, or a stone.

For compound substances, such as sea water (in which salinity plays a role), a chemical mixture, or a system where various phases occur simultaneously, the first law is still valid, but only in its extensive form, $dU = T\,dS - p\,dV$, and only when there are no other fluxes into or out of the system (such as salinity fluxes, in the case of sea water). As long as the system only interacts with its environment through pressure work or heat exchange, the first law in extensive form is the appropriate expression of conservation of total energy in the system.

For repeated derivatives of a smooth function, the order of differentiation is not important. So on differentiating the specific internal energy with respect to $s$ and then with respect to $v$ we get the same result if we perform these differentiations in reverse order. Using Eq. 3.2, the result is

$$\left(\frac{\partial T}{\partial v}\right)_s = -\left(\frac{\partial p}{\partial s}\right)_v. \tag{3.3}$$

This relationship is called a Maxwell relation. It can also be written in extensive variables. The Maxwell relation is general because it is derived from the general form of the first law; it does not depend on the particular substance.
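The Maxwell relation of Eq. 3.3 can be checked numerically for a particular substance. The sketch below assumes an ideal gas with constant $c_v$, for which $s = c_v \ln(T/T_0) + R \ln(v/v_0)$ gives $T(s, v)$ explicitly, and compares central finite differences of both sides; the gas constants, the reference state, the step sizes and the function names are illustrative assumptions.

```python
import math

R_D = 287.0            # specific gas constant, J/(kg K); illustrative value close to dry air
C_V = 717.0            # specific heat at constant volume, J/(kg K); assumed constant
T_REF, V_REF = 273.15, 0.78   # reference state where s = 0 (illustrative), K and m^3/kg

def temperature(s, v):
    """T(s, v) from s = c_v ln(T/T_REF) + R ln(v/V_REF)."""
    return T_REF * math.exp(s / C_V) * (V_REF / v) ** (R_D / C_V)

def pressure(s, v):
    """Ideal gas law p = R T / v."""
    return R_D * temperature(s, v) / v

def central_diff(f, x, h):
    """Second-order accurate central difference approximation of f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)

s, v = 100.0, 0.9
dtemp_dv_const_s = central_diff(lambda vv: temperature(s, vv), v, 1.0e-6)
dp_ds_const_v = central_diff(lambda ss: pressure(ss, v), s, 1.0e-3)
print(dtemp_dv_const_s, -dp_ds_const_v)   # the two sides of Eq. 3.3
```

For this ideal gas both sides equal $-RT/(c_v v)$ analytically, so the printed values should agree to the accuracy of the finite differences.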

For simple substances, there are four Maxwell relations in all. We will derive these next. For mixtures or systems where the internal energy depends on other processes, there are more Maxwell relations corresponding to double differentiation, including the additional pairs of generalized forces and displacements.


Enthalpy

The specific enthalpy $h$ is defined as

$$h = u + pv. \tag{3.4}$$

The specific enthalpy $h$ is an intensive variable, while enthalpy $H = U + pV$ is an extensive variable. The differential form of the specific enthalpy follows from $dh = du + p\,dv + v\,dp$ and the first law in differential form, $du = T\,ds - p\,dv$. We find that

$$dh = T\,ds + v\,dp. \tag{3.5}$$

Enthalpy is a natural function of entropy and pressure. In mathematical terms, enthalpy is a Legendre transformation of the internal energy, where a function of variables $v$ and $s$ is transformed to a new function of variables $p$ and $s$.¹⁵ There are several transformed versions of the internal energy (three in all for a substance made up of a single component; for substances made up of several components more transformed versions can be defined); these transformed versions are called thermodynamic potentials.

Enthalpy plays a role when there is flow of substance or when considering open systems. Section 3.5 discusses this use of enthalpy. However, like all the thermodynamic potentials, it is initially perhaps easiest to consider enthalpy just as a transformed version of internal energy with some useful properties.

The first derivatives of enthalpy give

$$T = \left(\frac{\partial h}{\partial s}\right)_p, \qquad v = \left(\frac{\partial h}{\partial p}\right)_s, \tag{3.6}$$

and the double derivatives give a second Maxwell relation

$$\left(\frac{\partial T}{\partial p}\right)_s = \left(\frac{\partial v}{\partial s}\right)_p, \tag{3.7}$$

which, as before, is generally valid for any simple substance.
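The same kind of numerical check works for Eq. 3.7. This sketch again assumes an ideal gas, now with constant $c_p$, so that $s = c_p \ln(T/T_0) - R \ln(p/p_0)$ gives $T(s, p)$ and $v = RT/p$; the constants, the reference state, the step sizes and the function names are illustrative.

```python
import math

R_D = 287.0            # specific gas constant, J/(kg K); illustrative value close to dry air
C_P = 1004.0           # specific heat at constant pressure, J/(kg K); assumed constant
T_REF, P_REF = 273.15, 1.0e5   # reference state where s = 0 (illustrative), K and Pa

def temperature(s, p):
    """T(s, p) from s = c_p ln(T/T_REF) - R ln(p/P_REF)."""
    return T_REF * math.exp(s / C_P) * (p / P_REF) ** (R_D / C_P)

def specific_volume(s, p):
    """Ideal gas law v = R T / p."""
    return R_D * temperature(s, p) / p

def central_diff(f, x, h):
    """Second-order accurate central difference approximation of f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)

s, p = 50.0, 8.0e4
lhs = central_diff(lambda pp: temperature(s, pp), p, 1.0)           # (dT/dp) at constant s
rhs = central_diff(lambda ss: specific_volume(ss, p), s, 1.0e-3)    # (dv/ds) at constant p
print(lhs, rhs)
```

Analytically both sides reduce to $RT/(c_p\,p)$ for this gas.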

¹⁵ Legendre transforms occur in several areas of physics, thermodynamic potentials being one of them. Another notable example is the transformation between the Lagrangian and the Hamiltonian of a system in mechanics. The Legendre transform of a function $f$ of variable $x$ is a function $g$ of variable $z$, where $z$ is defined through $z = df/dx$ and $g$ is defined through $f + g = xz$. More explicitly, the definition of $z$ leads to an implicit relation $x = x(z)$, and the Legendre transform $g$ is then $g(z) = x(z)\,z - f(x(z))$. For functions with curvature of a single sign, the relation $z = df/dx$ can be inverted and the transform is invertible: the Legendre transform of $g$ is $f$, so the transform is its own inverse.
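The definition in this footnote can be illustrated numerically. For a convex function the value $g(z) = x(z)\,z - f(x(z))$ coincides with the maximum of $xz - f(x)$ over $x$, so a sketch can approximate the transform by maximising on a grid; that shortcut, the example $f(x) = e^x$ (whose transform is $z \ln z - z$ for $z > 0$), the grid limits and the function names are all assumptions made for illustration.

```python
import math

def legendre_on_grid(f, grid, z):
    """Approximate the Legendre transform g(z) = max over x of [x z - f(x)] on a grid.

    For strictly convex f the maximum sits where z = f'(x), so this matches
    g(z) = x(z) z - f(x(z)) as long as the stationary point lies inside the grid.
    """
    return max(x * z - f(x) for x in grid)

def g_exact(z):
    """Analytic Legendre transform of exp: with z = e^x, g(z) = z ln z - z (z > 0)."""
    return z * math.log(z) - z

x_grid = [-5.0 + 10.0 * i / 20000 for i in range(20001)]    # x in [-5, 5]
z_grid = [0.01 + 20.0 * i / 20000 for i in range(20001)]    # z in [0.01, 20.01]

# Forward transform of f = exp, compared with the analytic result.
for z in (0.5, 1.0, 2.0, 10.0):
    print(z, legendre_on_grid(math.exp, x_grid, z), g_exact(z))

# Transforming g again recovers f = exp, since the transform is its own inverse.
for x in (-1.0, 0.0, 1.0):
    print(x, legendre_on_grid(g_exact, z_grid, x), math.exp(x))
```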